Your User Research Questions Answered: A Recap of Our AMA with Behzod Sirjani

Jun 6, 2024

Earlier this month, Behzod Sirjani, Program Partner at Reforge, founder of Yet Another Studio and former research leader at Slack and Meta, joined us for an insightful AMA on User Research.

In this conversation, he spoke about strategies for socializing customer insights across an organization, building an effective research strategy from scratch at a startup, AI tools for qualitative feedback analysis, and the key to a successful rapid research program.

Read on for some of his advice as you head into your next project or study.

For those interested in diving deeper and learning alongside Behzod, he is teaching Effective Customer Conversations live in July. It’s a 2-week course with weekly live sessions to dive deeper into the content, explore case studies drawing from his experience, and ask direct questions specific to your use case.

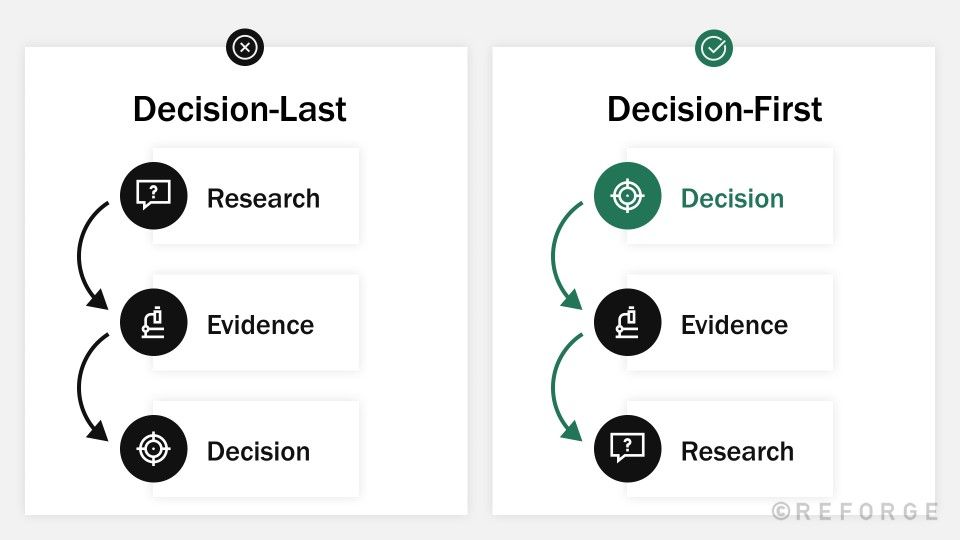

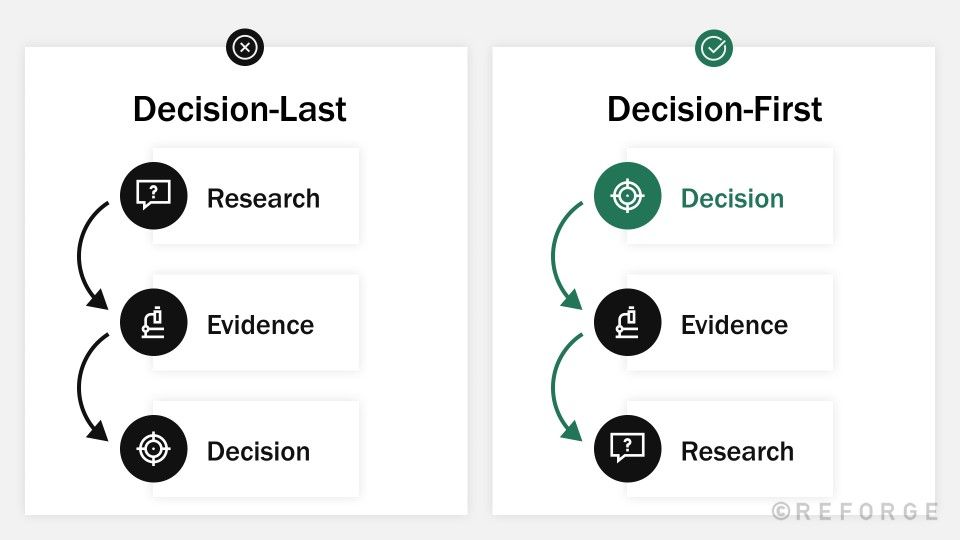

Q: How can I apply your 'decision first' framework to our usual user feedback on the product?

**Behzod:** The best way to think about applying the decision-first framework here is to start by asking yourself “Why am I trying to get customer feedback?” and “What will I do when I get it?”

Hopefully you’re not going in blind and just asking for feedback, so I’d encourage you to think about your goals (are you trying to acquire more customers, drive engagement and retention, etc) and use that to determine:

1. Who you should talk to.

2. What you should talk to them about.

Related to 1 — It’s really easy to find any customer to talk to, but gathering the wrong feedback can lead you in the wrong direction. When you think about the ideal customer to talk to, what attributes about them, experiences they have with your product (features they’ve used, tenure, etc), or problems they have in the world make them a good fit?

Related to 2 — Open-ended product exploration can be valuable at times, but it’s more likely that you have a specific area that you care about, whether that’s a new flow, a potential point of friction etc.

In a best case scenario, you have product analytics that can help you understand what people are doing in the product and this kind of engagement with customers can help you understand why they do that/how it feels.

If you’re looking for more of an audit of people’s experiences, I’d check out this post from Adam Fishman and dig into “Benchmark Studies”.

Q: What are some effective strategies for a small CX team to actively engage with user research findings and integrate them into decision-making processes within a product-led company?

Behzod: I tend to think that organizational decision-making is like playing Wheel of Fortune as a team. You have a puzzle you need to solve. Different teams contribute different letters.

You need to agree on what letters are missing and when you’d feel comfortable trying to solve it. You can make a plan to get the missing letters. You know the kinds of things that will not help you move forward (i.e. when NOT to do research).

I would encourage you to think about which decisions you’re making and how research can help you “get another letter on the board” so that you’re confident in guessing (making the decision).

Another thing that I think can be helpful is thinking about how to not just consume the output but shape the projects themselves. What are you seeing with your customers that may be unique, an outlier, etc. that the product team may want to encourage or hinder? How can you be a part of the roadmapping process for the product and research team so you’re learning things that actually help you rather than just making sense of what’s there?

I think about this as the difference between trying to cook with what’s in the kitchen versus actually contributing to the shopping list.

Q: What mechanisms do you find most effective for socializing customer insights across an organization?

Behzod: How can I share research better? What can my company do to be better aware of what customers need and what we’re learning in research? I hear these questions a lot.

One of the things I see people do poorly is that they share research in whatever way is easiest for them to create, rather than what is best for their audience to consume. You want to figure out what is the best way to communicate what you learned and help the person who is consuming that make sense of it.

Very often, as you share work beyond the immediate team who has influence over the decision, what you’re doing is improving someone’s intuition, understanding, or perspective about your customers. I think about it as giving them another lens by which they can look at a situation and understand what’s going on.

This means that the closer someone is to a problem, the easier it is for you to share the “what” and them to complete the “so what” and “now what” in their head. If someone is far away from a decision/problem, you have to do more work to communicate:

Here’s what we learned, observed, etc

Here’s why that is important

Here’s what we think we should do about it

I’m going to share a few ideas that I’ve seen work when it comes to sharing research.

1. Highlight Reels

In a remote world, something that’s been a great artifact is a quick clip with a short write up to be shared with your company on a regular basis. One of the risks here is that people over-index on this kind of thing, especially if they aren’t used to hearing from customers, so do your best to set proper expectations. Things like “Here’s a clip from a customer at COMPANY who is struggling with PROBLEM. We know that X% of customers have reported something like this. While that isn’t a majority, it does represent an opportunity for us to improve and align with COMPANY PRIORITY Y.” (You get the idea.) When you’re doing things like this, tell people what to look for and how to interpret it.

2. Roadmap Updates with supporting evidence

I’ve been a part of teams where monthly or quarterly roadmap updates include not just what is ahead, but why we feel those things are high priority. This is everything from a couple of bullet points (with a link to the deck or dashboard) all the way through to a mini presentation on “why we’re shifting our focus” including a customer quote. If you and your team do regular share-outs with other teams, this can be a great way to sprinkle in things that you’ve learned and point people to them.

3. Regular Customer Feedback Share-outs

At Slack, the Customer Experience (Support) team, Research, and Customer Success would do a regular (monthly, and then quarterly) share out of the biggest themes we were seeing in our work. We tried to spend some time highlighting where these learnings could triangulate with each other so that instead of a laundry list of “problems” we were able to share three things that were top of mind for each team. We would use this as a way to develop broader awareness for the work and reinforce the fact that while many of us worked on specific aspects of the product, our customers only saw Slack as one thing. We didn’t want to ship the org chart. We also made sure to talk about when themes were recurring month over month.

4. “What We Know About X” wiki

Let’s talk about long-term knowledge, but first, I need to be clear that I have such a strong resistance to how most people set up research repositories. I think that it unfairly distributes the burden to the people looking, not the people who are best suited to help you look.

At Slack, we built a company wiki (on Google Sites at the time) to help everyone have the same understanding about our customers. We went to the leadership team and asked them, “What do you wish everyone on your team knew about Slack’s product, business, or customers by the end of their first 30 days?” From there, we built a list of topics and then wrote one-page documents that summarized them and were meant to be consumable by anyone in the company. These cited recent research, included a “last updated” date and author, and linked out to all the relevant work (often at the bottom as a “feel free to read more” kind of thing). The goal of doing this was to reduce the cognitive burden on our colleagues from having to try and know THAT a project exists AND when/where they could find it PLUS searching through dozens of slides to find the answer.

We took on that burden and created what’s functionally the most concise and up-to-date source of truth, one that also acts like a “read me” for the research. We wrote them almost like Q&A so that we could identify the confidence we had in our answers OR flag that there was no clear answer yet and it was on the roadmap for Q3... etc.

I think that more companies should do this, but I recognize that it’s a lot of effort (though less effort with AI). I think having something that highlights the most important truths/beliefs that everyone should operate with as well as links out to source material is huge.

5. Customer Listening Sessions

These are NOT LIVE USER INTERVIEWS. I just wanted to make that clear up front. The goal of a customer listening session is to invite a customer to share about a specific story/experience/perspective with a team and (potentially) give them the chance to ask questions (if they are prepared and trained). This should be more like a talk show, where both you (the interviewer) and the participant know what is going to be covered, have prepped ahead of time, and are comfortable and excited to talk about things. I’ve often done these as hour long sessions where I’ll talk with the customer for the first 30-40 minutes and then we save time at the end for Q&A if and only if they are comfortable. Similar to interviews, share homework (pre-reads) with the team so they don’t ask questions that are irrelevant.

To your point about doing this with limited resources, I’d focus on whichever of these you think will give you the highest leverage for the effort, which may be 1 or 3 to start, especially to drive appetite for things. Then you can expand into other efforts.

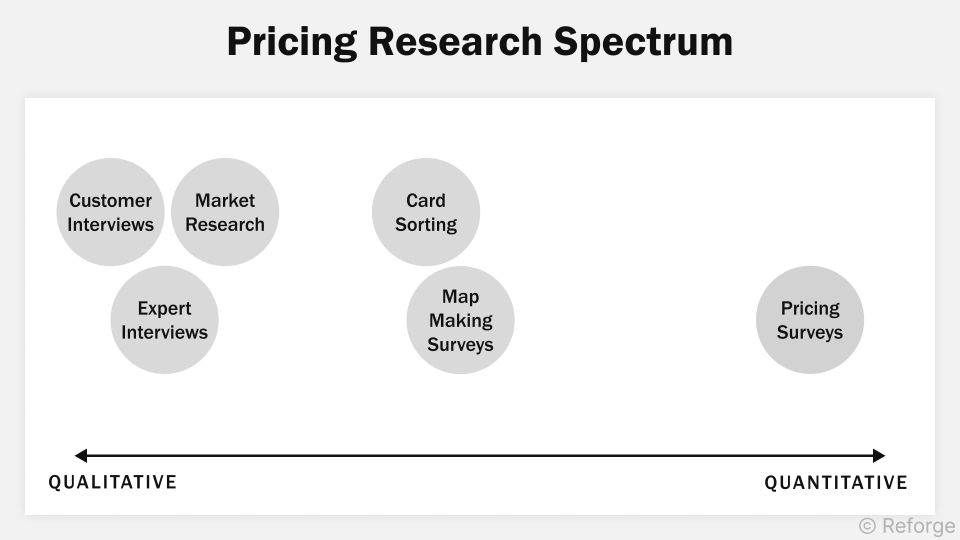

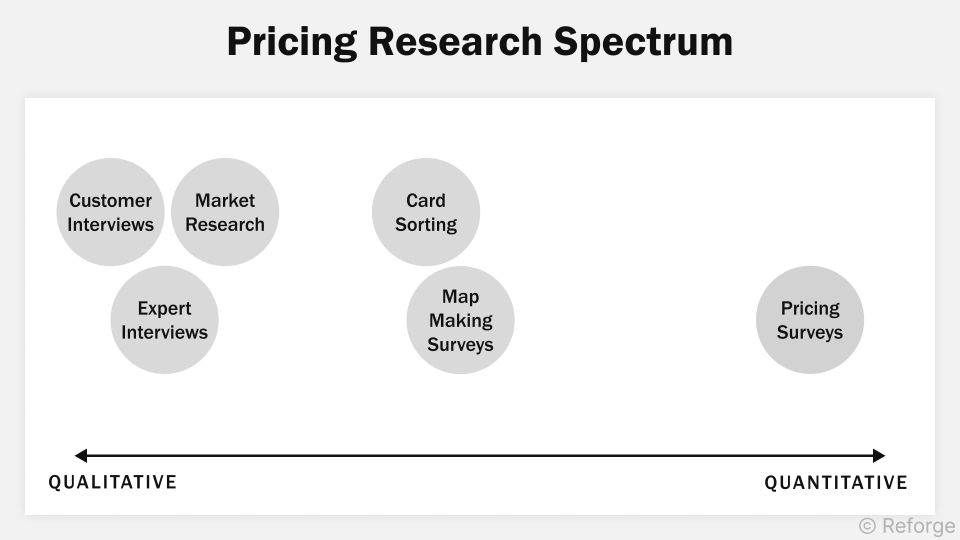

Q: What's a good way to test pricing and willingness to pay for a new product?

Behzod: I think pricing is one of the hardest things to evaluate, especially in a B2B context.

If you’re a Reforge member, I’d highly recommend checking out this bonus module in the Monetization and Pricing course on pricing research. Stephanie Kwok and I wrote this as an additional unit for the program and it’s the best encapsulation of my thinking.

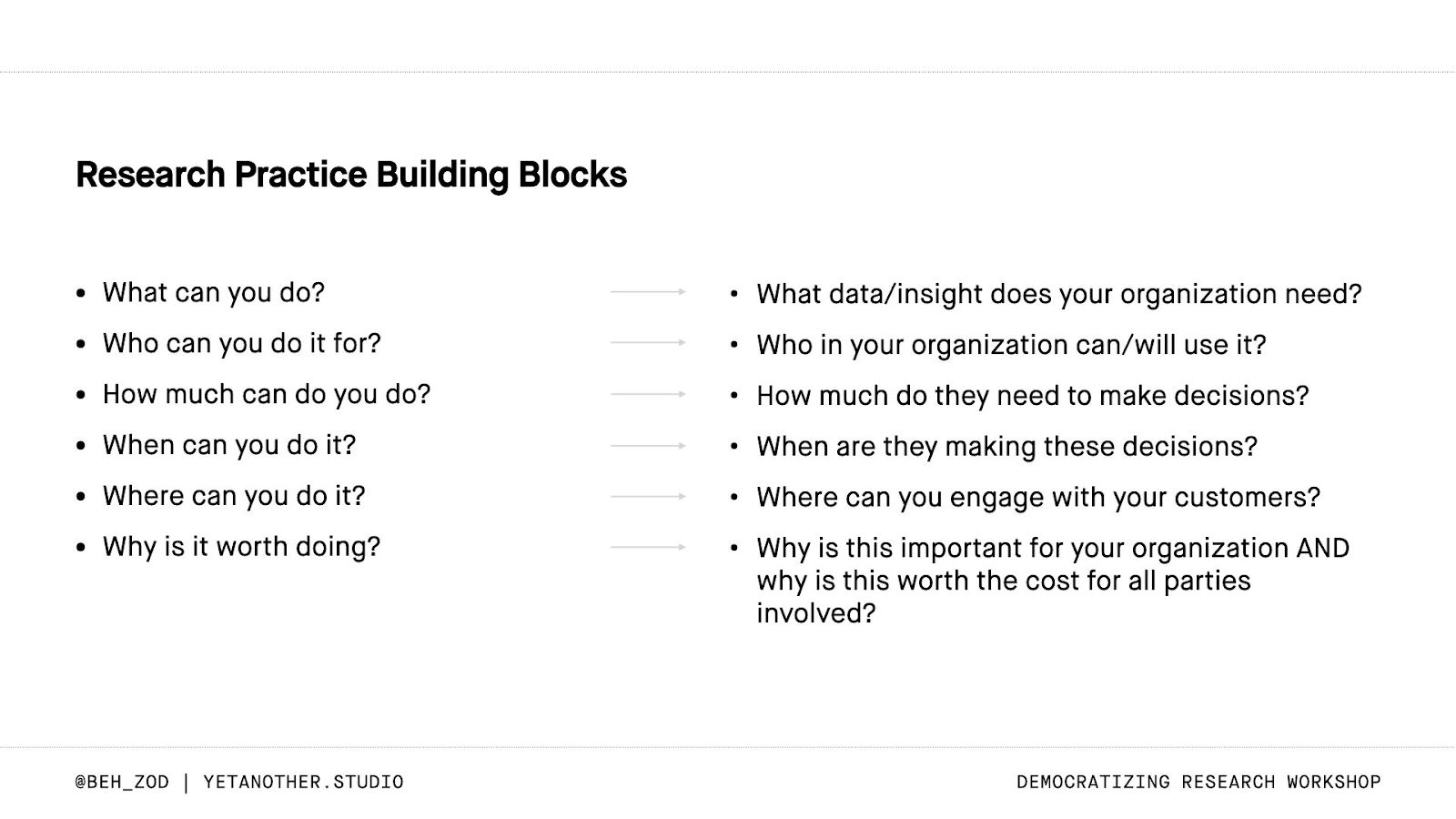

**Q: How can I explain to my organization that we're having directed conversations with customers, and not doing user research? Also, any tips for educating them on real user research and building a solid strategy? We're a startup with 200+ customers.**

Behzod: I’ve found that most organizational change can be hard because you risk “organ rejection” by the team.

When I’m trying to change people’s perceptions of research (or anything), I start by researching the organization itself.

What do people think research is?

Why?

What have they seen done before that was good? Bad?

Doing this opens the door to collaboratively re-imagine what research can look like at your company. I think the best way to educate them is by helping reorient them from where they are and what they think to what you see as possible. But you have to do that in a pragmatic way, which doesn’t block other teams from making progress. (Very tactically, this is often why I get hired by companies — either to help do things like interview training or to help them re-imagine what research should look like given the way that they function.)

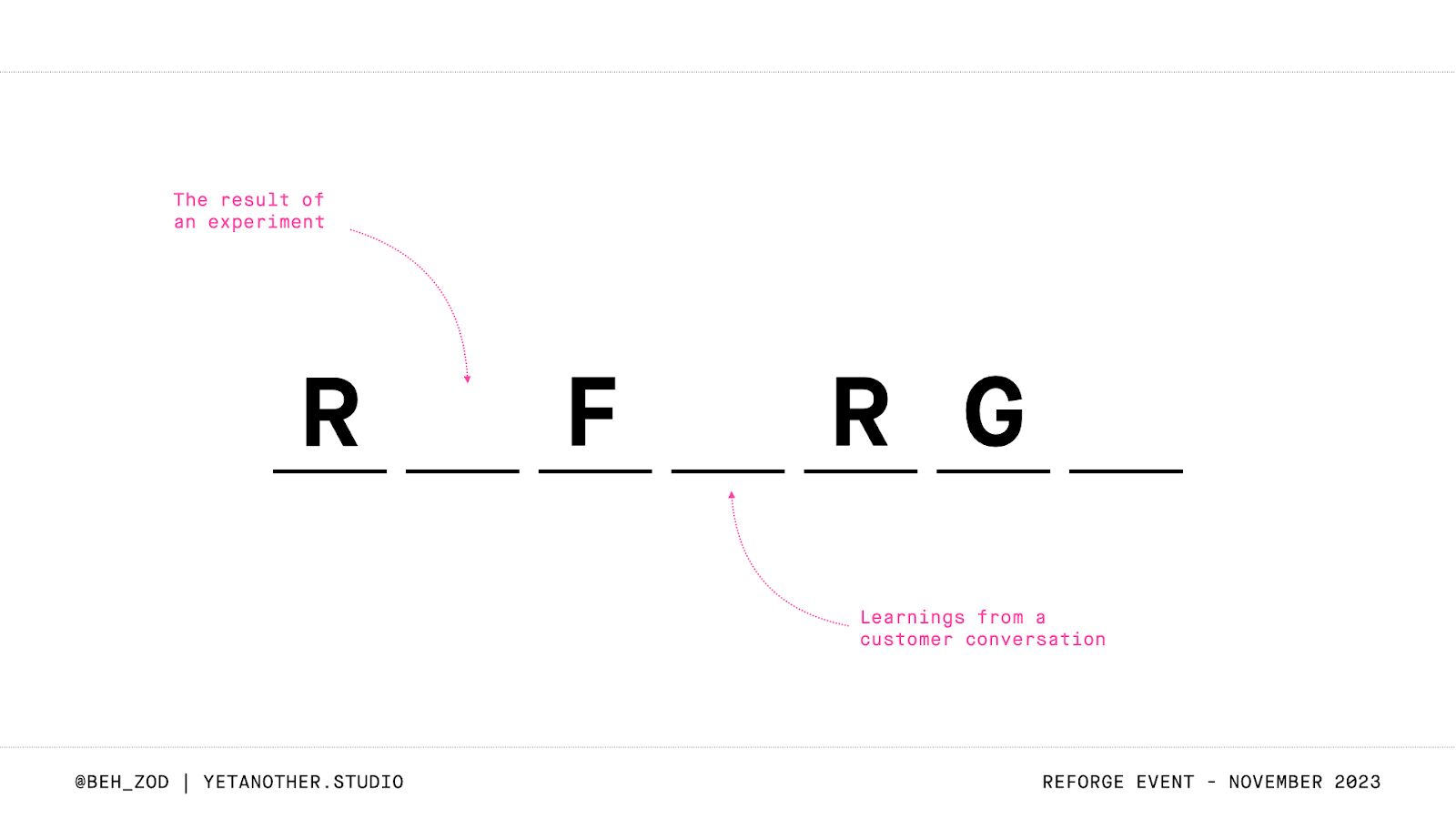

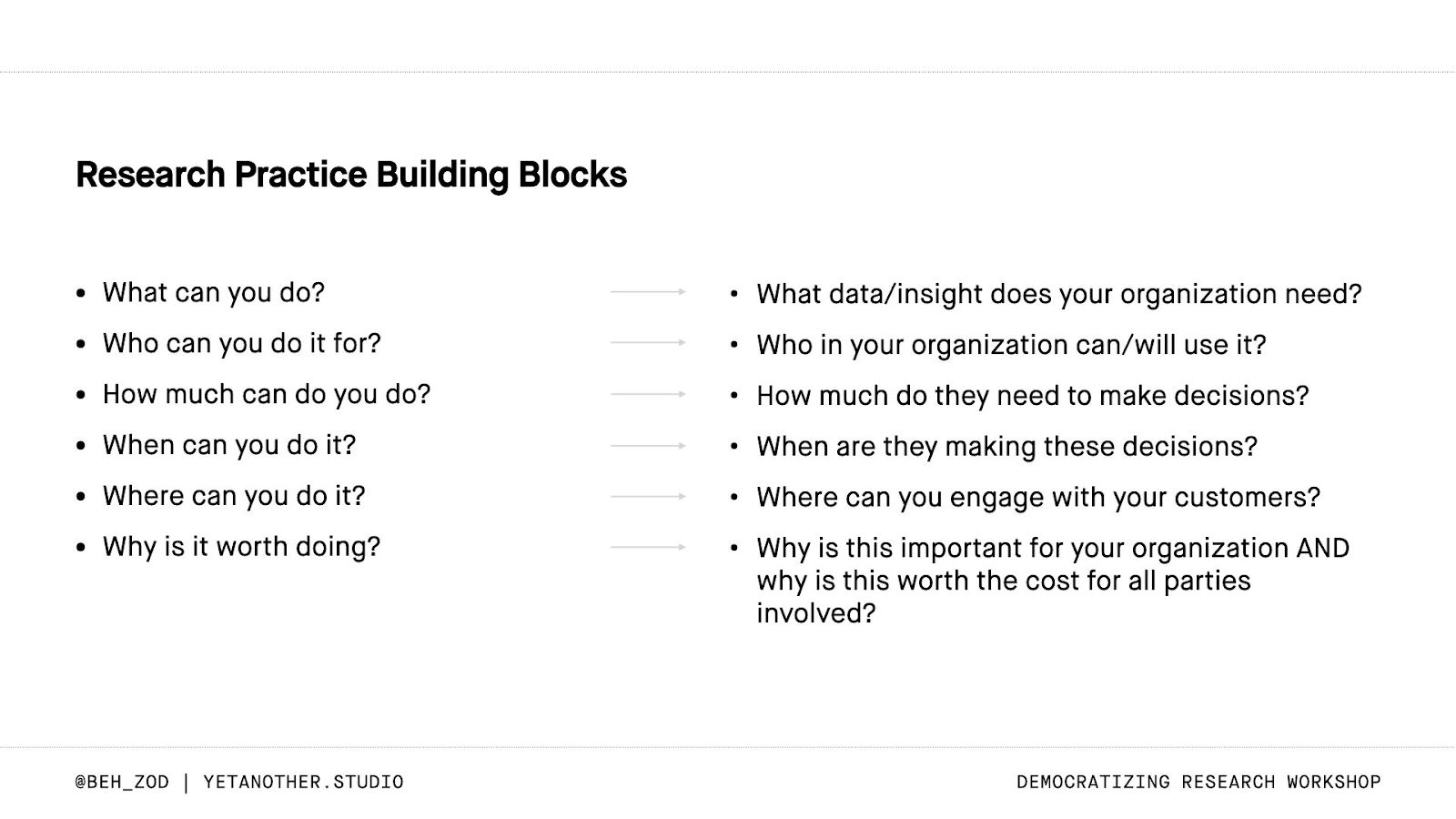

I often encourage folks who are starting to build practices to think about the building blocks in terms of what they have versus what the organization needs and use that as a roadmap to close the gaps (slide below).

I also encourage people to start small, get feedback, and build from there. Do one project/study in a way that you think is better. Reflect on what went well and why, then start to enroll more people in that practice.

**Q: What do you think about the new AI tools for qualitative feedback analysis that are now appearing?**

Behzod: Great timing, I just talked about this on the Unsolicited Feedback podcast.

I think there’s a lot of potential value in these tools, assuming you prompt them correctly and point them in the right direction. There is also a lot of opportunity to be led astray if you don’t have a strong sense of data literacy.

With any kind of analysis, I encourage people to work backwards from the decision they are making, think about what other forms of evidence would increase their confidence in that decision, and then go gather that evidence.

To me, decision making is a lot like Wheel of Fortune:

You have a puzzle you need to solve.

Different teams contribute different letters.

You need to agree on what letters are missing and when you’d feel comfortable trying to solve.

You can make a plan to get the missing letters.

You know the kinds of things that will not help you move forward (i.e. when NOT to do research).

LLMs feel like they will be a meaningful improvement over me writing and running R or Python scripts, but they still need to be used well. I’m also excited about tools like Outset.ai, which feel like a new-ish approach thanks to what is now possible.

That said, I still think that the most important thing is understanding customer behavior and preferences. If you can’t explain what a human is doing such that a metric moves, I’d start there.

Q: How can product marketing partners collaborate productively with user research colleagues?

Behzod: At Facebook on the business side of things, strategy was largely PMM driven, so I learned a lot about how to work with PMM there.

We had this “smiley face chart” that showed who was quarterbacking different phases of product development, and PMM was often responsible for “inbound” which was:

What should we do?

Why should we do it?

What customers benefit?

How does this impact our business?

These questions were often focused 12-18 months out, and they would complete these massive documents that went up to leadership (even Sheryl Sandberg at times) for sign-off, before being shared with PMs who focused on “how to solve the problem” that was defined. Research contributed a TON to these efforts, helping PMM understand customer behaviors, attitudes, etc.

At Slack and other companies, I’ve seen research-PMM partnerships often focus on the GTM side of things, like:

How should we position this? (What resonates with customer segment X?)

How is our brand doing compared to competitors?

What customer stories/successes should we highlight to help people better understand how to use Slack?

This is definitely more on the outbound side of things, but equally valuable at times. In these situations, I recommend a similar approach of:

working backwards from the outcomes you care about (Drive brand awareness)

identifying the decisions you want to make (which positioning resonates better)

aligning on what evidence will make you feel confident in that decision (hearing from decision-makers that XYZ)

designing a research approach to gather that evidence

Q: Do you believe in self-serve or rapid research programs in the absence of formal research support / resources? If so, what is the key to a successful rapid research program?

Behzod: I generally believe in self-serve research programs, assuming they are set up correctly (I’ve written and spoken about democratization for a long time since I believe it’s one of the only ways we can scale practices).

In the absence of formal research support / resources, this is where it gets tricky. If you have no research support on your team, I would be a bit wary, but I also recognize that not every company is so lucky. If this is on a team level, where you have a researcher who helped set things up, then I’m less concerned.

I say this because there is a lot that can go wrong and there are very real harms to your business from a legal and privacy perspective if you aren’t careful about how you recruit, compensate, and handle data.

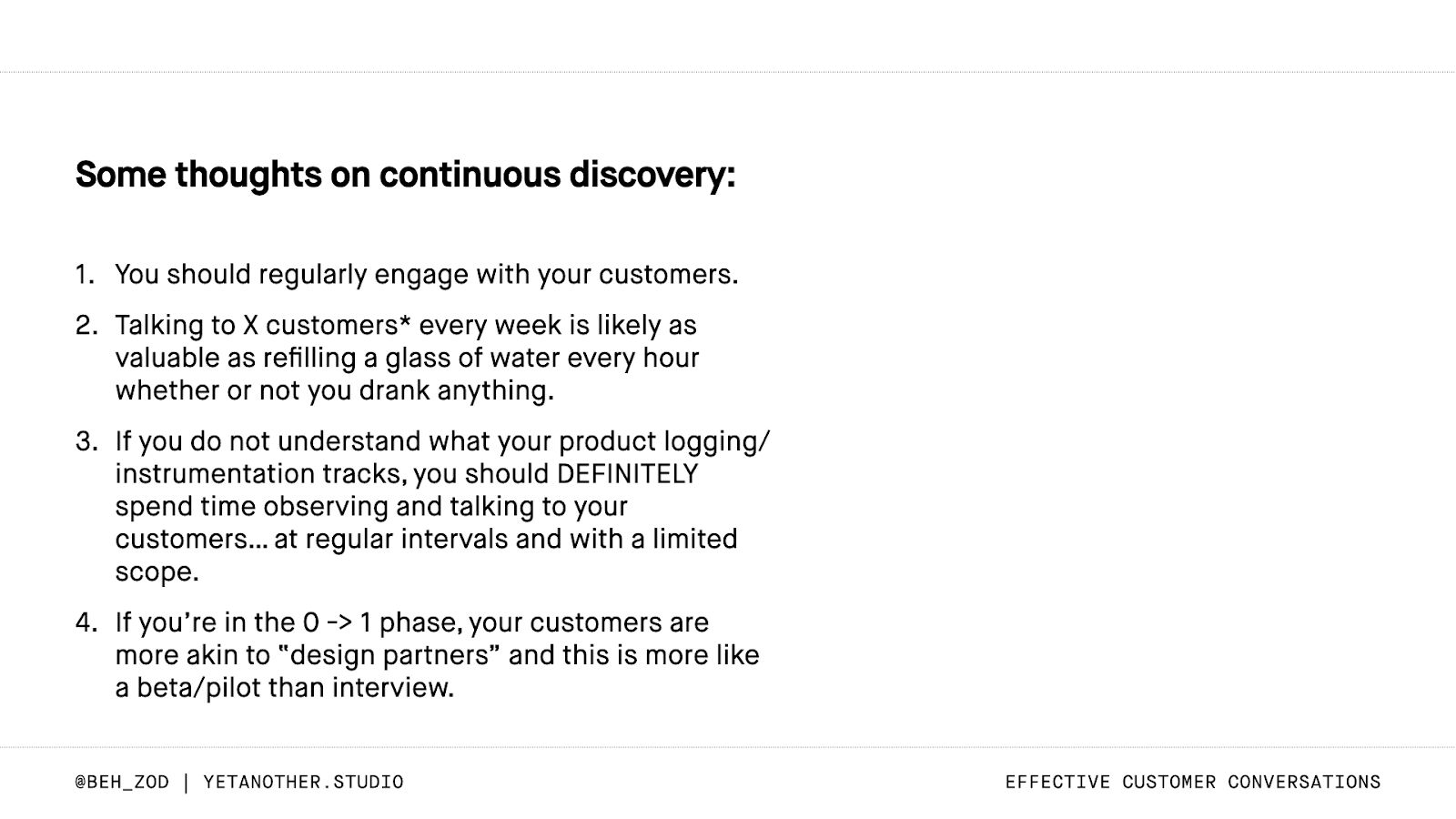

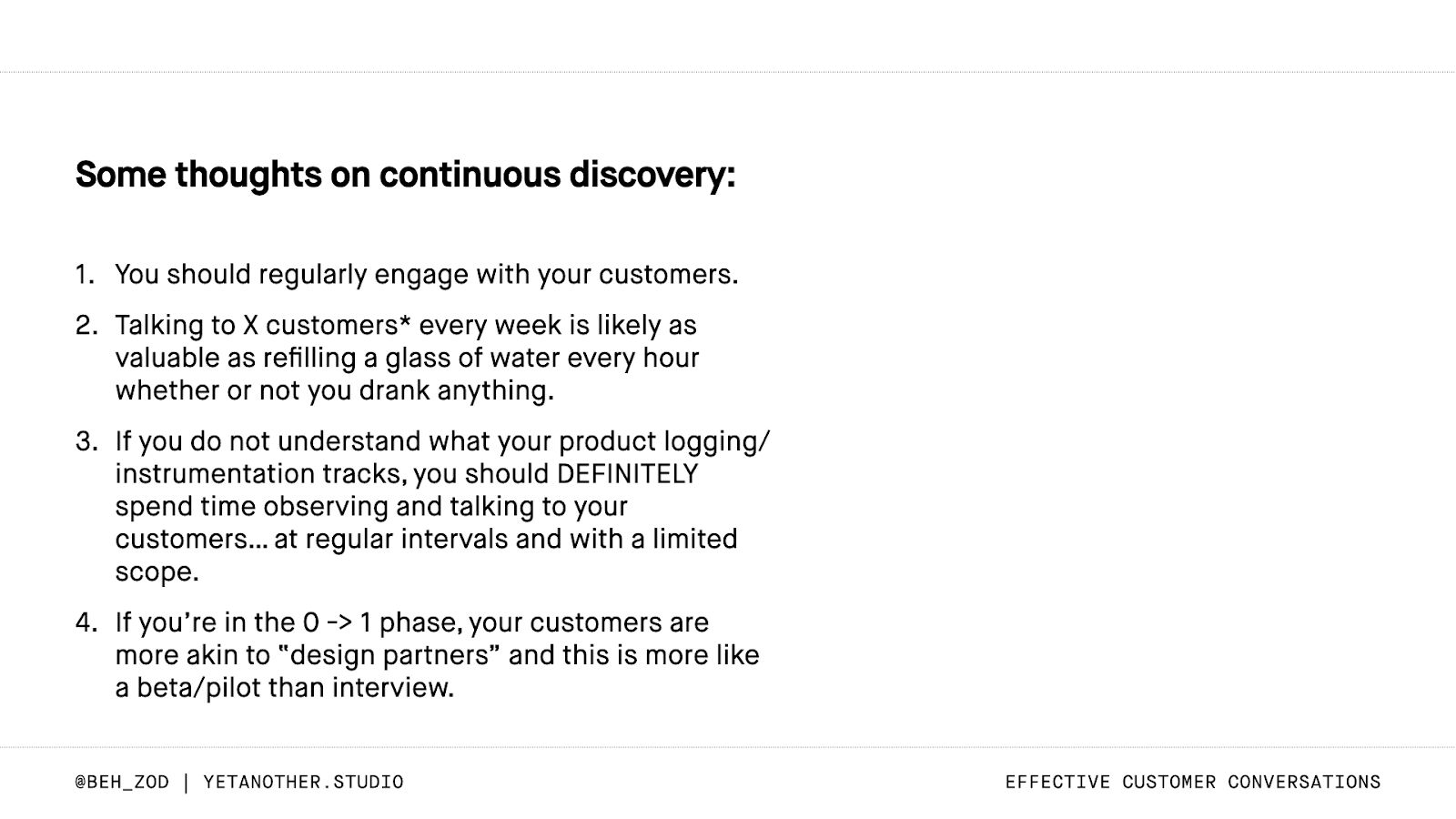

I’m generally against continuous discovery approaches, as you can see in the slide below.

I think my big push would be “why are you setting up this kind of program?” and “who will be conducting the research?”

I believe that everyone who builds products can and should be able to talk to customers, but I think the orientation towards quick-hit research is less valuable.

Ready to dive deeper?

Behzod is teaching Effective Customer Conversations live in July. It’s a 2-week course with weekly live sessions to dive deeper into the content, explore case studies drawing from his experience at Slack, Meta, and more, and ask direct questions specific to your use case.

