Introduction: A Deep Dive into AI Product Strategy

The world of AI is dynamic and rapidly evolving, and understanding how to harness its potential for product strategy has never been more critical. At the cutting edge of this discussion are leaders like Ravi Mehta (Advisor, former CPO of Tinder), Claire Vo (LaunchDarkly & ChatPRD), Darius Contractor (Otter.ai), and Jeffrey Wang (Amplitude), each offering unique insights into the strategies required to thrive in this high-stakes environment.

Today, we take a deep dive into user strategy, technological advancements, and competitive positioning, all underpinned by an understanding of:

How AI can transform your product strategy, and

How to ensure you're thinking strategically about your AI implementation.

Why Is Strategy So Critical in GenAI?

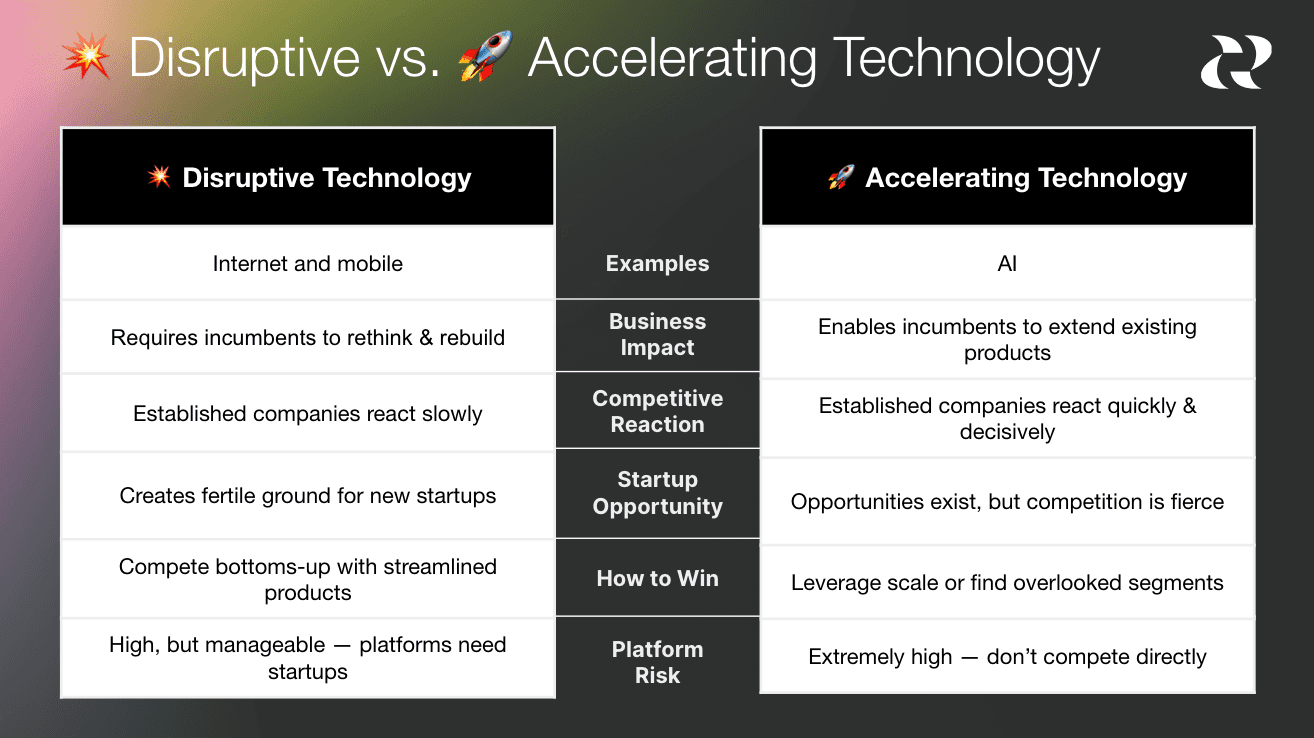

AI is an accelerating technology, not a disruptive technology!

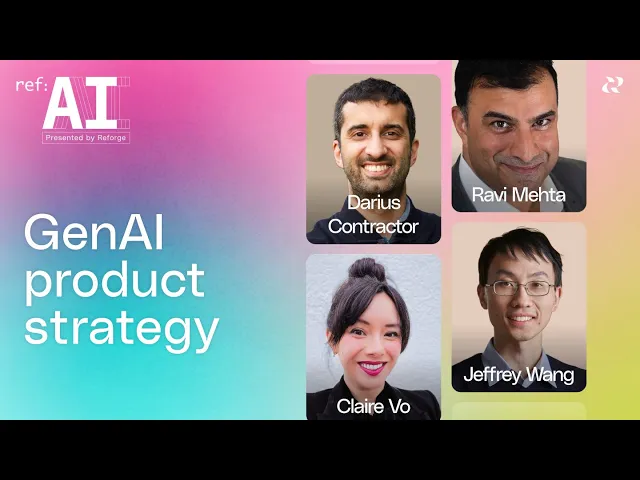

In contrast to previous technology shifts like the internet or mobile that created expansive opportunities for startups, AI has presented a different scenario. AI is an accelerating technology that can enhance existing products of incumbents as much as it can benefit startups. This is unlike disruptive technologies, such as the internet and mobile, which required large companies to rethink and rebuild their products.

A case in point is Adobe, which was slow to embrace cloud technology, creating opportunities for new players like Canva and Figma. However, with AI, Adobe quickly integrated the technology into their existing Creative Suite and launched new AI-centric products. This quick adoption has led to a fiercely competitive environment, benefiting Adobe in terms of adoption and stock price.

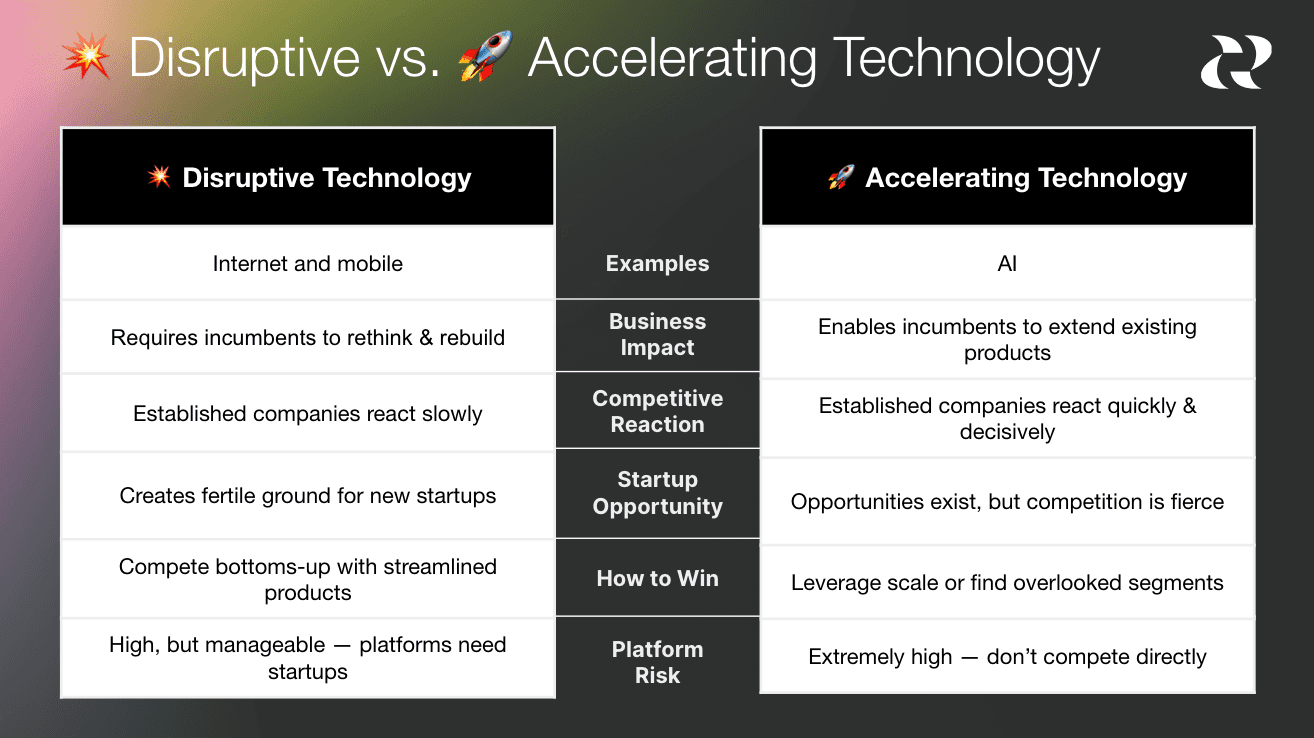

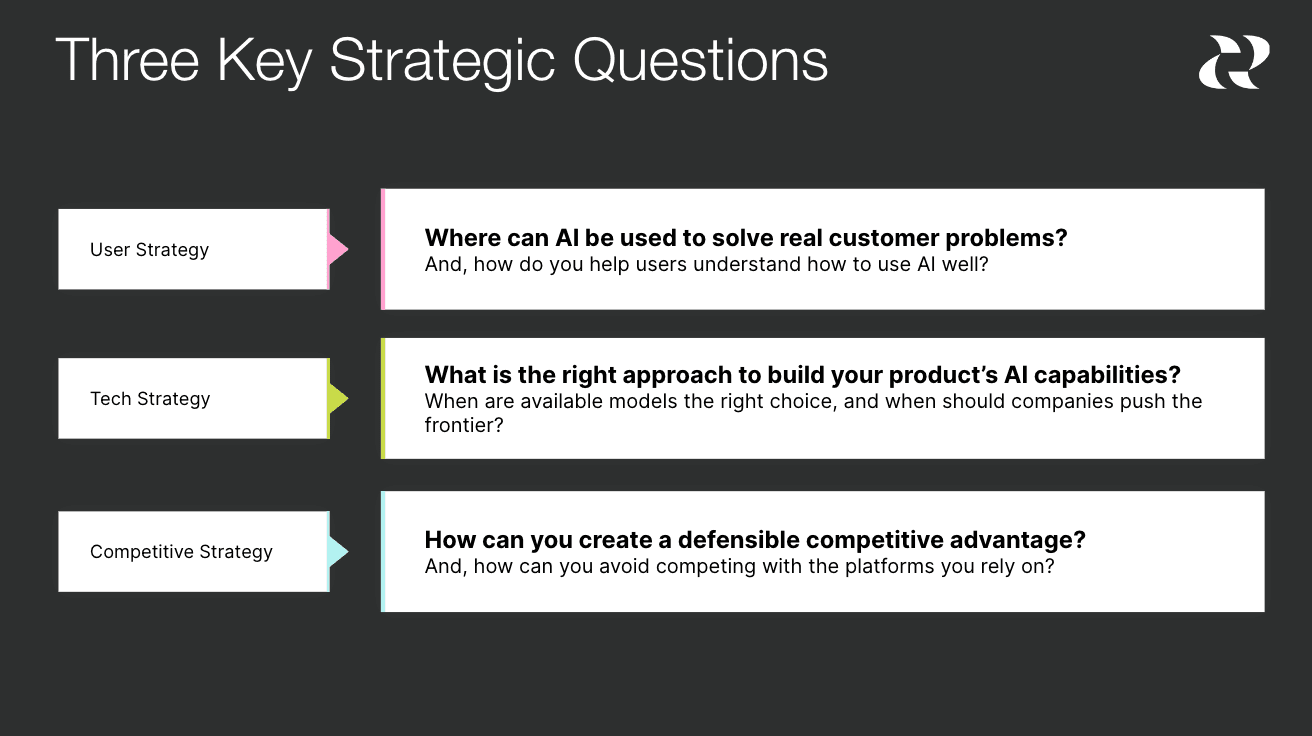

Given this competitive landscape, it's critical to think strategically about the products you're building and how to compete effectively. This involves considering three strategic areas:

User Strategy: Explore how AI can be used to solve real customer problems.

Technology Strategy: Determine the right approach to building your product's AI capabilities. This involves deciding when to use commonly available models and when to push the frontier of technology.

Competitive Strategy: Understand how to create a defensible position and avoid disruption from competitors or platforms you might be relying on.

User Strategy: Solving Real Problems First

Focus on User Insights

Ravi Mehta and his panel of experts underscored that solving real user problems should always precede the adoption of shiny new technology.

With the rapid advancement of AI, we're seeing a shift from AI as a novelty to AI as a powerful tool for solving real customer problems. The most successful AI products start with user pain points and use cases, structuring AI capabilities around those needs.

Claire Vo's Approach with ChatPRD

Claire Vo, Founder of ChatPRD, highlighted a vital lesson: start with what’s critical for your user base. She designed ChatPRD to alleviate her own workload. When she tried general AI models on the problem, they just didn’t meet her expectations. Claire has a very high bar for writing, and the existing tools and models didn’t measure up. They didn’t sound like her.

Claire recommends refining your prompts, understanding user workflows deeply, and focusing on user satisfaction to ensure that your AI solution is both practical and valued. The result: in side-by-side comparisons run by Claire and numerous third parties, ChatPRD has generated higher-quality, structured outputs for this use case.

💡 Reforge has a Prompt Engineering Template Artifact from Michael Taylor that you can use to help refine your prompts!

Otter.ai Transforms Meetings into Actionable Insights

Darius discussed how Otter is turning meeting notes into actionable insights. By using AI to transcribe and summarize meetings, Otter not only saves time but also ensures critical information is accessible and actionable, directly fulfilling a critical user need.

He described several problems Otter addresses. Most individuals cannot afford a full-time human note-taker, and merely transcribing the meeting is insufficient. What you really need is someone who can remember when a topic was discussed and what was decided. Taking it a step further, you want someone who is in all of the meetings and can summarize overarching themes. Both are challenging tasks for humans, but AI can handle them effectively. Otter even cites the sources of its summaries, so if you or your leadership question a summary's accuracy, you can easily refer to the original meeting.

Amplitude Enhances Analytical Access

Jeffrey explained how Amplitude’s Ask Amplitude feature allows users to use AI to query data in natural language, breaking down complex analytic processes into more intuitive and accessible formats. This empowers users and drives deeper insights more efficiently, and helps alleviate the problem of not knowing where to start or how to approach the product.

Jeffrey also echoed one of Darius's points about the interesting input you get when you provide people with a text box. It's extremely enlightening to see what they put in there. For instance, in Amplitude's text box, where users can ask any question about their data, a common query was, "What is my API key to instrument Amplitude with?" The system wasn't designed to answer that, since it's focused on data visualization, but the query revealed user needs beyond data visualization.

This led to the next iteration of Ask Amplitude, which will soon be more diverse in its capabilities. Jeffrey also highlighted the potential of a conversational framework for connecting with various tools, educating users, and creating dynamic workflows based on chat context. In other words, there is a world where Amplitude helps products improve themselves by analyzing the data and suggesting action steps based on it.

Enhance User Education and Trust

Helping users understand how to leverage AI effectively is vital. Darius highlighted Otter's approach of integrating help documentation into its AI assistant, providing users with immediate, context-specific support. Gathering user feedback through thumbs-up and thumbs-down mechanisms also helps refine AI outputs and build trust. This, in turn, helps users become more confident in the AI's capabilities.
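As a rough illustration of the thumbs-up/thumbs-down loop, the sketch below tallies votes per AI feature and surfaces the ones users rate poorly. It is not Otter's implementation: the `FeedbackLog` class, the feature names, and the `min_votes` and `threshold` defaults are all hypothetical choices made for this example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class FeedbackTally:
    up: int = 0
    down: int = 0

    @property
    def approval_rate(self) -> float:
        total = self.up + self.down
        return self.up / total if total else 0.0


class FeedbackLog:
    """Hypothetical in-memory store of thumbs-up / thumbs-down votes."""

    def __init__(self) -> None:
        self._tallies = defaultdict(FeedbackTally)

    def record(self, feature: str, thumbs_up: bool) -> None:
        tally = self._tallies[feature]
        if thumbs_up:
            tally.up += 1
        else:
            tally.down += 1

    def needs_attention(self, min_votes: int = 5, threshold: float = 0.6) -> list:
        # Only flag features with enough votes to be meaningful.
        return sorted(
            feature
            for feature, tally in self._tallies.items()
            if tally.up + tally.down >= min_votes
            and tally.approval_rate < threshold
        )


log = FeedbackLog()
for vote in (True, True, True, True, False):
    log.record("summary", vote)
for vote in (True, False, False, False, False):
    log.record("action_items", vote)
print(log.needs_attention())
```

Requiring a minimum number of votes before flagging a feature keeps a single unhappy user from triggering a prompt rewrite.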

💡 Darius released an Artifact on how to educate users about your AI offerings.

Tech Strategy: Balancing Speed with Defensibility

Embrace Readily Available Models

Focus on speed to market rather than reinventing the wheel. Claire and Jeffrey stressed using available models to quickly address market needs, which often proves more effective than developing new ones from scratch. Darius chose a hybrid approach, acknowledging that his business needed some custom builds to truly achieve its goals.

Claire’s Approach: One and Done

For Claire, the key differentiating factor is not the underlying model, but whether ChatPRD creates a differentiated and valuable user experience while solving an important problem. She focuses most of her attention on making sure she is solving the right problem and understands the needs and workflow of product managers. She spends less time debating between different models for minor, immeasurable improvements in subjective quality.

The core questions Claire asks are:

Is it easy to implement?

Does it meet the needs of my user experience?

Is it of sufficient quality that customers use it and don't complain?

Is it fast?

For Claire, OpenAI ticked all of the boxes, so she uses its models and is squarely focused on making them work for her.

The Hybrid Approach of Otter.ai

Otter.ai blends custom internal models with large language models (LLMs) to optimize cost and performance. This method allows them to stay technologically ahead while ensuring they meet practical user needs. Darius explains that specialized models, like speaker identification, are vital for Otter’s operations, whereas general LLMs serve more generalized tasks.

This approach significantly enhances Otter's defensibility, but it's the result of several years of hard work. If your goal is a quick market entry, this may not be a feasible option in the short term.

Fine-Tuning vs. Out-of-the-Box Solutions

Jeffrey recounted Amplitude’s experience with fine-tuning OpenAI’s models. Initially, custom fine-tuning seemed promising, but it fell short in reliability and latency when scaled. Leveraging OpenAI’s general models proved more effective in maintaining balance between performance and speed.

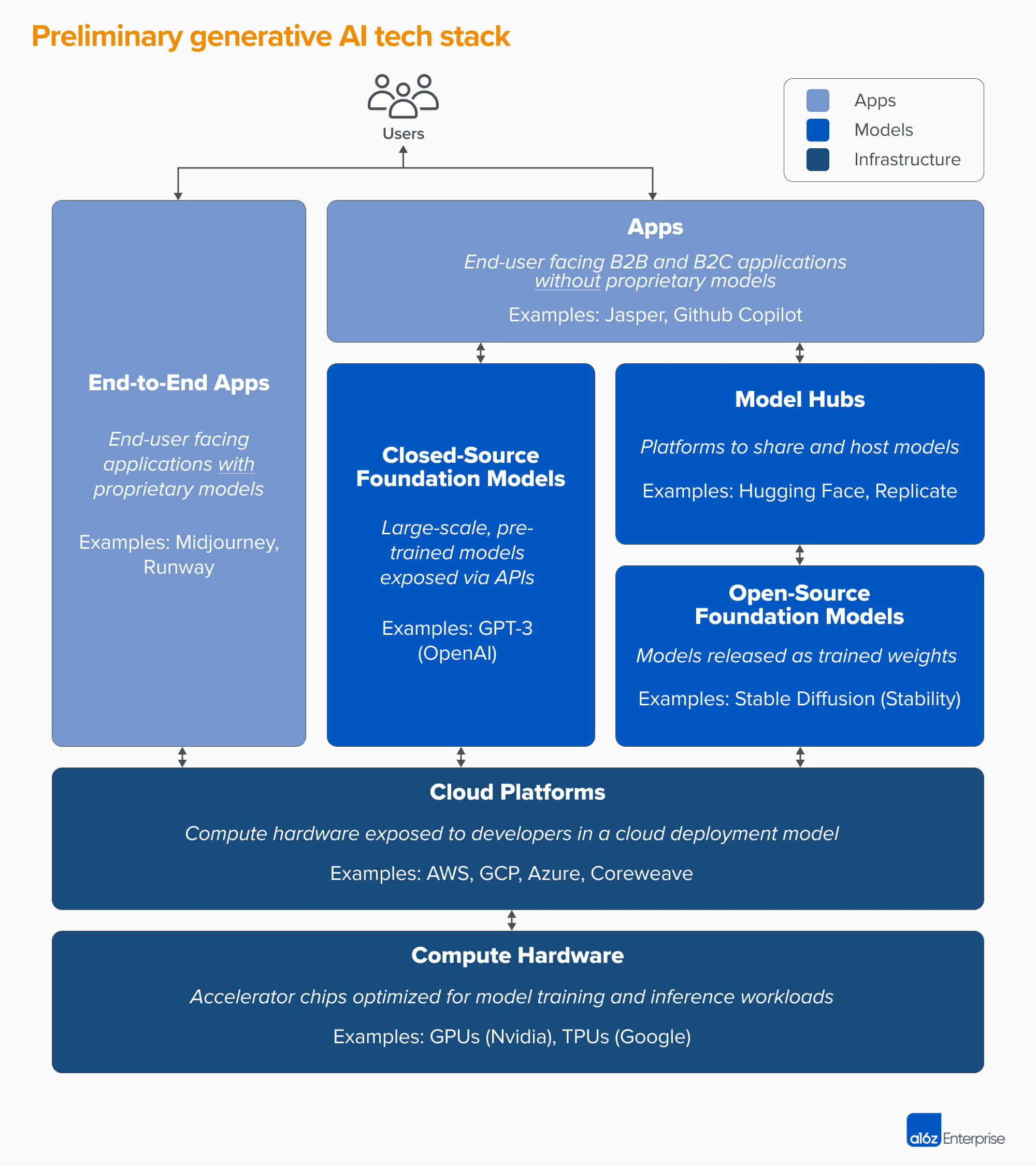

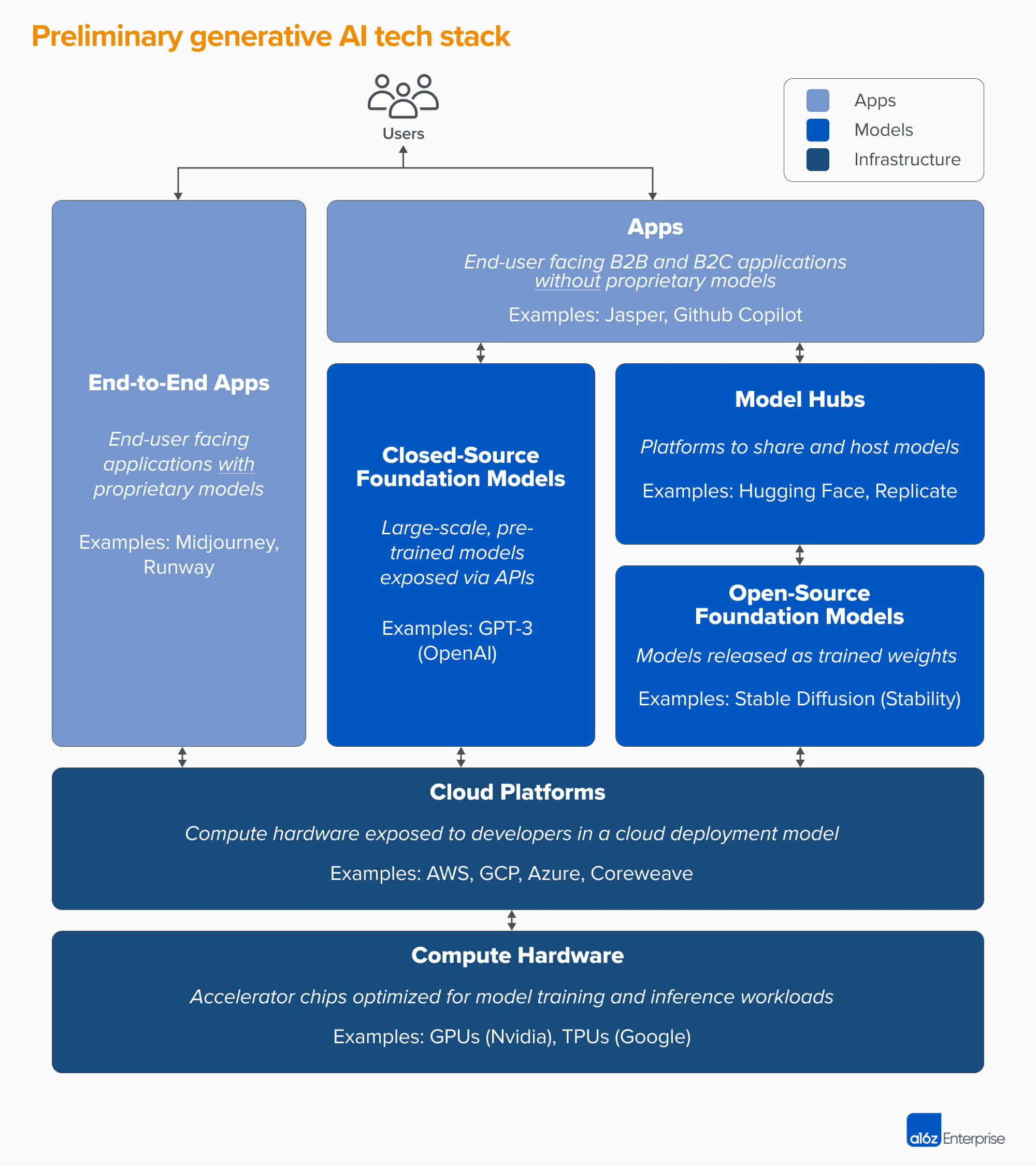

https://a16z.com/who-owns-the-generative-ai-platform/

Competitive Strategy: Defending Your Market Position

https://www.sequoiacap.com/article/generative-ai-act-two/

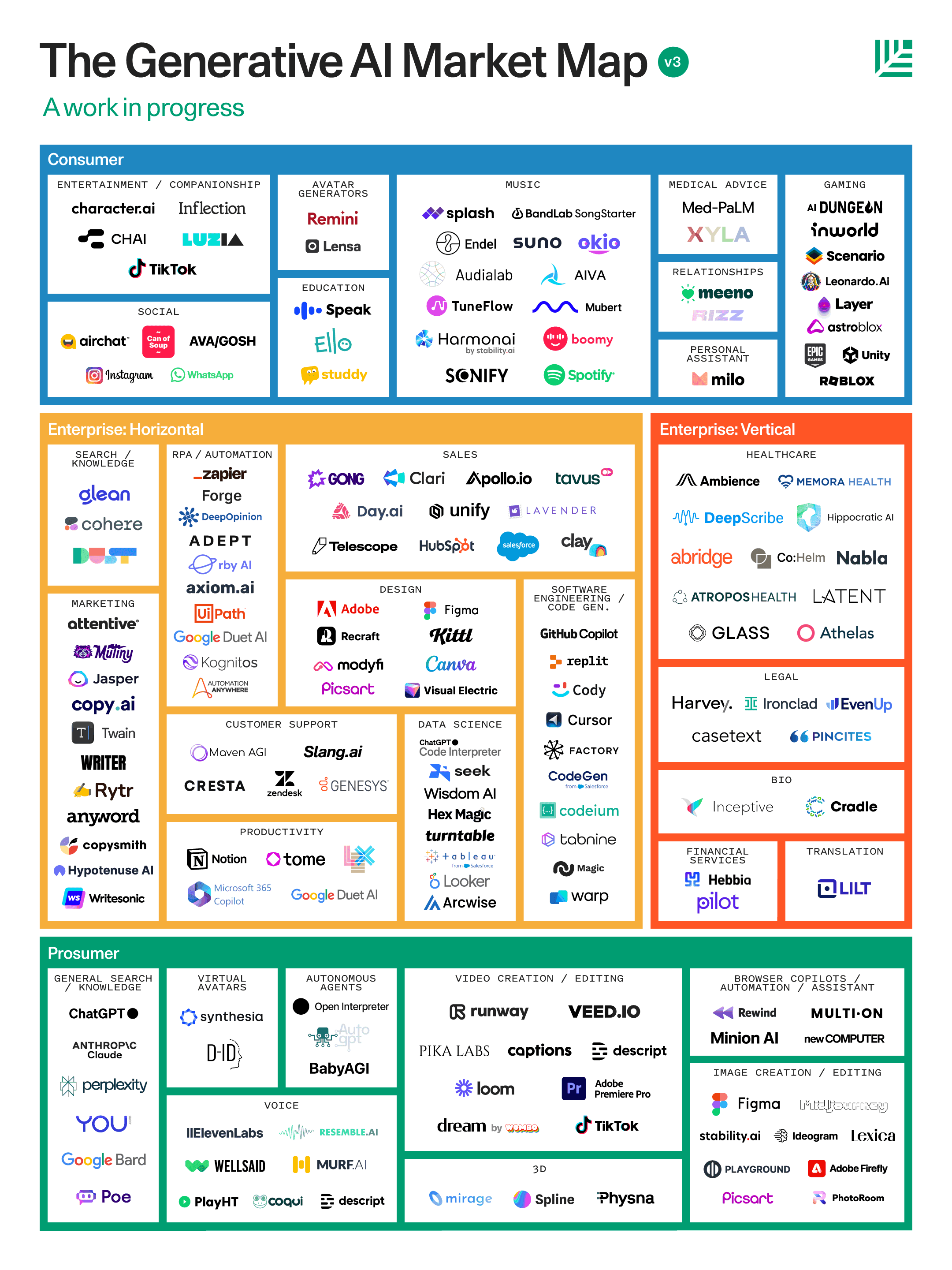

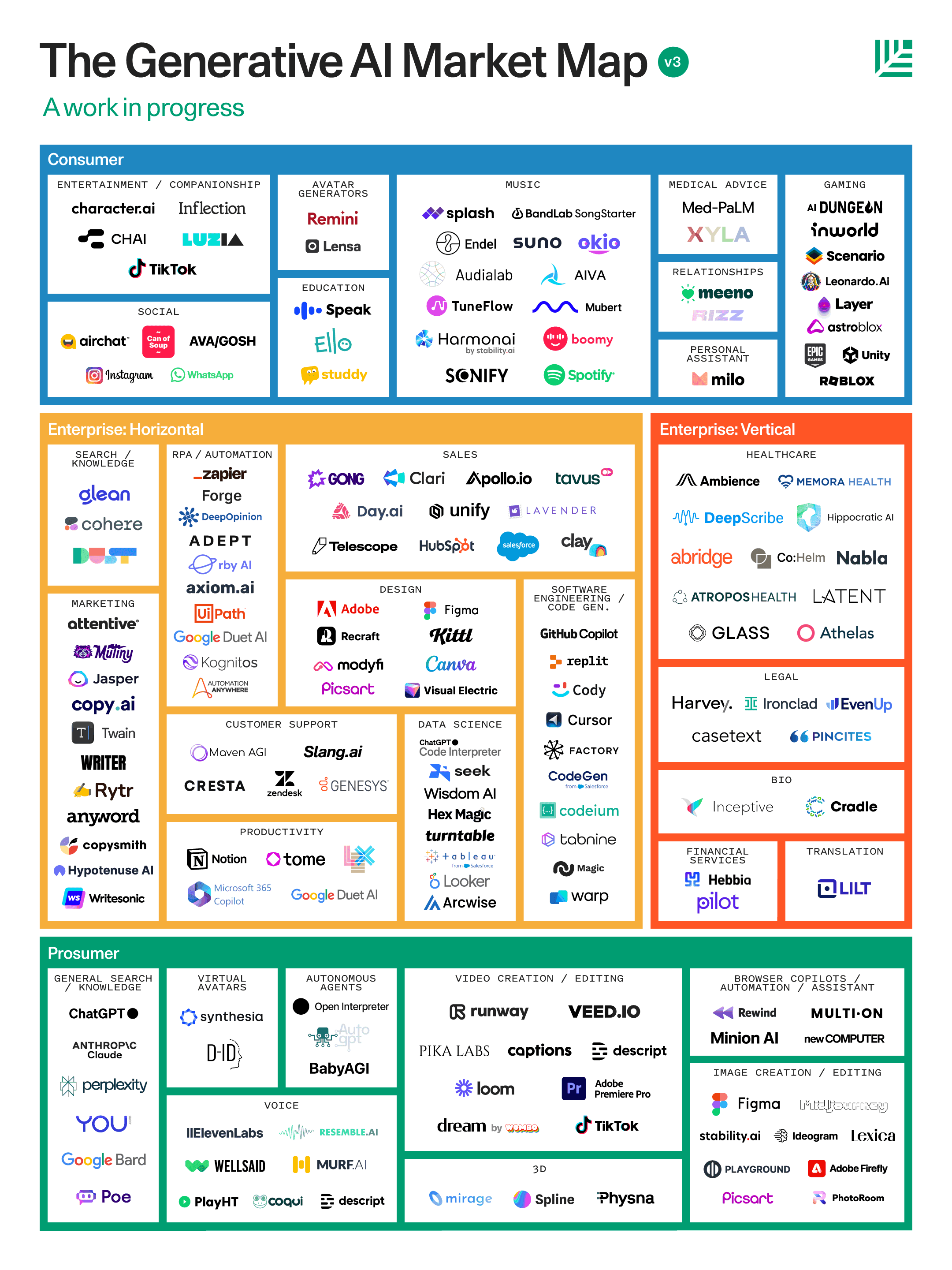

It’s easy to look at the above graphic and think there isn’t room in the market. Our panel suggests ignoring the noise and focusing on the following:

Focus on User Value for a Competitive Edge

Claire Vo emphasized that lasting competitive advantage is built not by technology alone but by crafting a delightful and high-value user experience. Ensuring user satisfaction and utility should be at the forefront to stay ahead of rivals.

Don’t Get Distracted By “Platform Risks”

An important discussion point was the potential risks of becoming overly reliant on platforms from major AI companies like OpenAI or Google. However, Claire and other panelists believe that building an adaptable, user-focused strategy provides more security than worrying about platform dependencies. Ultimately, what’s under the hood won’t matter as much.

Leverage Proprietary Data

Proprietary data is a key differentiator and a bulwark against competitors. Claire, Jeffrey, and Darius highlighted that unique data sets enhance competitive insulation, enabling tailored AI models that offer unparalleled functionality and user experiences.

Conclusion: Iterative Learning and User-Centric Development

Embrace Constant Refinement

The experts wrapped up with insights on the iterative nature of developing an AI strategy. Whether through feedback loops highlighted by Darius or iterating on prompts as discussed by Claire, the path to a successful AI product strategy lies in continuous learning and refinement.

Key Takeaway

AI serves as an accelerative force best leveraged with strategic foresight, a focus on real-world user problems, and relentless iteration. The landscape is competitive, but with the right approach, your AI strategy can carve out a definitive space in any market.

Focus on customer pain points first, not the novel technology. And help users understand how to get the most from AI features.

A good tech strategy balances speed and defensibility. The most valuable AI features don’t need to depend on frontier tech.

Leverage proprietary data: Data is often the differentiator rather than the model.

Related Material:

Artifacts: Free, real-world products from leading experts

Bridging the gap from Gen AI POC to production

Prompt Engineering Template

The Six Core AI PM Competencies

PRD Template for AI-driven Features

AI/ML roadmap at Neurons Lab

Product spec for AI-powered malware scan MVP at BitNinja

Using Reforge’s AI to brainstorm around a new use case

GenAI Product Strategy and Roadmap at Spiffy.ai

Guides: Members-only, step by step instruction

Evaluate the value of Gen AI for your product

Evaluate techniques for incorporating LLMs into your product

Define Successful Conversational AI Products

Understand conversational AI technology

Design a Conversational UX Experience

Courses: Live, in-depth upskilling face-to-face with Reforge experts

Generative AI Products: How to Get from Idea to MVP - Polly Allen and Rupa Chaturvedi

GenAI Product Strategy - Aniket Deosthali

Blog Post + Demo Video - How we built the Reforge Extension!

Podcast: Reforge’s Unsolicited Feedback is available on the platform of your choice

Hear Box CTO, Ben Kus, evaluate AI models and discuss his approach to building enterprise AI tools.

Listen to Sachin Rekhi react to the shrinking S-Curve’s impact on Product and Marketing Strategy and learn how to quickly find product-market fit in an AI world.

Discover how Claire Vo built ChatPRD.

Select Q&A

Question: When should you go iterative vs transformative with AI? AI development is moving extremely quickly, and I believe it's one of the reasons many companies are reluctant or are adopting a wait-and-see approach. Some companies (Bloomberg, McDonald's, Amazon, GM) have actually jumped early and quickly into transformation with AI and are now reconsidering.

Answer: This is true for any product or technology. Think transformative, build iteratively. Sometimes, the transformative idea won't be ready for prime time yet, but your iterative building will still yield value.

Additionally, the distinction between transformative and iterative approaches in AI isn't always clear-cut. AI is inherently transformative, but it requires constant iteration due to the rapidly evolving market. It seems the question is more about positioning - for example, McDonald's didn't effectively communicate that their AI implementation was in early beta, leading to disappointment when it didn't function smoothly from the start. The key is to manage expectations with your customers so they know what to anticipate.

Question: When executives and Product leaders like yourself look at an AI Product Strategy, what would make you say "Nuh-uh, this is a bad Strategy?"

Answer: It doesn’t clearly articulate: (a) the customer problem being solved (of course), and (b) how AI makes a new solution possible (first principles reasoning or an example/POC, ideally both).

Question: As you integrate AI into your product strategies, how do you balance the excitement of innovation with the need to protect user privacy and data security?

Answer: As a B2B product, we think of AI as a better interface for data you already have access to. So as we build Meeting GenAI features like a chat interface for meetings, we make sure that the data we use to create the chat responses is pulled only from the set of meetings that the person chatting has access to in their Otter account already. This matches how search would work for any SaaS product, it’s just a more powerful interface.
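The access rule described in this answer can be sketched as a permission filter that runs before any meeting text reaches the model. This is a minimal illustration, not Otter's code: the `Meeting` type, the `allowed_user_ids` field, and the keyword matching used in place of real retrieval are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Meeting:
    meeting_id: str
    title: str
    transcript: str
    allowed_user_ids: frozenset[str]  # hypothetical access-control field


def accessible_meetings(user_id: str, meetings: list[Meeting]) -> list[Meeting]:
    """Return only the meetings this user is already permitted to see."""
    return [m for m in meetings if user_id in m.allowed_user_ids]


def build_chat_context(user_id: str, question: str, meetings: list[Meeting]) -> str:
    """Build prompt context from permitted meetings that mention the question's terms."""
    terms = {w.strip("?.,!").lower() for w in question.split()}
    terms = {t for t in terms if len(t) > 3}
    relevant = [
        m
        for m in accessible_meetings(user_id, meetings)  # permission check first
        if any(term in m.transcript.lower() for term in terms)
    ]
    return "\n\n".join(f"[{m.meeting_id}] {m.title}: {m.transcript}" for m in relevant)


meetings = [
    Meeting("m1", "Pricing sync", "We decided to raise pricing in Q3.", frozenset({"alice"})),
    Meeting("m2", "Enterprise review", "Pricing stays flat for enterprise.", frozenset({"bob"})),
]
context = build_chat_context("alice", "What was the pricing decision?", meetings)
print(context)
```

Filtering before retrieval, rather than after generation, means content from a meeting the user cannot open never enters the prompt in the first place.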

Question: I have a feeling that everyone in this group was already doing work that involved AI, so that’s how they started. For someone starting from a blank page, what advice would you give for understanding the tech and the architecture at the beginning of their GenAI journey?

Answer: Play around with it, follow your curiosity down the rabbit hole, and pick a single, narrowly defined problem from your day-to-day (one you care enough about) that AI might help automate. Then try to make it work well.

Question: Any advice on how to systematically compare foundational models and communicate pros/cons to stakeholders? IMO, a lot of these kinds of assessments end up being quite subjective.

Answer: Yes, we have a few resources on this.

Dan Wolchonok discusses how he uses Adaline to seamlessly switch between foundational models.

There are also a couple of good leaderboards - for open source models & for embeddings.
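One way to make such comparisons less subjective is to run every candidate model over the same fixed set of prompts with explicit pass/fail checks. The sketch below is an illustrative harness only. The `compare_models` function is hypothetical, and the two "models" are stand-in callables rather than real API clients; in practice each would wrap a call to a provider.

```python
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]   # takes a prompt, returns the model's output
Check = Callable[[str], bool]  # returns True if the output is acceptable


def compare_models(
    models: Dict[str, Model], cases: List[Tuple[str, Check]]
) -> Dict[str, float]:
    """Return the pass rate of each model over a shared set of test cases."""
    results = {}
    for name, model in models.items():
        passed = sum(1 for prompt, check in cases if check(model(prompt)))
        results[name] = passed / len(cases) if cases else 0.0
    return results


# Stand-in models and checks, purely for demonstration.
models = {"upper": str.upper, "echo": lambda prompt: prompt}
cases = [
    ("hello", lambda out: out == "HELLO"),
    ("abc", lambda out: len(out) == 3),
]
scores = compare_models(models, cases)
print(scores)
```

A table of pass rates per model on cases drawn from your own product is usually easier to present to stakeholders than impressions from ad hoc prompting.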

Question: Otter, Amplitude, and ChatPRD are all interesting use cases, but they mainly cover unregulated areas. Do you have any advice for companies that build software for regulated industries, like corporate tax, for example?

Answer: In unregulated domains, the output of large language models (LLMs) can often be sent directly to users without much oversight, especially for low-stakes or entertainment purposes.

But in regulated industries, we need to think differently. The stakes are higher, the consequences more serious. It's not just about creating cool features; it's about delivering accurate, compliant information that can withstand scrutiny from regulators and professionals alike.

So, how do we approach this? Think of it as a spectrum of oversight, ranging from minimal to comprehensive. Let's walk through the options:

The Minimal Approach: Warning Labels

At the most basic level, you can provide the LLM's output to users with a clear warning. Imagine a disclaimer that reads: "This tax advice may not be accurate and should not be used without consulting a professional." It's simple, but it puts the onus on the user to seek verification.

User-Driven Moderation

Step it up a notch by empowering users to flag content for review. You could include a call-to-action on all responses: "Flag this answer to be reviewed by a professional accountant." Or, get smarter about it: show this CTA only for responses that touch on sensitive topics or high-stakes information, like securities laws.

Sampling and Retroactive Review

For a more proactive approach, consider sampling a portion of outputs for professional review. Combine this with a threat detection system to prioritize high-stakes content for expert eyes. This allows you to catch and correct issues retroactively, improving the system over time.

Full Pre-Release Review

At the far end of the spectrum, you have a human expert reviewing every single output before it reaches the user. This approach is particularly useful when optimizing the supply side of the interaction. Picture a scenario where users interact with a professional accountant via messaging, and that accountant leverages an LLM to help generate responses.

The beauty of this approach is that it's not static. As you gather more data and feedback, you can feed it back into the model using reinforcement learning from human feedback (RLHF). This creates a virtuous cycle, continuously improving the accuracy and quality of your LLM's output.
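The four oversight levels described above could be sketched as a small routing function that decides, for each LLM output, whether it is delivered immediately, what accompanies it, and whether it is queued for an expert. Everything here is illustrative: the `Oversight` enum, the `Decision` fields, the keyword list standing in for a real high-stakes detector, and the default sample rate are assumptions made for this example.

```python
import random
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Oversight(Enum):
    WARNING_LABEL = 1       # deliver with a disclaimer
    USER_FLAG = 2           # deliver, let users request expert review
    SAMPLED_REVIEW = 3      # deliver, review a sample after the fact
    PRE_RELEASE_REVIEW = 4  # hold every output for an expert


@dataclass
class Decision:
    deliver_now: bool
    disclaimer: Optional[str] = None
    show_flag_cta: bool = False
    queue_for_review: bool = False


DISCLAIMER = ("This tax advice may not be accurate and should not be used "
              "without consulting a professional.")

# Hypothetical keyword list standing in for a real high-stakes classifier.
HIGH_STAKES_TERMS = ("securities", "penalty", "audit")


def is_high_stakes(text: str) -> bool:
    lowered = text.lower()
    return any(term in lowered for term in HIGH_STAKES_TERMS)


def route(text: str, level: Oversight, sample_rate: float = 0.1,
          rng: Optional[random.Random] = None) -> Decision:
    rng = rng or random.Random()
    if level is Oversight.WARNING_LABEL:
        return Decision(deliver_now=True, disclaimer=DISCLAIMER)
    if level is Oversight.USER_FLAG:
        # Variant from the text: show the CTA only on sensitive responses.
        return Decision(deliver_now=True, show_flag_cta=is_high_stakes(text))
    if level is Oversight.SAMPLED_REVIEW:
        # High-stakes content is always reviewed; the rest is sampled.
        sampled = is_high_stakes(text) or rng.random() < sample_rate
        return Decision(deliver_now=True, queue_for_review=sampled)
    # PRE_RELEASE_REVIEW: nothing reaches the user before an expert sees it.
    return Decision(deliver_now=False, queue_for_review=True)


print(route("How do securities laws apply here?", Oversight.USER_FLAG))
```

Treating the oversight level as a parameter makes it straightforward to start with stricter review and relax it for specific content types as reviewed outputs accumulate.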

Today, LLMs are powerful but imperfect. By thoughtfully implementing oversight mechanisms, you can tap into the power of LLMs to deliver products that set appropriate expectations with your users, meet regulatory requirements, improve steadily over time, and put your product at the forefront.

Introduction: A Deep Dive into AI Product Strategy

The world of AI is dynamic and rapidly evolving, and understanding how to harness its potential for product strategy has never been more critical. On the cutting edge of this discussion are leaders like Ravi Mehta (Advisor, former CPO of Tinder), Claire Vo (LaunchDarkly & ChatPRD), Darius Contractor (Otter.AI), and Jeffrey Wang (Amplitude); each offering unique insights into the intricacies and strategies required to thrive in this high-stakes environment.

Today, we deep dive on user strategy, technological advancements, and competitive positioning, all underpinned by a deep understanding of:

How AI can transform your product strategy and,

How to ensure you're thinking strategically about your AI implementation.

Why Strategy Is So Critical in GenAI?

AI is an accelerating technology, not a disruptive technology!

In contrast to previous technology shifts like the internet or mobile that created expansive opportunities for startups, AI has presented a different scenario. AI is an accelerating technology that can enhance existing products of incumbents as much as it can benefit startups. This is unlike disruptive technologies, such as the internet and mobile, which required large companies to rethink and rebuild their products.

A case in point is Adobe, which was slow to embrace cloud technology, creating opportunities for new players like Canva and Figma. However, with AI, Adobe quickly integrated the technology into their existing Creative Suite and launched new AI-centric products. This quick adoption has led to a fiercely competitive environment, benefiting Adobe in terms of adoption and stock price.

Given this competitive landscape, it's critical to think strategically about the products you're building and how to compete effectively. This involves considering three strategic areas:

User Strategy: Explore how AI can be used to solve real customer problems.

Technology Strategy: Determine the right approach to building your product's AI capabilities. This involves deciding when to use commonly available models and when to push the frontier of technology.

Competitive Strategy: Understand how to create a defensible position and avoid disruption from competitors or platforms you might be relying on.

User Strategy: Solving Real Problems First

Focus on User Insights

Ravi Mehta and his panel of experts underscored that solving real user problems should always precede the adoption of shiny new technology.

With the rapid advancement of AI, we're seeing a shift from AI as a novelty to AI as a powerful tool for solving real customer problems. The most successful AI products start with user pain points and use cases, structuring AI capabilities around those needs.

Claire Vo's Approach with ChatPRD

Claire Vo, Founder of ChatPRD, highlighted a vital lesson: start with what’s critical for your user base. She designed ChatPRD to alleviate her own workload. And, when general AI models attempted to solve this problem, it just didn’t meet her expectations. Claire has a very high bar for writing and the existing tools and models just didn’t level up. They didn’t sound like her.

Claire recommends refining your prompts, understanding user workflows deeply, and focusing on user satisfaction to ensure that your AI solution is both practical and valued. The result, she and numerous third parties have done side by side comparisons, and the reality is ChatPRD has generated higher quality, structured outputs for this use case.

💡 Reforge has a Prompt Engineering Template Artifact from Michael Taylor that you can use to help refine your prompts!

Otter.ai Transforms Meetings into Actionable Insights

Darius discussed how Otter is revolutionizing meeting notes into actionable insights. By using AI to transcribe and summarize meetings, Otter not only saves time but ensures critical information is accessible and actionable, directly fulfilling a critical user need.

He resolved several critical issues. Most individuals cannot afford a full-time human note-taker, and merely transcribing the meeting is insufficient. What you really need is someone who can remember when a topic was discussed and what was decided. Taking it a step further, you want someone who is in all of the meetings and can actually summarize overarching themes. Both are challenging tasks for humans, but AI can handle it effectively. Otter even cites the sources of the summaries. This means that if you or your leadership is questioning its accuracy, you can easily refer to the original meeting.

Amplitude Enhances Analytical Access

Jeffrey explained how Amplitude’s Ask Amplitude feature allows users to use AI to query data in natural language, breaking down complex analytic processes into more intuitive and accessible formats. This empowers users and drives deeper insights more efficiently, and helps alleviate the problem of not knowing where to start or how to approach the product.

Jeffrey also echoed one of Darius's points about the interesting input you get when you provide people with a text box. It's extremely enlightening to see what they put in there. For instance, in our text box where users can ask any question about their data, a common query was, "What is my API key to instrument Amplitude with?" The system wasn't designed to answer that as it's focused on data visualization, revealing users' needs beyond data visualization.

This led to our next iteration of Ask Amplitude, which will soon be more diverse in its capabilities. Jeffrey also highlighted the potential of a conversational framework in connecting with various tools, educating users, and creating dynamic workflows based on chat context. In other words, there is a world where Amplitude helps products improve themselves by analyzing the data, and suggesting action steps based on the data.

Enhance User Education and Trust

Helping users understand how to leverage AI effectively is vital. Darius highlighted Otter's approach to integrating help documentation into their AI assistant, providing users with immediate, context-specific support. He also gathers user feedback through thumbs-up and thumbs-down mechanisms helps refine AI outputs and build trust. This in turn, helps users become more confident in the AI's capabilities.

***💡 Darius released an Artifact on ***how to educate users about your AI offerings.

Tech Strategy: Balancing Speed with Defensibility

Embrace Readily Available Models

Focus on speed to market rather than reinventing the wheel. Claire and Jeffrey stressed using available models to quickly address market needs, which often proves more effective than developing new ones from scratch. Darius chose a hybrid approach, acknowledging that his business needed some custom builds to truly achieve their goals.

Claire’s Approach: One and Done

The key differentiating factor is not the underlying model we use, but whether we are creating a differentiated and valuable user experience while solving an important problem. I focus most of my thoughts on ensuring I'm solving the right problem. I consider whether I understand the needs and workflow of product managers. I spend less time debating between different models for minor, immeasurable improvements in subjective quality.

The core questions Claire asks are:

Is it easy to implement?

Does it meet the needs of my user experience?

Is it of sufficient quality that customers use it and don't complain?

Is it fast?

For Claire, OpenAI ticked all of the boxes, so she uses that model, and is squarely focused on making that model work for her.

The Hybrid Approach of Otter.ai

Otter.ai blends custom internal models with large language models (LLMs) to optimize cost and performance. This method allows them to stay technologically ahead while ensuring they meet practical user needs. Darius explains that specialized models, like speaker identification, are vital for Otter’s operations, whereas general LLMs serve more generalized tasks.

This approach significantly enhances Otter's defensibility, but it's the result of several years of hard work. If your goal is a quick market entry, this may not be a feasible option in the short term.

Fine-Tuning vs. Out-of-the-Box Solutions

Jeffrey recounted Amplitude’s experience with fine-tuning OpenAI’s models. Initially, custom fine-tuning seemed promising, but it fell short in reliability and latency when scaled. Leveraging OpenAI’s general models proved more effective in maintaining balance between performance and speed.

https://a16z.com/who-owns-the-generative-ai-platform/

Competitive Strategy: Defending Your Market Position

https://www.sequoiacap.com/article/generative-ai-act-two/

It’s easy to look at the above graphic and think there isn’t room in the market. Our panel suggests ignoring the noise and focusing on:

Focus on User Value for a Competitive Edge

Claire Vo emphasized that lasting competitive advantage is built not by technology alone but by crafting a delightful and high-value user experience. Ensuring user satisfaction and utility should be at the forefront to stay ahead of rivals.

Don’t Get Distracted By “Platform Risks”

An important discussion point was the potential risks of becoming overly reliant on platforms from major AI companies like OpenAI or Google. However, Claire and other panelists believe that building an adaptable, user-focused strategy provides more security than worrying about platform dependencies. Ultimately, what’s under the hood won’t matter as much.

Leverage Proprietary Data

Proprietary data is a key differentiator and a bulwark against competitors. Claire, Jeffrey, and Darius highlighted that unique data sets enhance competitive insulation, enabling tailored AI models that offer unparalleled functionality and user experiences.

Conclusion: Iterative Learning and User-Centric Development

Embrace Constant Refinement

The experts wrapped up with insights on the iterative nature of developing an AI strategy. Whether through feedback loops highlighted by Darius or iterating on prompts as discussed by Claire, the path to a successful AI product strategy lies in continuous learning and refinement.

Key Takeaway

AI serves as an accelerative force best leveraged with strategic foresight, a focus on real-world user problems, and relentless iteration. The landscape is competitive, but with the right approach, your AI strategy can carve out a definitive space in any market.

Focus on customer pain points first, not the novel technology. And help users understand how to get the most from AI features.

A good tech strategy balances speed and defensibility. The most valuable AI features don’t need to depend on frontier tech

Leverage proprietary data: Data is often the differentiator rather than the model.

Related Material:

Artifacts: Free, real-world products from leading experts

Bridging the gap from Gen AI POC to production

Prompt Engineering Template

The Six Core AI PM Competencies

PRD Template for AI-driven Features

AI/ML roadmap at Neurons Lab

Product spec for AI-powered malware scan MVP at BitNinja

Using Reforge’s AI to brainstorm around a new use case

GenAI Product Strategy and Roadmap at Spiffy.ai

Guides: Members-only, step by step instruction

Evaluate the value of Gen AI for your product

Evaluate techniques for incorporating LLMs into your product

Define Successful Conversational AI Products

Understand conversational AI technology

Design a Conversational UX Experience

Courses: Live, in-depth upskilling face-to-face with Reforge experts

Generative AI Products: How to Get from Idea to MVP - Polly Allen and Rupa Chaturvedi

GenAI Product Strategy - Aniket Deosthali

Blog Post + Demo Video - How we built the Reforge Extension!

Podcast: Reforge’s Unsolicited Feedback is available on the platform of your choice

Hear Box CTO, Ben Kus, evaluate AI models and discuss his approach to building enterprise AI tools.

Listen to Sachin Rekhi react to the shrinking S-Curve’s impact on Product and Marketing Strategy and learn how to quickly find product-market fit in an AI world.

Discover how Claire Vo built Chat PRD.

Select Q&A

Question: When should you go iterative vs transformative with AI? AI development is moving extremely quickly, and I believe it's one of the reasons many companies are reluctant or are adopting a wait-and-see approach. Some companies (Bloomberg, McDonald's, Amazon, GM) have actually jumped early and quickly into transformation with AI and are now reconsidering.

Answer: This is true for any product or technology. Think transformative, build iteratively. Sometimes, the transformative idea won't be ready for prime time yet, but your iterative building will still yield value.

Additionally, the distinction between transformative and iterative approaches in AI isn't always clear-cut. AI is inherently transformative, but it requires constant iteration due to the rapidly evolving market. It seems the question is more about positioning - for example, McDonald's didn't effectively communicate that their AI implementation was in early beta, leading to disappointment when it didn't function smoothly from the start. The key is to manage expectations with your customers so they know what to anticipate.

Question: When executives and Product leaders like yourself look at an AI Product Strategy, what would make you say "Nuh-uh, this is a bad Strategy?"

Answer: It doesn’t clearly articulate: (a) the customer problem being solved (of course), and (b) how AI makes a new solution possible (first principles reasoning or an example/POC, ideally both).

Question: As you integrate AI into your product strategies, how do you balance the excitement of innovation with the need to protect user privacy and data security?

Answer: As a B2B product, we think of AI as a better interface for data you already have access to. So as we build Meeting GenAI features like a chat interface for meetings, we make sure that the data we use to create the chat responses is pulled only from the set of meetings that the person chatting already has access to in their Otter account. This matches how search would work for any SaaS product; it's just a more powerful interface.
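To make the idea concrete, here is a minimal sketch of permission-scoped context building. It is not Otter's implementation: the `Meeting` type, the `allowed_users` field, and the keyword match that stands in for real search are all assumptions made for illustration. The point it shows is that the access filter runs before anything is handed to the model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Meeting:
    # Hypothetical record; a real product would load this from its own store.
    meeting_id: str
    transcript: str
    allowed_users: frozenset


def accessible_meetings(user_id, meetings):
    """Return only the meetings this user is already allowed to see."""
    return [m for m in meetings if user_id in m.allowed_users]


def build_chat_context(user_id, question, meetings):
    """Assemble prompt context from permitted meetings only.

    The access filter runs first, so transcripts the user cannot open
    never reach the model.
    """
    permitted = accessible_meetings(user_id, meetings)
    # Naive keyword match; an assumption standing in for search or embeddings.
    terms = [t.strip("?.,!").lower() for t in question.split()]
    terms = [t for t in terms if t]
    relevant = [
        m for m in permitted
        if any(t in m.transcript.lower() for t in terms)
    ]
    return "\n\n".join(f"[{m.meeting_id}] {m.transcript}" for m in relevant)


MEETINGS = [
    Meeting("m1", "Roadmap review: pricing launch moved to Q3.",
            frozenset({"ana", "raj"})),
    Meeting("m2", "Board prep: pricing margins are confidential.",
            frozenset({"raj"})),
]

# "ana" only gets context from m1, even though m2 also mentions pricing.
print(build_chat_context("ana", "pricing?", MEETINGS))
```

Filtering before retrieval, rather than asking the model to withhold information, keeps the permission check in ordinary application code where it can be tested like any other access rule.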

Question: I have a feeling that everyone in this group found themselves in a job that already involved AI, and that's how they started. For someone starting from a blank page, what advice would you give for understanding the tech and the architecture at the beginning of their GenAI journey?

Answer: Play around with it, follow your curiosity down the rabbit hole, and pick a single, narrowly defined problem from your day-to-day (one you care enough about) that AI might help automate. Then try to make it work well.

Question: Any advice on how to systematically compare foundational models and communicate pros/cons to stakeholders? IMO, a lot of these kinds of assessments end up being quite subjective.

Answer: Yes, we have a few resources on this.

Dan Wolchonok discusses how he uses Adaline to seamlessly switch between foundational models.

There are also a couple of good leaderboards - for open source models & for embeddings.
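One way to make such comparisons less subjective is to run every candidate model over the same fixed set of prompts and score the outputs with explicit pass/fail checks. The sketch below assumes this approach; it is not taken from the resources above. `model_a` and `model_b` are offline stubs standing in for real API calls, and the two test cases are placeholders for prompts drawn from your own product.

```python
from typing import Callable, Dict, List, Tuple

# Each case pairs a prompt with a check the model's answer must pass.
Case = Tuple[str, Callable[[str], bool]]


def score_models(models: Dict[str, Callable[[str], str]],
                 cases: List[Case]) -> Dict[str, float]:
    """Return the fraction of cases each model passes."""
    scores = {}
    for name, generate in models.items():
        passed = sum(1 for prompt, check in cases if check(generate(prompt)))
        scores[name] = passed / len(cases)
    return scores


# Stub "models" so the sketch runs offline; swap in real client calls.
def model_a(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "I am not sure."


def model_b(prompt: str) -> str:
    return "4" if "2 + 2" in prompt else "Paris"


CASES: List[Case] = [
    ("What is 2 + 2? Reply with the number only.",
     lambda out: out.strip() == "4"),
    ("What is the capital of France?",
     lambda out: "paris" in out.lower()),
]

SCORES = score_models({"model_a": model_a, "model_b": model_b}, CASES)
print(SCORES)
```

Because the checks are written down, stakeholders can argue about whether a check is the right one instead of arguing about impressions of individual outputs.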

Question: Otter, Amplitude, and ChatPRD are all interesting use cases, but they mainly cover unregulated areas. Do you have any advice for companies that build software for regulated industries, such as corporate tax?

Answer: In unregulated domains, the output of large language models (LLMs) can often be sent directly to users without much oversight, especially for low-stakes or entertainment purposes.

But in regulated industries, we need to think differently. The stakes are higher, the consequences more serious. It's not just about creating cool features; it's about delivering accurate, compliant information that can withstand scrutiny from regulators and professionals alike.

So, how do we approach this? Think of it as a spectrum of oversight, ranging from minimal to comprehensive. Let's walk through the options:

The Minimal Approach: Warning Labels

At the most basic level, you can provide the LLM's output to users with a clear warning. Imagine a disclaimer that reads: "This tax advice may not be accurate and should not be used without consulting a professional." It's simple, but it puts the onus on the user to seek verification.

User-Driven Moderation

Step it up a notch by empowering users to flag content for review. You could include a call-to-action on all responses: "Flag this answer to be reviewed by a professional accountant." Or, get smarter about it: show this CTA only for responses that touch on sensitive topics or high-stakes information, like securities laws.

Sampling and Retroactive Review

For a more proactive approach, consider sampling a portion of outputs for professional review. Combine this with a threat detection system to prioritize high-stakes content for expert eyes. This allows you to catch and correct issues retroactively, improving the system over time.

Full Pre-Release Review

At the far end of the spectrum, you have a human expert reviewing every single output before it reaches the user. This approach is particularly useful when optimizing the supply side of the interaction. Picture a scenario where users interact with a professional accountant via messaging, and that accountant leverages an LLM to help generate responses.
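The four levels above can be sketched as a single routing function. This is an illustrative sketch only: the `Oversight` enum, the `deliver` function, the keyword list that stands in for a real detection system, and the default 10% sample rate are all assumptions rather than a described implementation. The disclaimer and call-to-action strings are the examples quoted above.

```python
import random
from enum import Enum
from typing import Optional


class Oversight(Enum):
    WARNING_LABEL = "warning_label"
    USER_FLAG = "user_flag"
    SAMPLED_REVIEW = "sampled_review"
    PRE_RELEASE_REVIEW = "pre_release_review"


# Hypothetical keyword list; a real system would use a trained classifier.
SENSITIVE_TERMS = ("securities", "audit", "penalty", "fraud")

DISCLAIMER = ("This tax advice may not be accurate and should not be used "
              "without consulting a professional.")
FLAG_CTA = "Flag this answer to be reviewed by a professional accountant."


def is_sensitive(text: str) -> bool:
    lowered = text.lower()
    return any(term in lowered for term in SENSITIVE_TERMS)


def deliver(answer: str, level: Oversight, sample_rate: float = 0.1,
            rng: Optional[random.Random] = None) -> dict:
    """Decide what the user sees and whether an expert must look at it."""
    rng = rng or random.Random()
    sensitive = is_sensitive(answer)
    result = {"show_to_user": True, "text": answer, "queue_for_review": False}

    if level is Oversight.WARNING_LABEL:
        result["text"] = f"{answer}\n\n{DISCLAIMER}"
    elif level is Oversight.USER_FLAG:
        # Show the call-to-action only on high-stakes answers.
        if sensitive:
            result["text"] = f"{answer}\n\n{FLAG_CTA}"
    elif level is Oversight.SAMPLED_REVIEW:
        # Always review sensitive answers; sample the rest at random.
        result["queue_for_review"] = sensitive or rng.random() < sample_rate
    elif level is Oversight.PRE_RELEASE_REVIEW:
        # Hold the answer until an expert approves it.
        result["show_to_user"] = False
        result["queue_for_review"] = True
    return result


held = deliver("Securities rules apply here.", Oversight.PRE_RELEASE_REVIEW)
print(held["show_to_user"], held["queue_for_review"])
```

Keeping the level as an explicit parameter makes it straightforward to apply stricter oversight to some product surfaces and lighter oversight to others.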

The beauty of this approach is that it's not static. As you gather more data and feedback, you can feed it back into the model using reinforcement learning from human feedback (RLHF). This creates a virtuous cycle, continuously improving the accuracy and quality of your LLM's output.

Today, LLMs are powerful but imperfect. By thoughtfully implementing oversight mechanisms, you can tap into the power of LLMs to deliver products that set appropriate expectations with your users, meet regulatory requirements, improve steadily over time, and put your product at the forefront.