The Rise of the Operators: How Two Product Leaders Built a SaaS Company in 9 Months Without Engineers

Dec 4, 2025

Introducing Reforge Build

The Rise of the Operators has been top of mind for us here at Reforge lately. Early in 2025, we (like everyone else) were fascinated by the possibilities but frustrated that the vibe coding tools on the market weren't purpose-built for PMs working with existing products, stakeholders, and organizational constraints. So we created Reforge Build, the AI prototyping tool for product builders. You can learn more and start building here.

My co-founder Andy Keil and I built three complete SaaS products in nine months. Just the two of us. Neither of us started as developers.

Full front-end, back-end, databases, deployed and running with paying customers. This kind of velocity is only recently possible, and I believe we're at the forefront of a fundamental shift in how software companies get built.

Call it The Rise of the Operators.

For most of my career, I ran Design and UX teams at companies with thousands of engineers, like Teradata, eBay, and MicroStrategy. Andy has always been a zero-to-one Product leader, most recently as Head of Product for a 20-person engineering team at QuotaPath, a Series B startup. We both know how product development is supposed to work. You hire engineers, write specs, run sprints, ship features.

We still do that. Well, sort of. Except on a dramatically compressed timeline without a team of engineers writing code.

Becoming The Technical Founder

When we started Dreambase, the plan was simple: prototype something quick with AI tools, then bring on a founding CTO to build the "real" product. We even talked to potential technical co-founders.

But AI tools kept getting better, which meant I could keep going. We pushed back our timeline for needing a CTO further and further out.

Around month three, Andy and I looked at each other and realized that maybe I should just step into that CTO role myself. Maybe we don't need to give up equity to bring on a technical partner. Maybe we can get this thing to market ourselves.

And we did.

Why Operators Move Faster

Counterintuitively, our background as operators is the main reason we're moving this fast.

Engineering expertise optimizes an early startup for the wrong things. When you know how to code, you start thinking about code quality, architecture, scalability, technical debt. It's all important—but later. None of it matters if you're building something nobody wants.

Being operators forced us to stay obsessed with the only question that mattered: Are we building something customers actually want?

That singular focus got the product and company off the ground. We validated our hypothesis with Supabase's customer base, secured initial funding, and opened our first roles. Getting this far on our own allowed us to zero in on exactly what technical expertise we needed to scale.

We'll probably hire engineers eventually (though honestly, the timeline keeps moving). In 2025, with the AI tools available right now, the bottleneck isn't writing code anymore. It's figuring out what to build.

Designing for Speed

Dylan Field, CEO of Figma, spoke at Supabase Select about something that captures the spirit of what we're doing:

"You have to always be designing the org for speed and whenever you notice a bottleneck, you have to figure out how to remove that bottleneck. And if you're not intentional about it, then you will slow down. You're always fighting to remain fast."

The bottleneck moved. Code isn't the constraint anymore. Customer insight is. And we've cracked a process for getting to customer insight faster than anyone we've talked to.

How We Actually Build

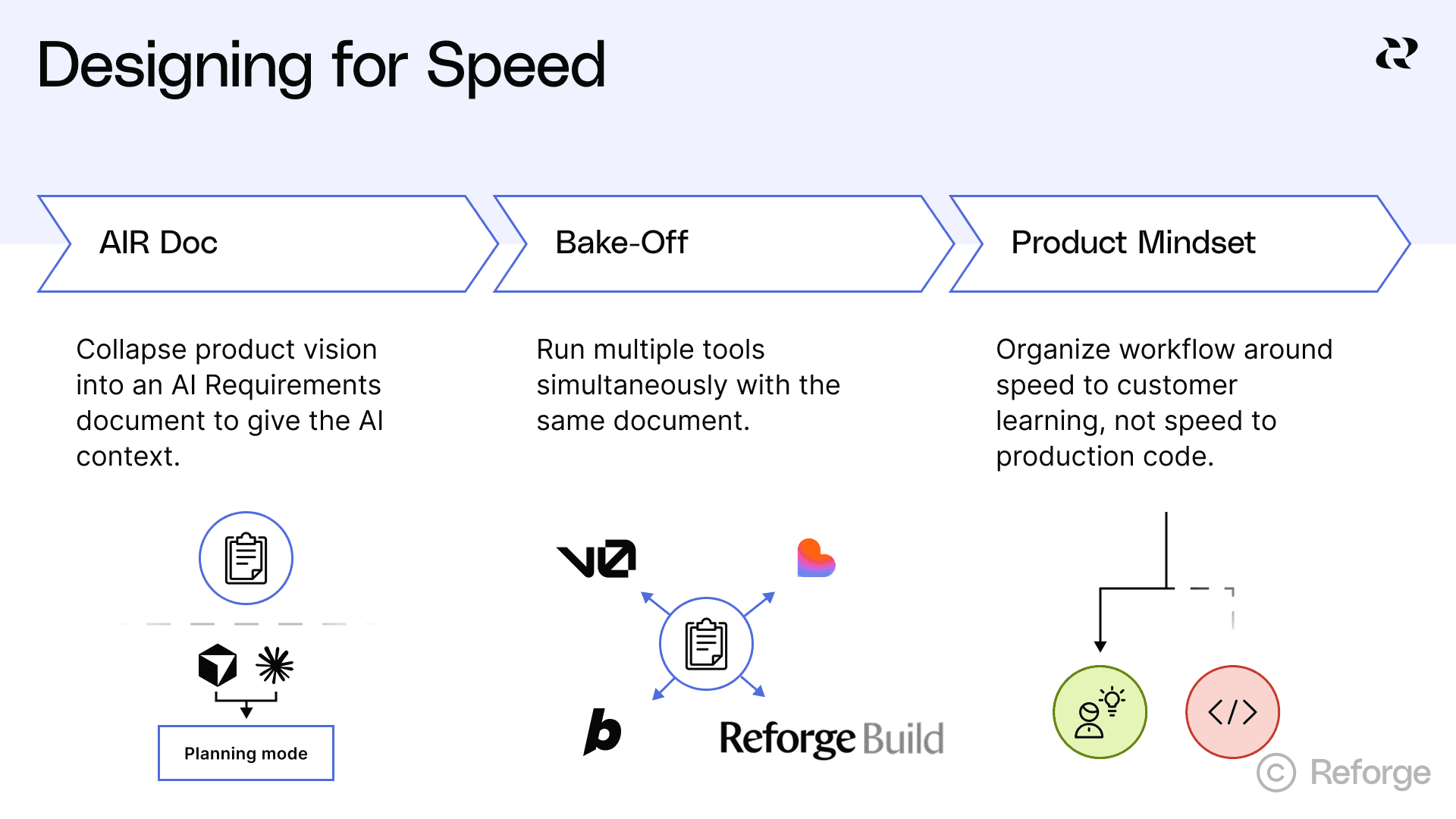

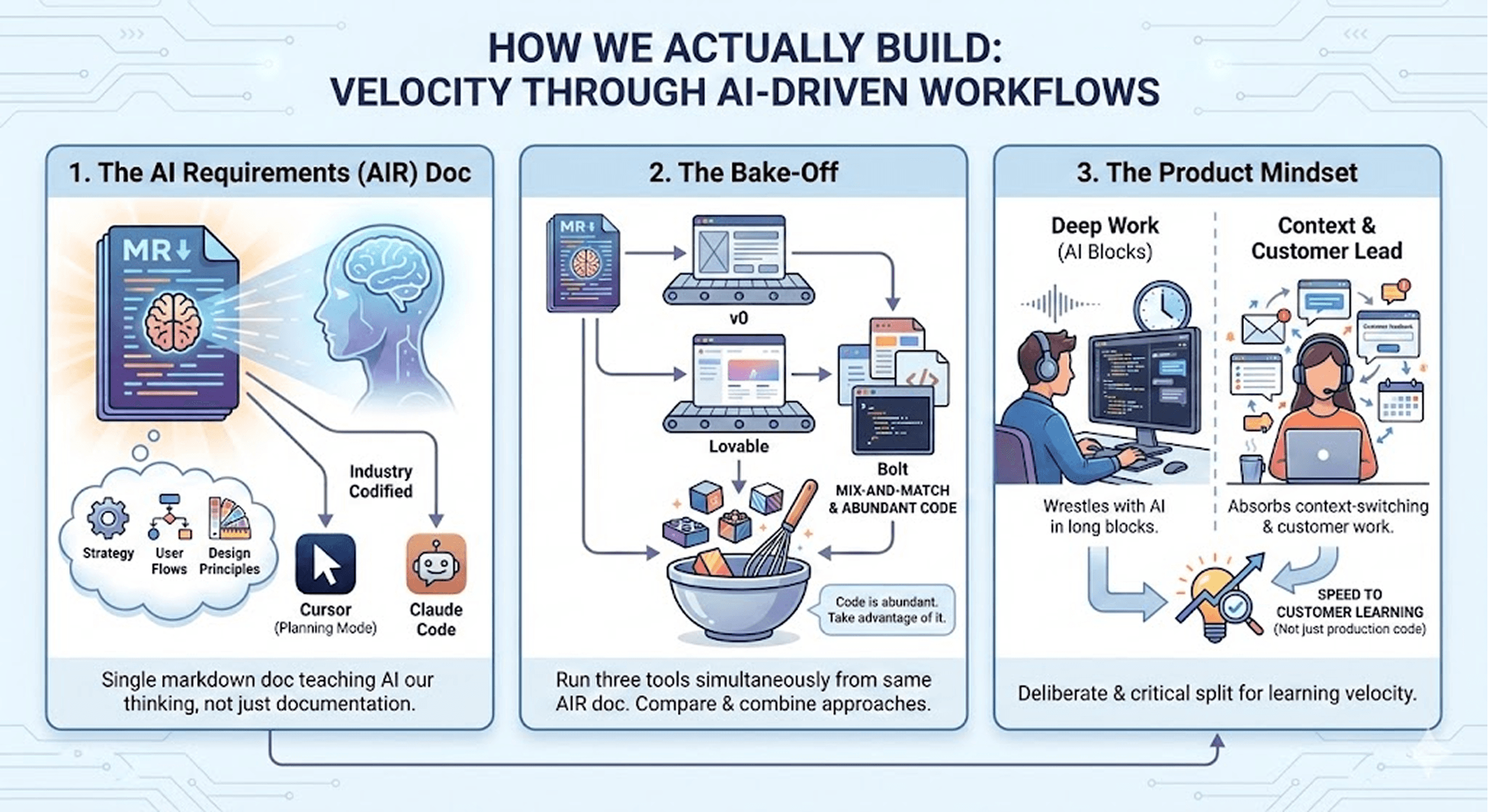

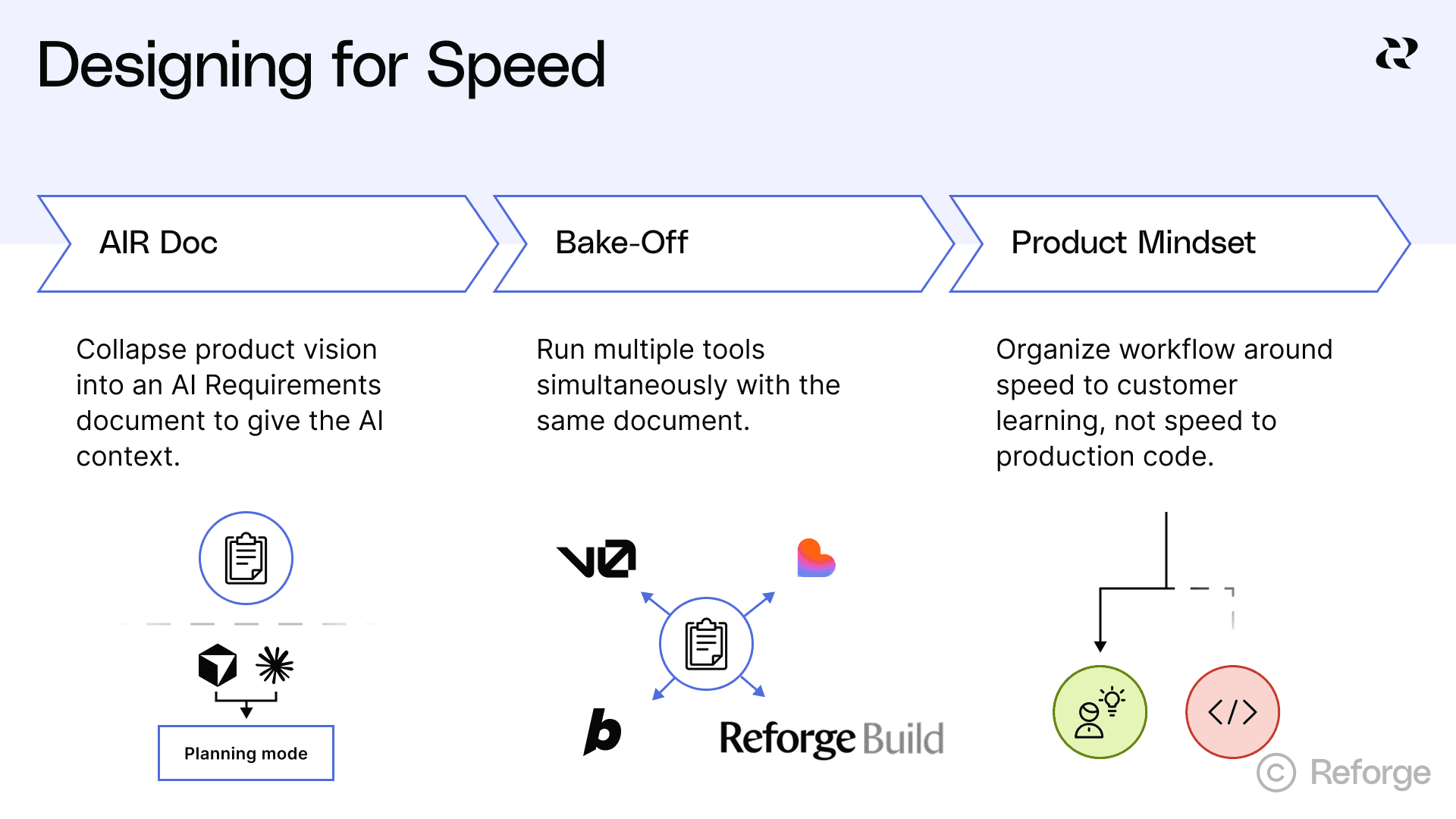

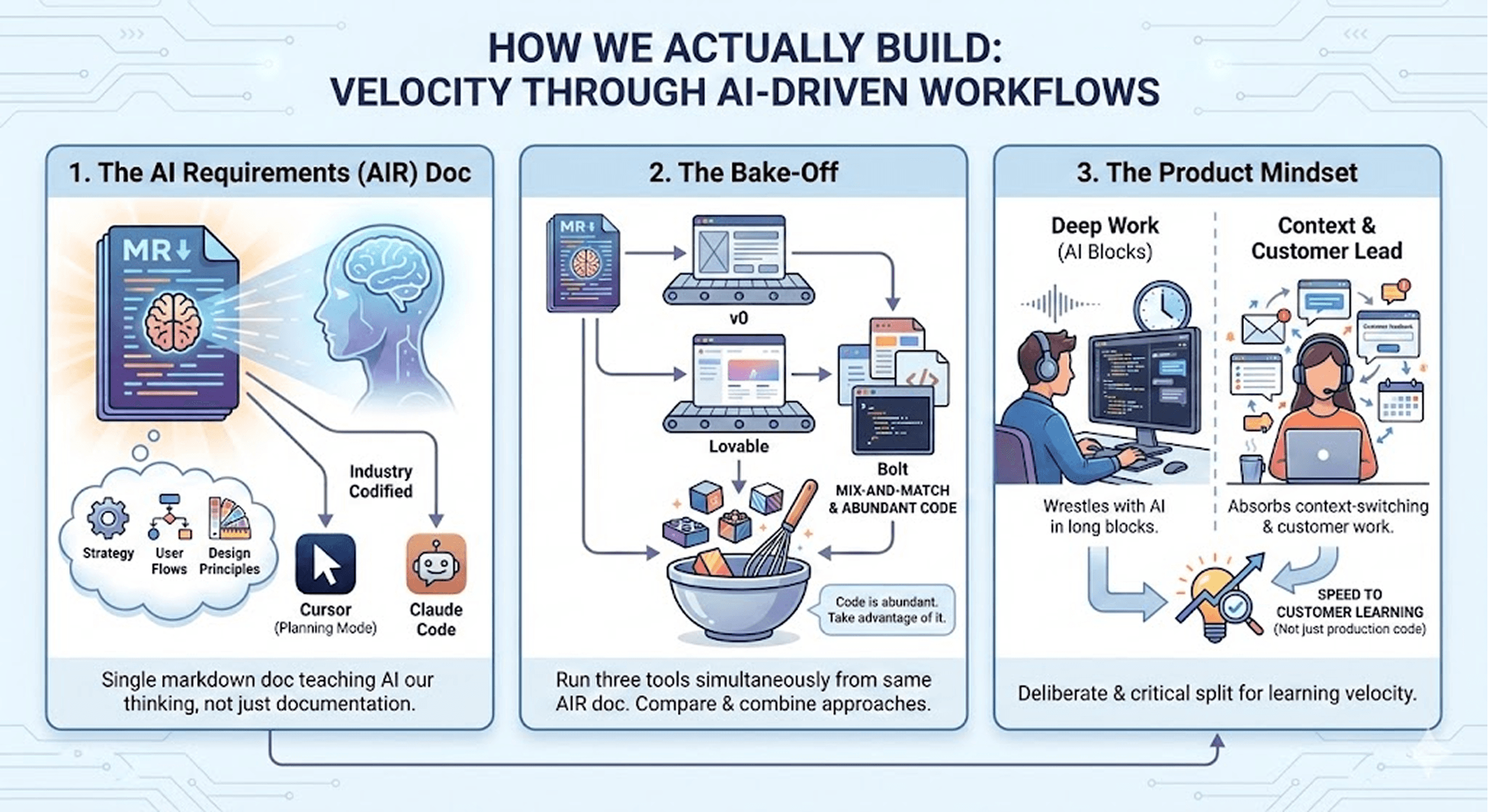

Our velocity comes down to three things:

1. The AI Requirements (AIR) Doc

We collapsed our entire product vision into one markdown document that teaches AI to think the way we do. It's not documentation—it's how we give AI full context on our product strategy, user flows, and design principles in every conversation.

Tools like Cursor and Claude Code are now codifying this approach with features like Planning Mode. The industry caught up to what we were already doing.

2. The Bake-Off

When it’s time to write code, I run three tools at the same time: v0, Lovable, and Bolt. All at once from the exact same AIR doc. Why commit to one tool's interpretation when you can see three different approaches and mix-and-match?

Code is abundant now. Take advantage of it.

3. The Product Mindset

We reorganized our entire workflow around speed to customer learning, not speed to production code. One of us wrestles with AI in long, uninterrupted blocks. The other absorbs all the context-switching and customer work.

This split is deliberate and critical.

The AIR Doc: Teaching AI to Think Like You

AI tools are only as good as the context you give them.

Most teams set themselves up for failure from the start. PRDs live in Notion, designs live in Figma, technical specs live in Confluence (if they exist at all). Every time you start a conversation with an AI coding tool, it's starting from zero.

You end up having the same conversations over and over. Explaining the same decisions. Re-establishing the same constraints.

Around month two, I was frustrated with how much time I spent re-explaining our data model to Cursor. I'd describe the tables, explain how users relate to workspaces, detail our auth flow. Then the next day I'd do it all over again.

So one night at Andy's place, working late, I started dumping everything into one massive markdown file. Database schema, product requirements, UX principles, technical decisions. Everything.

I called it "context.md" because I'm terrible at naming things.

Then something clicked. I could @mention that file in any conversation with Cursor. The AI would have full context every single time. We went from spending 30% of our time re-establishing context to 0%.

We Start in the Database

This feels backwards but works incredibly well. We kick off every new feature by modeling it in the database first—not wireframes or user stories.

We use database.build where you describe your product in natural language and it generates a Postgres schema. I'll just start talking: "Okay, we need user profiles, and users belong to workspaces, and workspaces can have multiple projects..."

You can't hide behind vague product requirements when you're defining a database schema. What actually is a "user"? How do workspaces relate to projects? Can a user belong to multiple workspaces?

(Spoiler: yes, and we didn't think about that until we had to model it.)
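To make that modeling question concrete, here is a minimal sketch of the relationships described above. It is an illustration only: the table and column names are assumptions, not Dreambase's actual schema, and it uses Python's built-in SQLite rather than the Postgres schema that database.build generates, purely so the example runs on its own.

```python
import sqlite3

# Hypothetical schema: every table and column name here is illustrative.
SCHEMA = """
CREATE TABLE users (
    id INTEGER PRIMARY KEY,
    email TEXT NOT NULL UNIQUE
);
CREATE TABLE workspaces (
    id INTEGER PRIMARY KEY,
    name TEXT NOT NULL
);
-- The join table is what makes "a user can belong to multiple workspaces" explicit.
CREATE TABLE workspace_members (
    user_id INTEGER NOT NULL REFERENCES users(id),
    workspace_id INTEGER NOT NULL REFERENCES workspaces(id),
    PRIMARY KEY (user_id, workspace_id)
);
-- Each project belongs to exactly one workspace.
CREATE TABLE projects (
    id INTEGER PRIMARY KEY,
    workspace_id INTEGER NOT NULL REFERENCES workspaces(id),
    name TEXT NOT NULL
);
"""


def build_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a few made-up rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.execute("INSERT INTO users (id, email) VALUES (1, 'ana@example.com')")
    conn.executemany(
        "INSERT INTO workspaces (id, name) VALUES (?, ?)",
        [(1, "Acme"), (2, "Side Project")],
    )
    conn.executemany(
        "INSERT INTO workspace_members (user_id, workspace_id) VALUES (?, ?)",
        [(1, 1), (1, 2)],
    )
    conn.executemany(
        "INSERT INTO projects (workspace_id, name) VALUES (?, ?)",
        [(1, "Dashboard"), (1, "Onboarding")],
    )
    return conn


def workspaces_for_user(conn: sqlite3.Connection, user_id: int) -> list[str]:
    """Return the names of every workspace the user is a member of."""
    rows = conn.execute(
        "SELECT w.name FROM workspaces w "
        "JOIN workspace_members m ON m.workspace_id = w.id "
        "WHERE m.user_id = ? ORDER BY w.id",
        (user_id,),
    ).fetchall()
    return [name for (name,) in rows]
```

Had the model put a single workspace_id column on users, the "multiple workspaces" case would have been impossible to represent, which is the kind of gap that only surfaces once the schema is written down.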

Then Low-Fidelity Wireframes

We do wireframes in Whimsical. I'm a designer by background, so I have to actively fight the urge to make things beautiful too early.

At this stage, wireframes are really about messaging. Andy and I will spend an hour arguing about the text on a button. Not because we're perfectionists, but because if we can't explain what this button does in 2-3 words, we don't understand the value prop yet.

Everything Goes Into One Document

Then we take all of it—the database schema, the wireframes, the product requirements, the UX principles, the technical constraints—and jam it into one markdown document that lives in Cursor.

We call it the "AI Requirements (AIR) doc."

Here's what's actually in it:

Database schema with explanations of why we structured it that way

User flows for core features

Design principles (we have 5-6 that guide every decision)

Technical constraints (built on Supabase, everything integrates there)

Decisions we've already made and don't want to revisit

It's part PRD, part technical design doc, part UX specification. Everything the AI needs to think about our product the way we do. And it lives right next to our code, same repo.
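As a rough sketch of that structure, the five parts listed above can be modeled as fields that render into one markdown file. The class name, field names, and example content below are assumptions made for illustration; the actual AIR doc is simply a hand-written markdown file.

```python
from dataclasses import dataclass


def _bullets(items: list[str]) -> str:
    """Render a list of strings as a markdown bullet list."""
    return "\n".join(f"- {item}" for item in items)


@dataclass
class AirDoc:
    """Hypothetical container mirroring the five parts of an AIR doc."""

    schema_notes: str
    user_flows: list[str]
    design_principles: list[str]
    technical_constraints: list[str]
    settled_decisions: list[str]

    def to_markdown(self) -> str:
        """Combine every section into a single markdown document."""
        sections = [
            ("Database schema", self.schema_notes),
            ("User flows", _bullets(self.user_flows)),
            ("Design principles", _bullets(self.design_principles)),
            ("Technical constraints", _bullets(self.technical_constraints)),
            ("Decisions already made", _bullets(self.settled_decisions)),
        ]
        body = "\n\n".join(f"## {title}\n\n{text}" for title, text in sections)
        return f"# AI Requirements (AIR) Doc\n\n{body}\n"


# Placeholder content; a real doc would carry the team's own decisions.
example = AirDoc(
    schema_notes="Users join workspaces through a membership table, "
    "because one user can belong to several workspaces.",
    user_flows=["Sign up, create a workspace, add a first project"],
    design_principles=["Button labels fit in 2-3 words"],
    technical_constraints=["Built on Supabase; everything integrates there"],
    settled_decisions=["A user can belong to multiple workspaces"],
)
```

Calling `example.to_markdown()` returns the full document as a string, which could then be saved in the repo under a name such as `context.md` and referenced from each AI conversation.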

When I'm working on a feature, I @mention the AIR doc and Cursor immediately has full context. I'm not explaining things to the AI. I'm collaborating with it like it's been on the team for months.

If you're trying to build faster with AI, start with one markdown file. Write down your core product decisions, data model, design principles, and technical constraints. Keep it updated. Reference it constantly.

AI is really good at writing code, but it's only as good as the context you give it. And it’s not just our workflow anymore. This AIR doc approach has been validated by the industry, baked directly into tools like Claude Code and Cursor via their new Planning Mode, and it’s now being designed into emerging products like Reforge Build, which ships Project Plan Mode and Project Context as first-class primitives. The process we hacked together in markdown has become the blueprint.

The Bake-Off: Running Three AI Tools in Parallel

When it's time to actually write code, I run three tools at the same time: v0, Lovable, and Bolt. All at once. With the exact same AIR doc.

When I first explained this at a product meetup in Austin, someone looked at me like I was describing an elaborate Rube Goldberg machine. "Why wouldn't you just pick the best tool?"

Because I don't know which tool is going to be "best" for this specific feature. And AI is so fast that I don't have to choose.

Here's how it works: I copy our AIR doc into all three tools simultaneously. Then I let them run. This usually takes about 10 minutes. Each tool generates a complete, working prototype based on the same specifications.

Then I look at all three and cherry-pick the best parts from each one.

Bolt might nail the Shadcn UI components. v0 might generate a better layout for this particular flow. Lovable might create cleaner component logic. So I just... take the best from each and combine them.
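The shape of the bake-off, one spec fanned out to several generators and the results collected side by side, can be sketched in a few lines. This is an assumption-heavy illustration: in practice the AIR doc is pasted into each tool by hand, and the stub generators below are hypothetical stand-ins, not the real interfaces of v0, Lovable, or Bolt.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# A generator takes the AIR doc text and returns some prototype output.
Generator = Callable[[str], str]


def run_bake_off(air_doc: str, tools: dict[str, Generator]) -> dict[str, str]:
    """Give every tool the same AIR doc in parallel and collect the results by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(tools))) as pool:
        futures = {
            name: pool.submit(generate, air_doc) for name, generate in tools.items()
        }
        return {name: future.result() for name, future in futures.items()}


def fake_tool(label: str) -> Generator:
    """Build a stub generator; a stand-in for a real prototyping tool."""

    def generate(air_doc: str) -> str:
        return f"[{label}] prototype from {len(air_doc)} chars of context"

    return generate


# Hypothetical line-up: each entry is a stub, not a real integration.
TOOLS = {name: fake_tool(name) for name in ("v0", "Lovable", "Bolt")}
```

The key property is that every tool receives identical input, so any difference between the outputs reflects the tool's interpretation rather than a difference in the brief. Picking the best parts from each result remains a manual judgment call.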

Different LLMs, Different Approaches

These tools use different LLMs trained on different data. They literally think about problems differently.

I don't want to optimize for one tool's way of thinking. I want to see multiple approaches to the same problem and then make an informed decision about which direction is better.

Some people call this "competitive prototyping." Andy and I just call it “the bake-off.”

From Prompt to Customer in Days

We go from database schema to working prototype to something a customer can actually use in a matter of days.

Andy doesn't send customers Loom videos or do screen-share demos. He sends them the actual link to the prototype. "Here, play with this and break it."

He's particular about this. "If they're not actually touching and feeling it, I trust it less," he told me once. He always sends the link ahead of time, has them share their screen on the call, and records everything.

We can build and iterate all day long, but until we get in front of customers, it's not real. So we optimize the entire process for speed to that moment.

Database → wireframes → AIR doc → bake-off → customer validation → ship.

We run this cycle for every single feature, not just the initial product.

The Product Mindset: Reorganizing Around Learning

Working with AI like this doesn't just change how you code. It changes how you should structure your team.

Andy and I split our responsibilities in a way that wouldn't make sense for a traditional startup. I do all the AI work. Andy does zero coding. Instead, he handles everything customer-facing: research, demos, feedback sessions, support.

This isn't because Andy can't learn AI tools (he absolutely could). It's because AI work requires a totally different kind of time than customer work.

When I'm working with Cursor or Claude, I'm in what I can only describe as a bare-knuckle boxing match with the LLM. I'm close to getting something working. One more prompt. One more test. I know I'm almost there.

You can't do that effectively if you're jumping into customer calls every 30 minutes. You need deep, uninterrupted time. Context-switching kills productivity.

Traditional Collaboration Is the Wrong Model

Most teams are still running standups, sprint planning, retros—all the agile ceremonies we've used for years. Those make sense when coordination is the hard part. When you need five engineers to work together on one feature.

But coordination isn't the hard part anymore. Deep focus is the hard part.

We removed the code bottleneck by using AI. But we almost immediately created a new bottleneck: my ability to maintain focus long enough to wrestle with the AI effectively.

So we protect my time aggressively. While Andy's calendar is often owned by other people, mine is owned by me. This works because Andy's job is to get real customer feedback as fast as possible so we know what to build next.

What Being Operators Forced Us to See

Code used to be expensive and customer conversations were relatively cheap. You could talk to as many customers as you wanted, but it didn't speed up shipping features.

Now code is insanely cheap. We can build virtually any feature we want. The scarce resource is knowing which are the right features for the right users with the right positioning and pricing.

Finding the right person to talk to, getting on their calendar, asking the right questions, synthesizing the feedback—that's what's expensive now.

I think our background as operators is the main reason we figured this out. And why I believe there will be many more people like us and companies like Dreambase.

We don't get lost in technical architecture because we don't know enough about it to get lost. We can't worry about code quality or technical debt because, honestly, we're not sure we'd recognize it if we saw it.

What we do have strong opinions about is whether we're building something customers actually want. That's the question that kept us up at night. And it turns out that was the only question that mattered at this stage.

The Rise of the Operators

AI unlocked an insanely fast and effective way to build products. It's a superpower if you can surround it with good process and resist the temptation to do things the old way.

We got our AI-native analytics product to market fast enough to validate it with Supabase's customer base. (We coined the term "AI-Native Analytics" early on. Companies like Amplitude are now using it too, which is both validating and slightly surreal.)

We're still searching for product-market fit in the larger analytics market, but we're iterating weekly instead of quarterly. That speed only exists because we stopped thinking like a traditional engineering team.

For the first time in the history of software, operators who deeply understand customers, products, and business problems can go from insight to deployed code without waiting on engineering capacity.

I understand how this can be uncomfortable. I've talked to experienced engineers who struggle with these tools because they're thinking about them the old way. They're trying to write better code faster instead of trying to validate customer problems faster. They're optimizing for elegance when they should be optimizing for learning.

Dylan Field is right: you have to design your org for speed and remove bottlenecks as soon as you notice them. The bottleneck has moved. And it'll move again.

Before AI: More engineers = faster shipping.

After AI: Better product thinking = faster shipping.

We built three products in nine months as operators stepping into technical roles. We did it by collapsing our product vision into one document the AI could actually use, running multiple tools in parallel to see different approaches, and reorganizing our entire workflow around learning from customers instead of writing perfect code.

If we can do that, what's stopping you?

Want to see what AI-native analytics looks like? We built Dreambase to give Supabase users instant product insights without the complexity of traditional analytics tools.
