
In his recent ref:AI session, Justin Farris, VP of Product at GitLab, delved into the transformative impact of AI on the product development process. With a background spanning roles at GitLab and Zillow, Justin shared his insights from building AI products over the past few years. In this post, we’ll examine Justin’s key points, and you can view the full recording below.

Introduction to AI-Driven Product Development

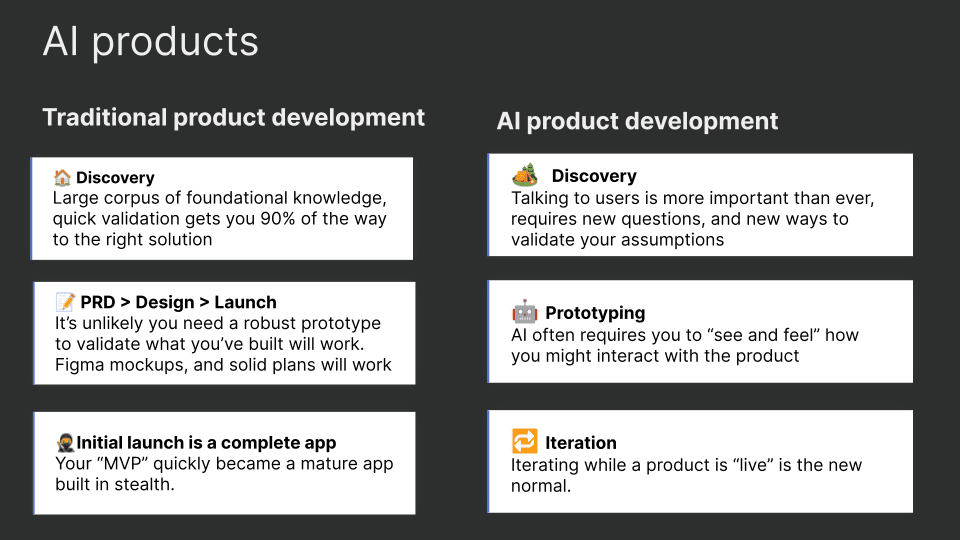

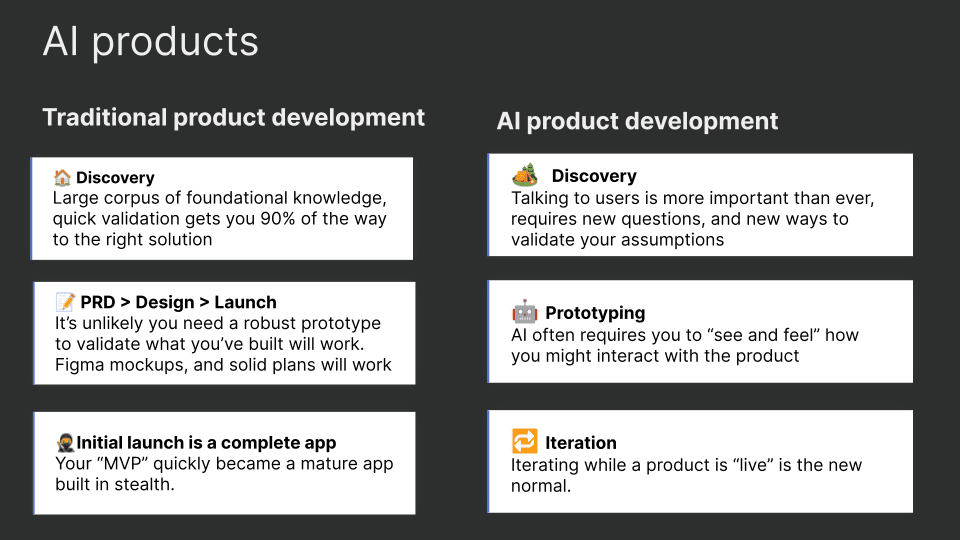

Justin begins by acknowledging the seismic shift in product development brought about by AI. Traditional product development has long relied on established frameworks and methodologies, refined over decades. However, the introduction of AI necessitates a fundamental change in approach. The traditional process, while still valuable, must now evolve to integrate AI's capabilities and unique challenges.

***Related Reading:*** AI/ML roadmap at Neurons Lab

Traditional product development often involves a linear progression: discovery, development, and launch. This approach is supported by decades of best practices and tools that streamline the journey from concept to market. However, with AI, several aspects of this process require rethinking. Justin outlines how this changes.

Discovery: A New Level of Importance

In the AI era, discovery is more crucial than ever. Product managers with deep domain expertise have always been valuable, but now, understanding the nuances of AI capabilities and limitations is essential. Justin emphasizes the need for rigorous user research and validation, because AI systems are inherently non-deterministic: the outcome is not predetermined and can vary each time the system is run. This means that continuous user feedback and real-world testing become vital to refining AI products.
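To make the non-determinism concrete, here is a minimal sketch of how sampling produces varying output. It is not taken from Justin's talk: the `sample_token` helper and the scores in `LOGITS` are hypothetical, and a real model works over a far larger vocabulary. The idea is only that picking the top-scoring word always gives the same answer, while sampling from the score distribution does not.

```python
import math
import random

def sample_token(logits, temperature, rng):
    """Pick one candidate word from a softmax over its scores."""
    if temperature <= 0:
        # Greedy decoding: always return the highest-scoring candidate.
        return max(logits, key=logits.get)
    # Dividing by the temperature flattens (>1) or sharpens (<1) the distribution.
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the peak for numerical stability
    weights = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    tokens = list(weights)
    return rng.choices(tokens, weights=[weights[t] for t in tokens], k=1)[0]

# Hypothetical scores for the next word of a suggestion (illustration only).
LOGITS = {"fix": 2.0, "refactor": 1.5, "revert": 1.0}

rng = random.Random()
greedy = {sample_token(LOGITS, 0.0, rng) for _ in range(200)}
sampled = {sample_token(LOGITS, 1.0, rng) for _ in range(200)}
print("greedy outputs:", greedy)
print("sampled outputs:", sampled)
```

Running the same input repeatedly at a non-zero temperature yields different words across runs, which is why a single demo or test pass says little about how the feature behaves for real users.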

Building AI Products: The Role of Prototyping

Prototyping in AI product development differs significantly from traditional methods. While a well-crafted PRD and Figma mock-ups were sufficient in the past, AI products require interactive prototypes that users can engage with. This hands-on approach helps validate early assumptions and refine the user experience in real time. Justin highlights the importance of being comfortable with early, imperfect prototypes to gather valuable feedback.

The Evolving Definition of MVP

The concept of a Minimum Viable Product (MVP) has also evolved. In the past, an MVP was a stripped-down version of the final product, built to test core functionalities. However, AI products often need to be more mature before they are market-ready. Continuous iteration and improvement, even post-launch, are now integral to the development cycle.

Discovery in AI Product Development

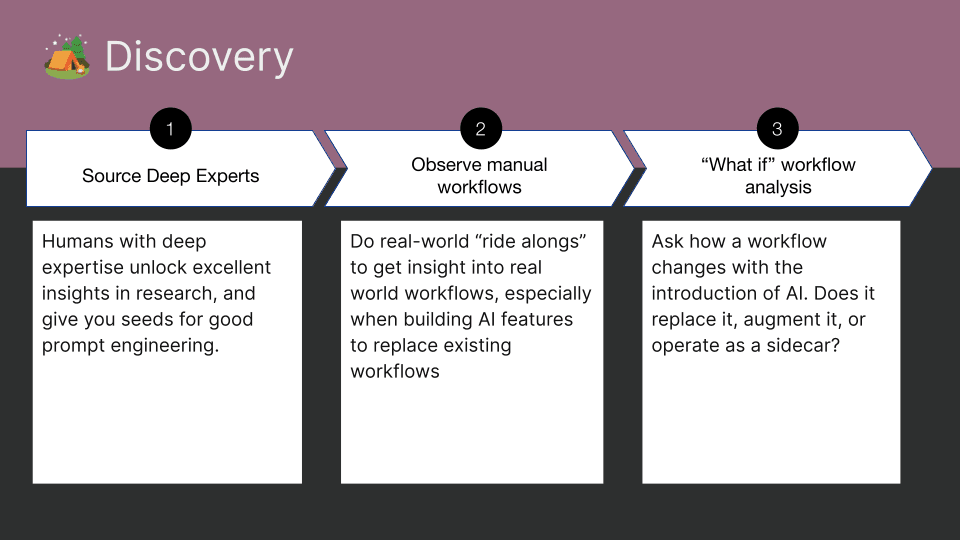

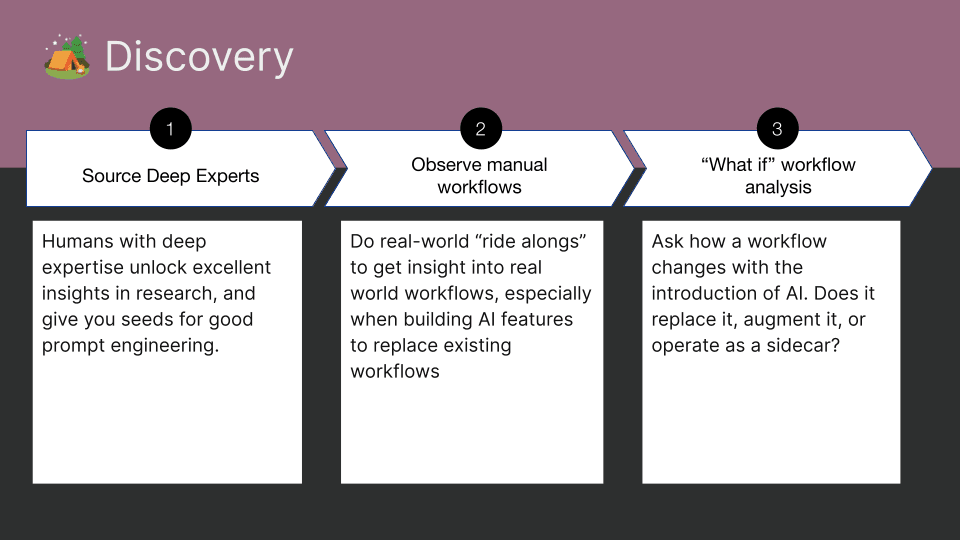

Justin explains that you can start with everything you’ve learned about user validation and discovery: practices like conducting UX research, running surveys, and joining customer calls will all still give you great insight.

But with AI, you should go a step further and add three steps to your discovery process:

Engaging with Domain Experts

Deep expertise in specific areas can unlock critical insights and guide prompt engineering. For example, GitLab’s code suggestions product benefits from the expertise of Ruby developers but also requires input from experts in other programming languages to ensure comprehensive coverage.

Observing Manual Workflows

Identifying pain points in current workflows can reveal opportunities for AI integration. Justin provides an example of GitLab’s root cause analysis feature, which automates the labor-intensive process of diagnosing CI pipeline failures.

Analyzing Workflow Changes

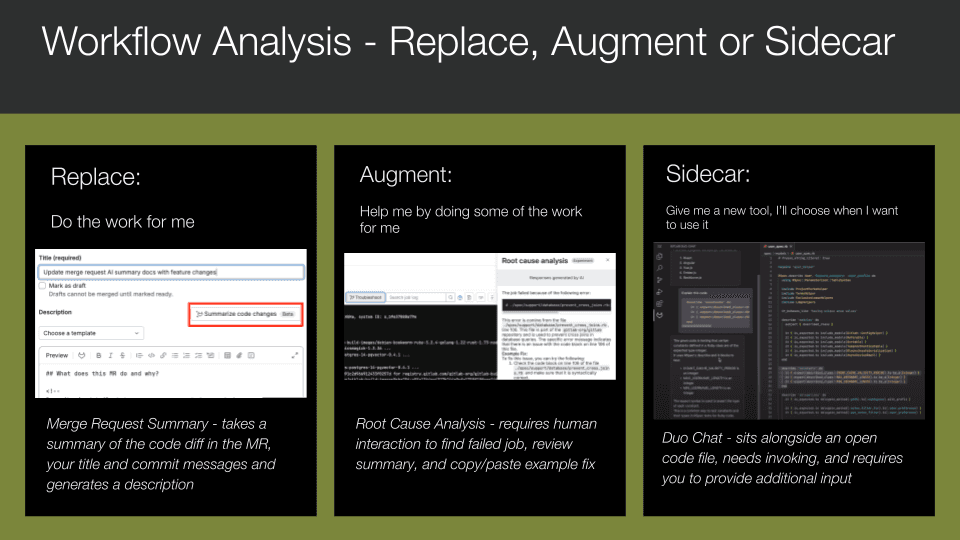

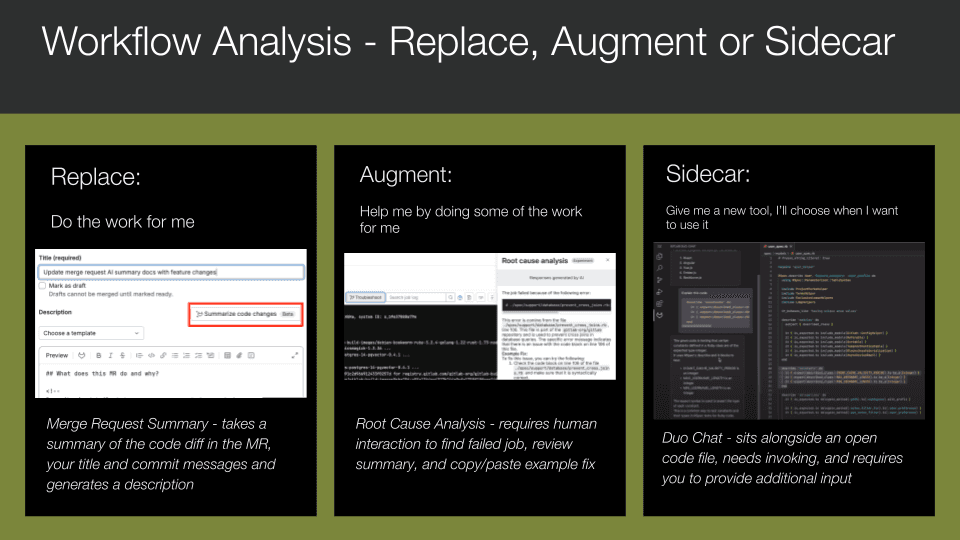

Understanding how AI might alter existing workflows is essential. Whether AI replaces, augments, or acts as a sidecar to current tools, it’s important to anticipate these changes and design accordingly. Let’s dive into each.

Replacement is when AI fully takes over a task you traditionally do, acting as the driver. It doesn’t mean removing control from you—maintaining control is crucial since this technology is still evolving. It means that with a simple click, AI completes an entire task for you, awaiting your acceptance of the suggestion. For example, GitLab Duo automatically writes a merge request summary based on the changes, commit messages, and provided title.

With augmentation, AI is integrated into an existing workflow but still requires user actions beyond just clicking “accept.” For instance, in Root Cause Analysis, AI doesn’t fix the issue on its own. It requires you to find the failed job, review the output, and decide if the suggested fix will work. Full automation might consume significant compute resources for large jobs, so user intervention is necessary.

A sidecar is a new tool in a user's workflow, which could theoretically stand alone. Chatbots are a clear example—it’s like adding ChatGPT into your UI. In this scenario, Duo Chat uses standard LLM techniques (e.g., asking for the command to push code via git or explaining a block of highlighted code) along with the context of the code and open files/dependencies you’re working with.

The next theme that Justin highlights is prototyping.

Prototyping AI Features

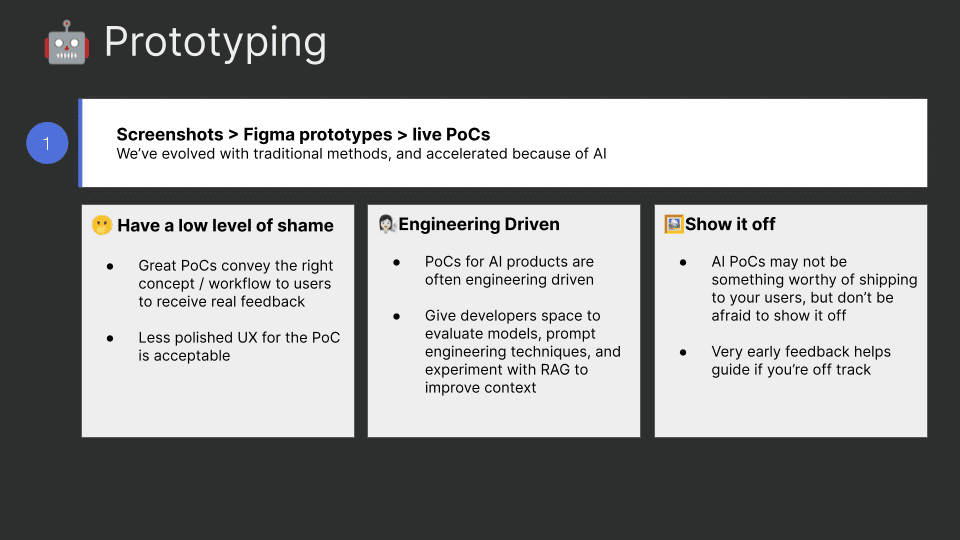

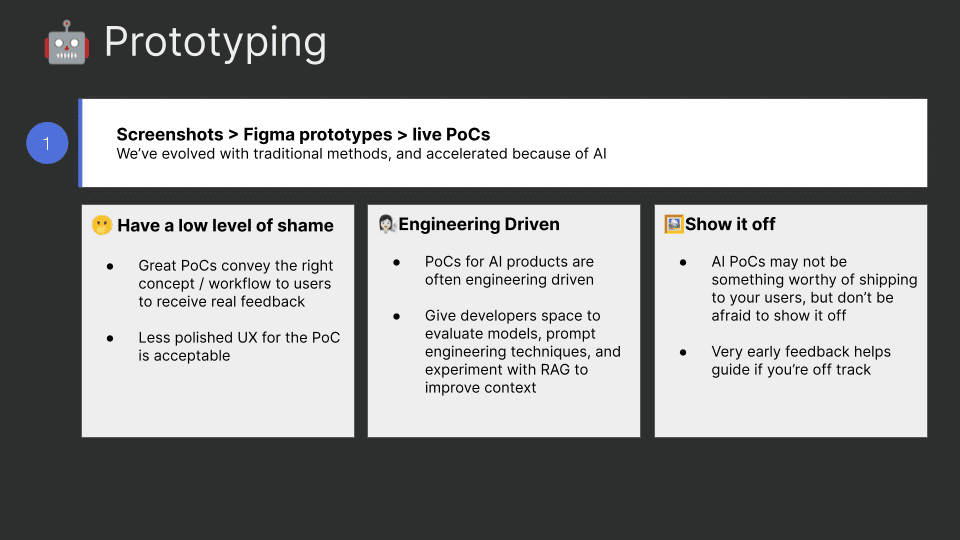

Justin notes that “the shift from static wireframes as mockups to using a tool like Figma to wire up your app before a single line of code is written has already been in motion for the past 10 years.” With Generative AI this has been accelerated.

***Related Reading:*** Bridging the gap from Gen AI POC to production

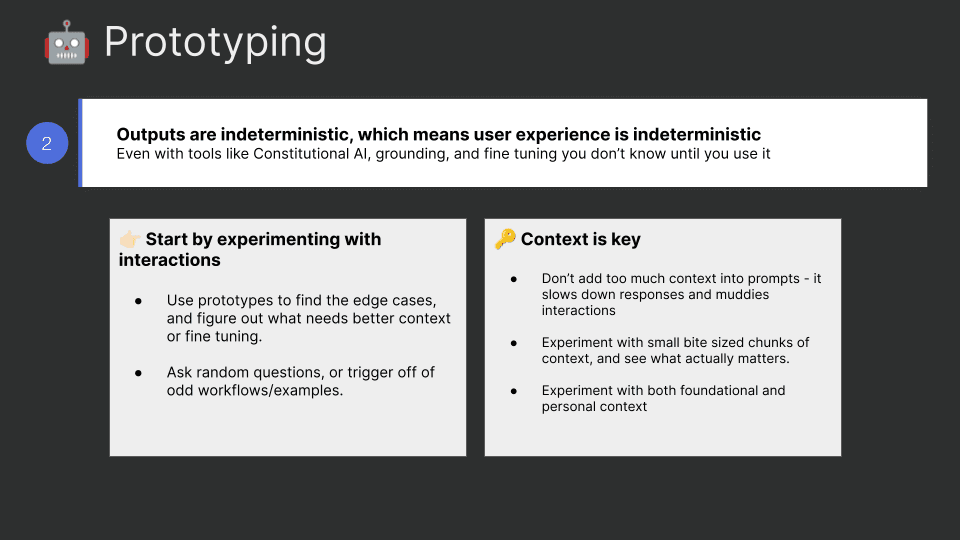

Prototyping AI features involves several unique considerations:

Low Level of Shame

Be prepared to show early, unpolished prototypes to users. This early feedback is crucial for guiding development. As Justin says, “It will probably suck, and that’s ok.”

Let the Engineers Play

Allow engineers to build and iterate on prototypes quickly. This approach facilitates rapid experimentation and refinement of AI models and prompts.

User Feedback and Context Are Crucial

Gathering user feedback on prototypes helps identify edge cases and refine the product. Experimenting with different levels of context in prompts can also improve AI interactions.

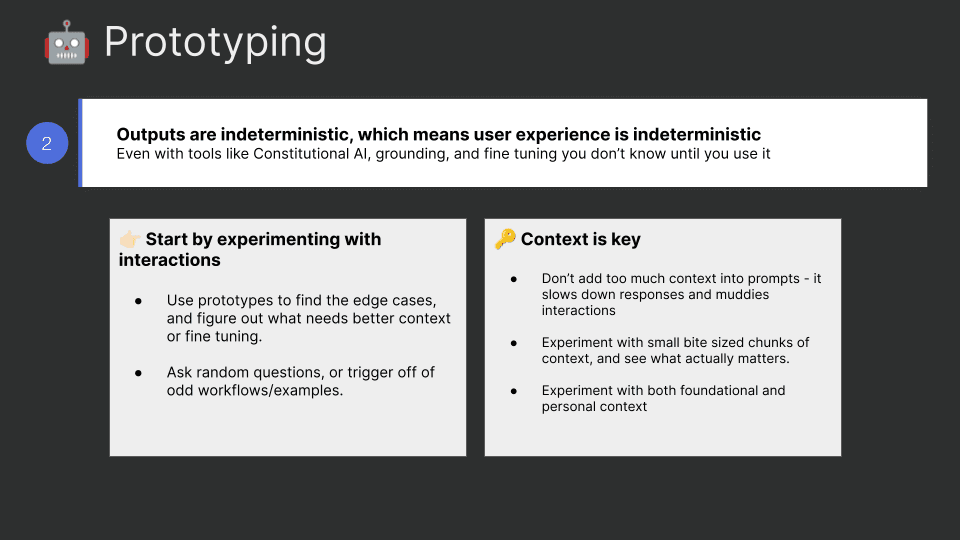

Real World Testing

In a vacuum, you may be able to understand conceptually how your product will work with AI deeply integrated, but AI systems are non-deterministic by nature. There are new tools and techniques like Constitutional AI or “grounding,” and you can always fine-tune your logic to prevent unwanted outputs. However, nothing is as effective as poking at it yourself to see what happens. Justin firmly believes that AI products, once in the market, will develop their own features based on user engagement, much like Twitter did in its early years with hashtags and retweets.

Standing up a prototype quickly to share with users in an “early alpha” (at GitLab, they call it “experimental”) state will give you some quick, early validation of whether you’re on the right track. Here’s how Justin approaches this problem:

Experiment with Interactions: Your goal is to find edge cases and determine whether they matter enough to be worth solving. Ask random questions or trigger unusual workflows. For example, when GitLab first introduced Duo Chat, they asked many strange questions to see the outcomes. Some were silly, but others helped refine the context used in prompts.

Context Wins: Experiment with context in your prototype to avoid bloating context windows. For example, it’s logical to assume that when building a coding assistant, you might want to include the entire repository in the context window. However, GitLab learned that leveraging open files in a user’s IDE combined with a ‘manifest’ of the dependencies in the repo provides a great experience while balancing performance.
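The context trade-off described above can be sketched in a few lines. This is an illustrative assumption, not GitLab's implementation: `OpenFile`, `build_context`, and the `max_chars` budget are hypothetical names, and characters stand in for tokens to keep the example self-contained. The sketch puts the dependency manifest first, then adds open files until the budget runs out, instead of sending the whole repository.

```python
from dataclasses import dataclass

@dataclass
class OpenFile:
    path: str
    text: str

def build_context(open_files, manifest, max_chars=4000):
    """Assemble prompt context from a dependency manifest plus open files.

    Files are added in order until the character budget is spent; a file
    that only partly fits is truncated. The manifest is always included,
    even if it alone exceeds the budget.
    """
    parts = ["# Dependencies\n" + "\n".join(manifest)]
    remaining = max_chars - len(parts[0])
    for f in open_files:
        if remaining <= 0:
            break
        header = f"\n# File: {f.path}\n"
        body = f.text[: max(0, remaining - len(header))]
        if not body:
            break  # no room left for any file content
        parts.append(header + body)
        remaining -= len(header) + len(body)
    return "".join(parts)

# Hypothetical editor state, for illustration only.
files = [
    OpenFile("app/models/user.rb", "class User\nend\n"),
    OpenFile("app/models/order.rb", "class Order\nend\n"),
]
print(build_context(files, ["rails", "pg"], max_chars=200))
```

Changing `max_chars` or the file ordering is a cheap way to experiment with how much context a prototype really needs before response quality or latency suffers.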

***Related Reading:*** How we built the Reforge Extension!

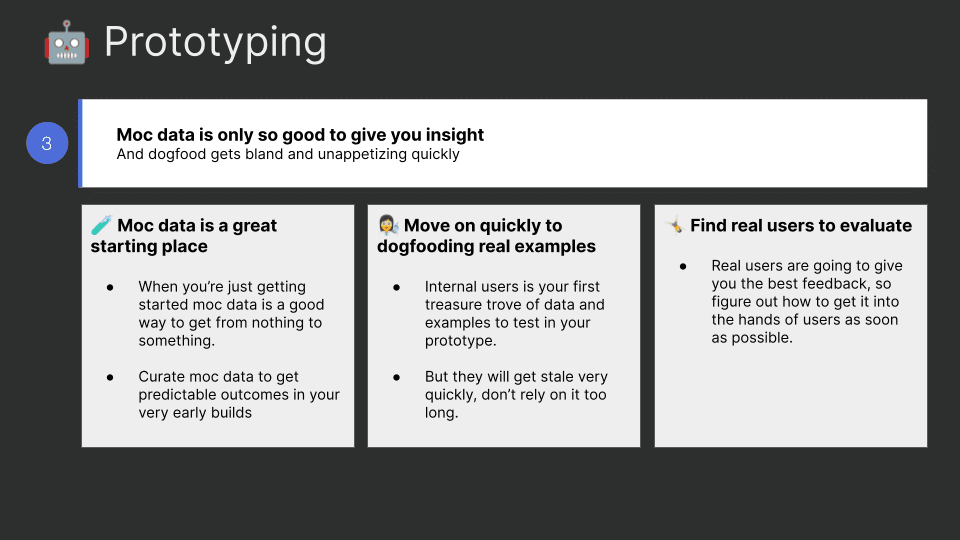

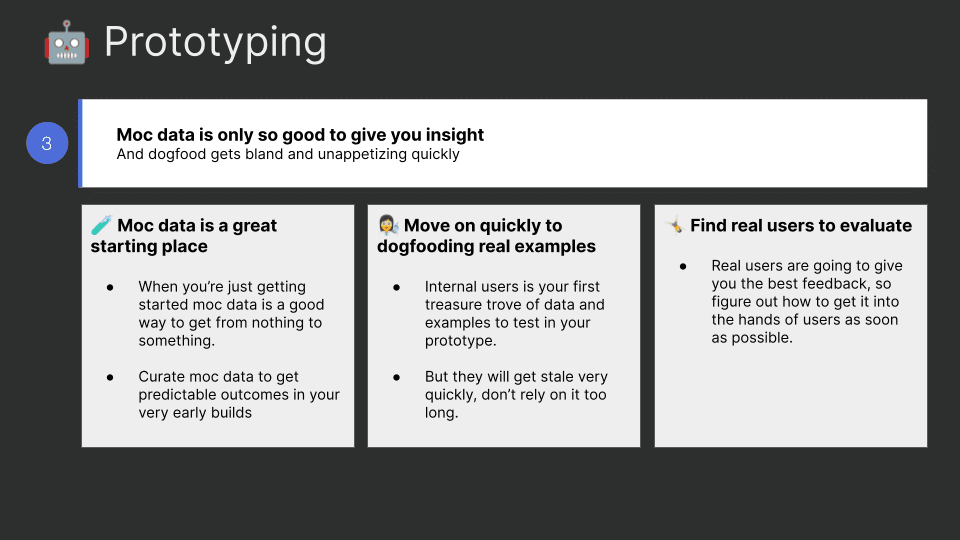

Data and Testing

Finally, Justin discusses the importance of real-world data and testing. While mock data is a good starting point, transitioning to real-world examples is essential for accurate validation. GitLab’s approach involves launching features in an experimental state to gather user feedback and iterate quickly.

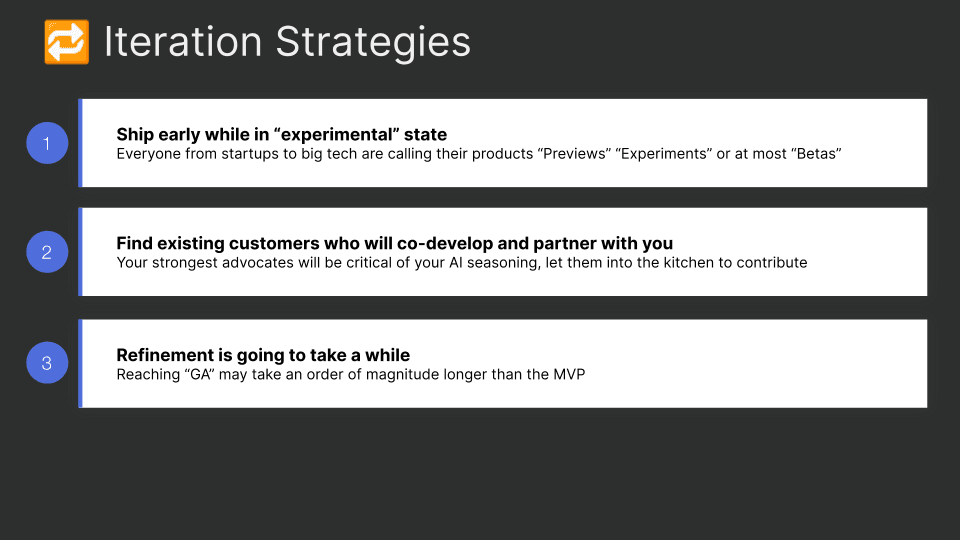

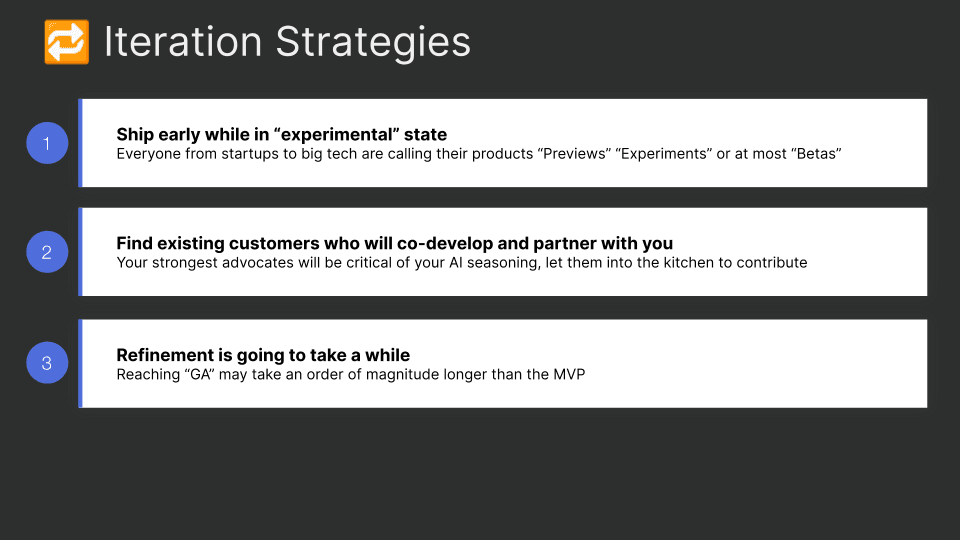

Iteration: Once You Ship, You’re Just Getting Started

Traditional beta testing is insufficient for AI products because it often misses edge cases. AI systems excel at handling unpredictable situations, but they can't anticipate every scenario. Unlike conventional products, AI requires continuous iteration in real-world environments to address these nuances effectively.

User interaction is key. However, understanding AI experiences is complex. While tools like A/B testing provide insights, they're limited in assessing AI-generated content. Approaches like diary studies, which follow users through their workflows, can offer more detailed understanding.

By continuously iterating and engaging with users, AI teams can manage the unpredictability of these systems and create user-focused solutions. Justin outlines three key iteration strategies.

Example: GitLab's Code Suggestions

To illustrate these points, Justin shares an example of GitLab’s code suggestions, one of their largest AI products. Launched in beta in May 2023 and in GA by December 2023, the product has undergone seven major releases, 328 deployments, and dozens of customer feedback sessions. This extensive iteration process underscores the importance of patience and persistence in AI product development.

Key Takeaways

Justin concludes with key takeaways for AI-driven product development:

Engage in Continuous Discovery: Talking to users is paramount. Move beyond surveys and quantitative data; engage directly with users to validate assumptions and gather real insights.

Prototype Early and Often: Build prototypes early in the process, allowing engineers to iterate and refine. Share these prototypes widely to gather feedback.

Embrace Iteration: Accept that AI products require ongoing iteration. Move away from the idea of a perfect MVP and focus on continuous improvement with user collaboration.

With these principles in mind, product builders can navigate the complexities of AI product development and harness its transformative potential. Justin’s insights provide a roadmap for embracing the new era of innovation brought by AI.

Related Resources

Artifacts: Real-world work examples from hundreds of experts

Product spec for AI-powered malware scan MVP at BitNinja

Using Reforge’s AI to brainstorm around a new use case

GenAI Product Strategy and Roadmap at Spiffy.ai

Guides: Members-only, step-by-step instruction

Evaluate the value of Gen AI for your product

Evaluate techniques for incorporating LLMs into your product

Define Successful Conversational AI Products

Understand conversational AI technology

Design a Conversational UX Experience

Courses: In-depth upskilling with Reforge experts

Generative AI Products: How to Get from Idea to MVP - Polly Allen and Rupa Chaturvedi

GenAI Product Strategy - Aniket Deosthali

Podcast: Reforge’s Unsolicited Feedback is available on the platform of your choice

Hear Box CTO, Ben Kus, evaluate AI models and discuss his approach to building enterprise AI tools.

Listen to Sachin Rekhi react to the shrinking S-Curve’s impact on Product and Marketing Strategy and learn how to quickly find product-market fit in an AI world.

Discover how Claire Vo built Chat PRD.
