Reforge has been shipping like crazy lately. We've recently overhauled our course library to include in-depth courses on AI leadership, growth and productivity. We've also launched four AI SaaS tools for product teams:

🔧 Reforge Build is an AI prototyping tool to help you validate features faster without starting from scratch.

🚀 Reforge Launch enables builders to go from insight to launch with AI-native feature flags and configuration.

📈 Reforge Insights provides your entire product team (PM, Eng and Design) with actionable insights right in their existing tools.

🧠 Reforge Research helps you uncover new opportunities to drive higher conversion, retention and product satisfaction.

At our recent AI Summit, I opened with a simple premise. AI is touching every single thing we do as professionals, but the common narratives don't match what's actually happening. I've been tracking data across strategy, tools, teams, and process, and I want to share what I'm seeing. These are the gaps between expectations and reality that we all need to face.

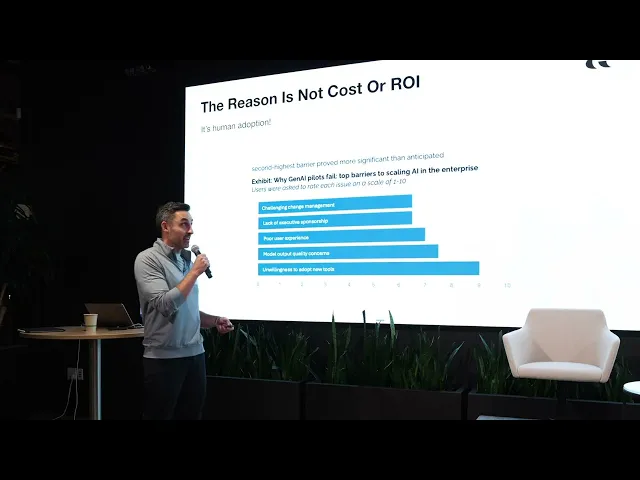

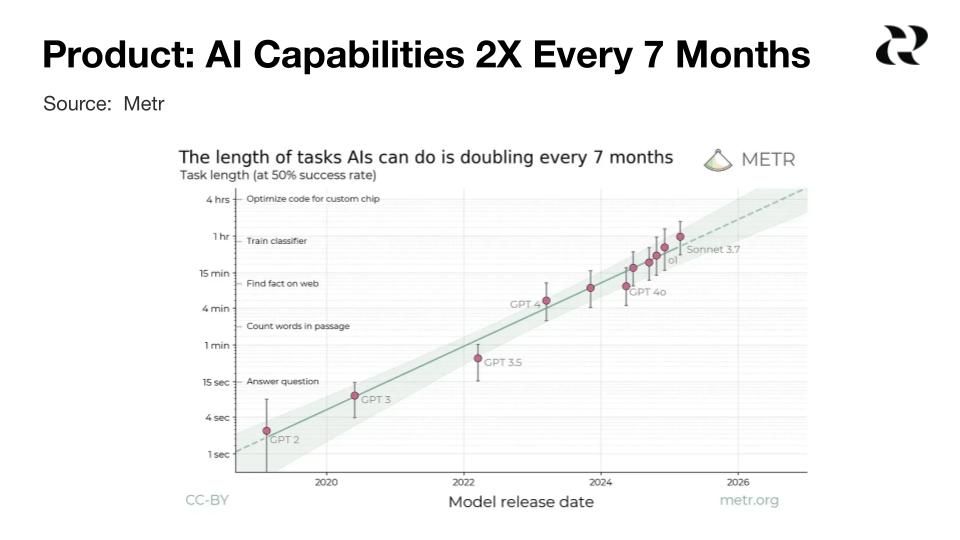

Product: AI capabilities double every 7 months

AI capabilities are still doubling every seven months. This creates fundamental questions for me about how we build durable product strategies when the ground keeps shifting beneath us.
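To make the compounding concrete, here is a minimal sketch of what a seven-month doubling period implies over a planning horizon. It assumes capability can be treated as a single scalar index, which is a simplification for illustration; the function name and the horizons shown are hypothetical, not taken from the underlying data.

```python
def capability_multiple(months: float, doubling_period_months: float = 7.0) -> float:
    """Growth multiple after `months`, assuming steady exponential doubling.

    Assumption: "capability" is one scalar index that doubles every
    `doubling_period_months`. Real capability measures are multi-dimensional.
    """
    return 2.0 ** (months / doubling_period_months)


if __name__ == "__main__":
    # Illustrative planning horizons, in months (hypothetical choices).
    for horizon in (7, 12, 24, 36):
        print(f"{horizon:>2} months -> {capability_multiple(horizon):.1f}x")
```

Under this assumption, a roadmap written today is competing against models several times more capable by the time a one-year plan ships, which is why the durability question matters.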

We're facing pressure to re-embed core capabilities within our products rather than relying on external AI tools. But I don't know yet whether this trajectory will hit diminishing returns like self-driving technology did.

Here's the critical challenge I'm wrestling with: how do we build differentiated products that competitors can't easily replicate when the underlying technology is evolving this rapidly?

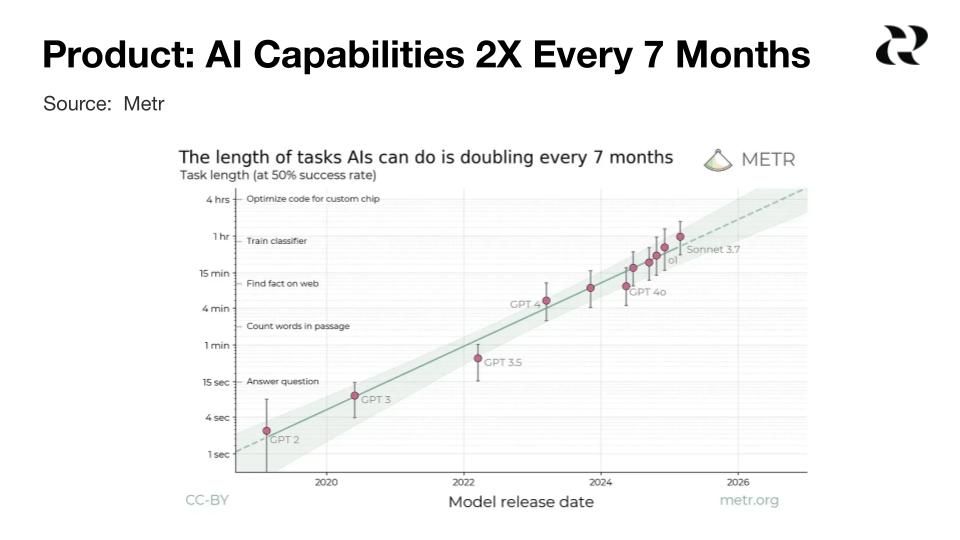

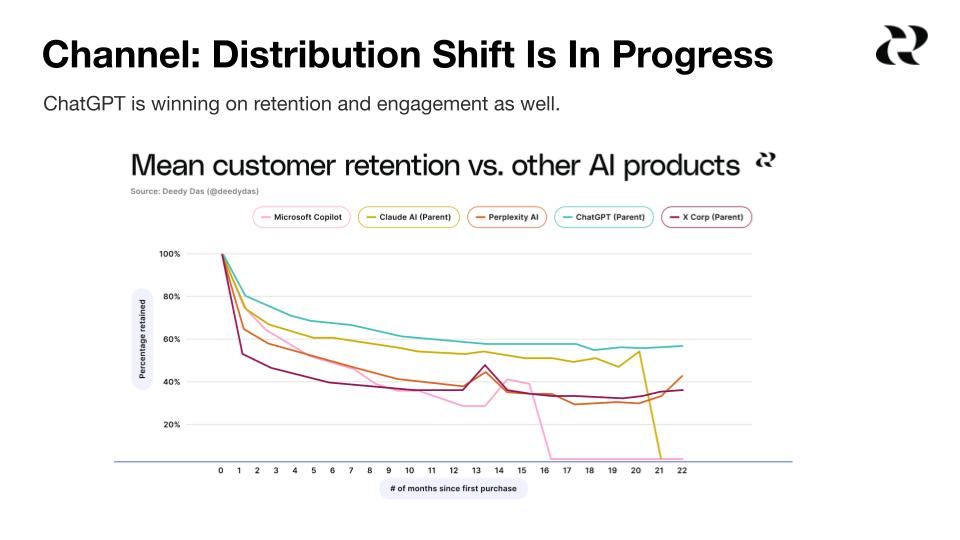

Channel: Distribution shift is in progress

ChatGPT is on track to surpass one billion monthly active users by the end of this year. What's striking to me isn't just that it's growing faster than other platforms but that its growth is actually accelerating.

I keep coming back to what history teaches us: the winners aren't those with the best initial distribution but those with the best retention and engagement. ChatGPT leads on both. Its retention curves are above all other players, and it has the highest time spent.

We've seen this pattern before. New platforms emerge, open development opportunities to draw users, then close to monetize. And we make the same mistake every time: incumbents try to copy-paste existing products into new environments rather than rebuilding for how people actually behave in these channels.

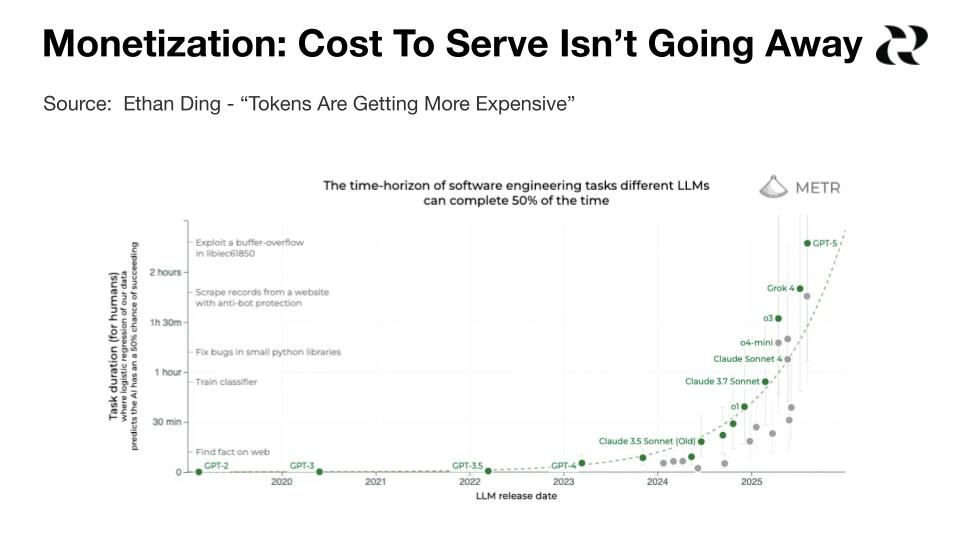

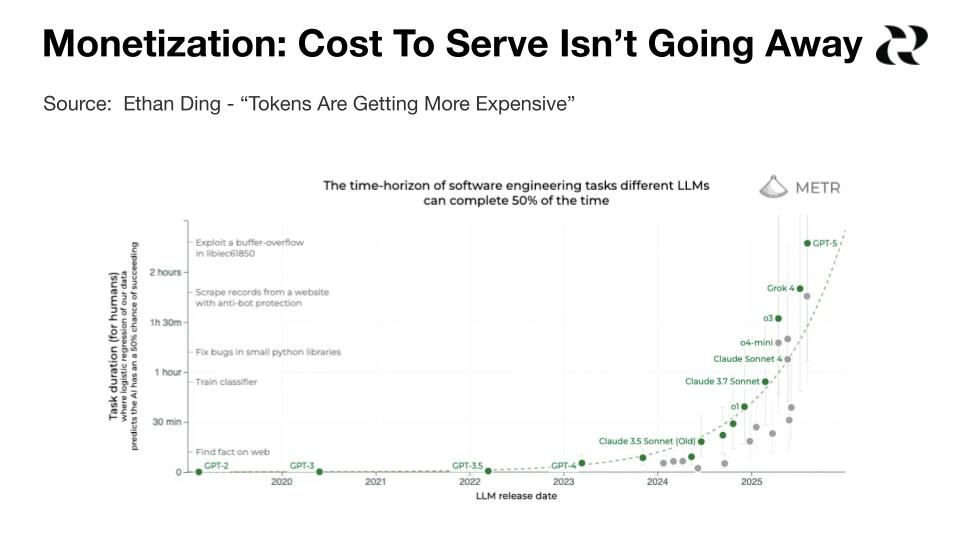

Monetization: Cost to serve isn't going away

Yes, older AI models are decreasing in cost rapidly. But here's what I'm tracking: the cost of the best, latest models at any given time has remained essentially flat. Consumers expect the quality and capabilities of the newest models, not yesterday's cheaper version.

A lot of startups took bets on 10x cost decreases, and I'm watching the math not work out. As model capabilities improve, users consume more tokens. That can actually increase total costs even if per-token pricing drops.
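The arithmetic behind that last point is simple enough to sketch. The numbers below are made up for illustration (they are not from any pricing sheet or from the data above), and `monthly_cost` is a hypothetical helper: it just multiplies usage by price.

```python
def monthly_cost(tokens_per_user: float, price_per_million_tokens: float, users: int) -> float:
    """Total monthly model spend: users x tokens per user x price per token."""
    return users * tokens_per_user / 1_000_000 * price_per_million_tokens


# Hypothetical scenario: per-token price halves, but each user consumes 3x the tokens
# because a more capable model gets used for longer, more complex tasks.
before = monthly_cost(tokens_per_user=2_000_000, price_per_million_tokens=10.0, users=1_000)
after = monthly_cost(tokens_per_user=6_000_000, price_per_million_tokens=5.0, users=1_000)

if __name__ == "__main__":
    print(f"before: ${before:,.0f}  after: ${after:,.0f}  change: {after / before:.1f}x")
```

In this scenario the per-token price fell by half and the bill still went up by half. Total cost only falls when price drops faster than consumption grows.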

I'm seeing the gap between customer expectations and willingness to pay grow wider. The customer who says "this is amazing, it built what would have taken me two weeks in two prompts" in Q1 is saying "I paid $20 for these credits and got ripped off" by Q3.

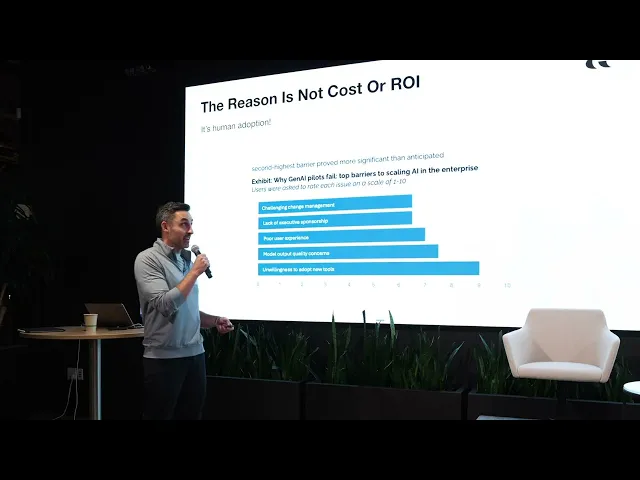

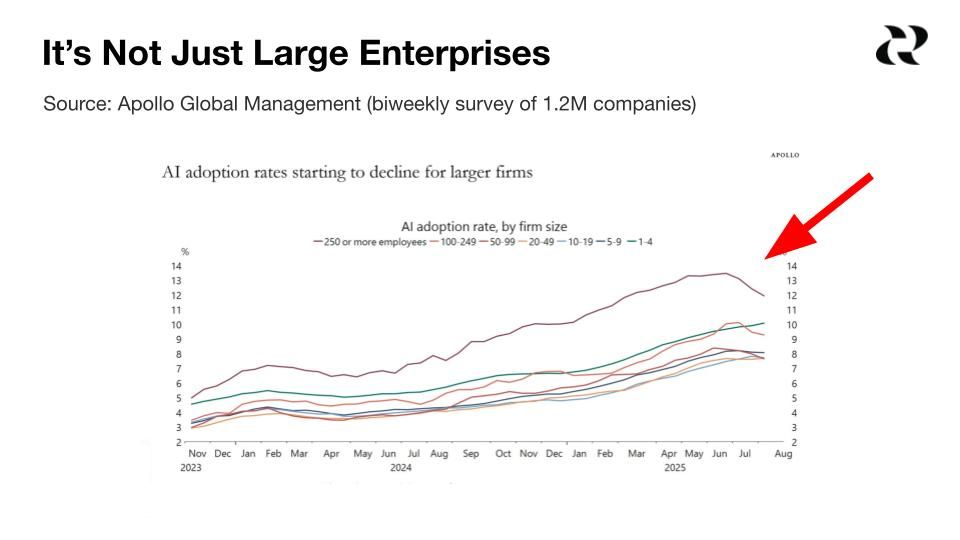

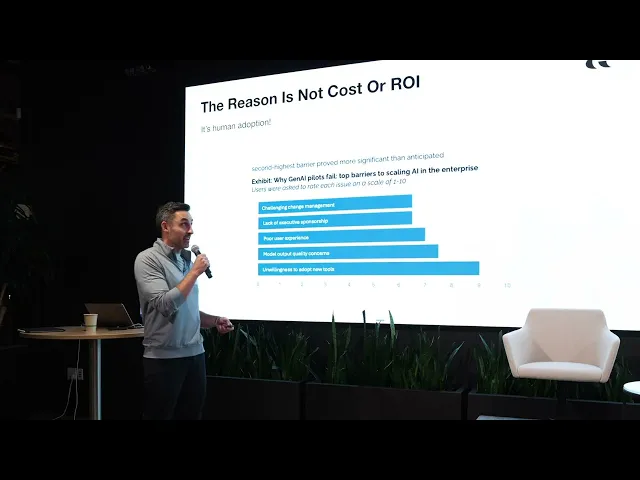

Tools: Only 5% of AI deployments make it to production

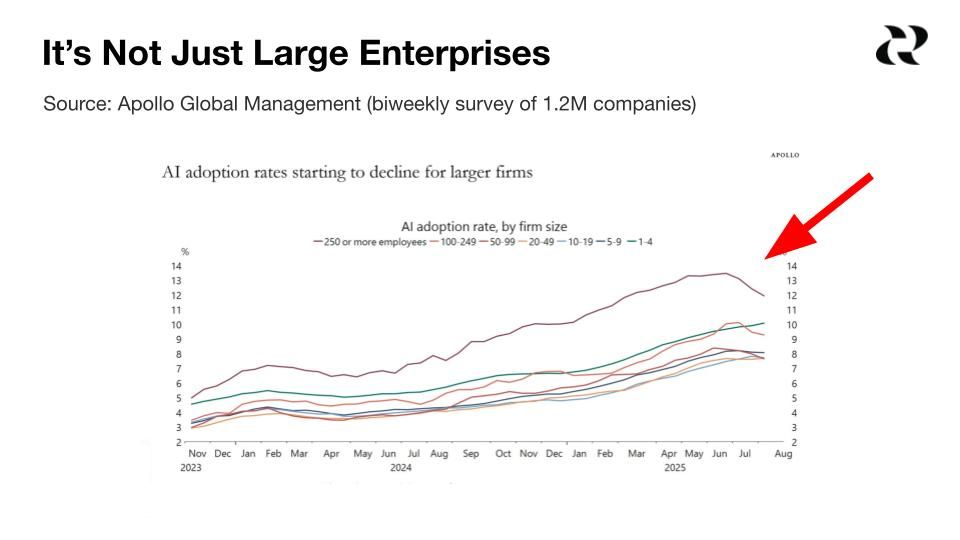

An MIT study found that only 5% of AI initiatives actually make it to production. This isn't just a large enterprise problem. I'm seeing it across companies of all sizes.

The failure reason isn't cost or ROI. It's human adoption. There's a major disconnect between what we as leaders think the friction points are and what our employees actually experience.

I see barriers in three forms: procurement processes where defense controls offense (taking months to approve $200 tools), political barriers when roles collide as AI blurs responsibilities, and permission barriers where people fear doing something wrong so they simply wait.

Team: AI isn't making teams smaller (yet)

Only 5% of companies are reducing team size because of AI. What I find fascinating is that the companies deploying AI most aggressively are actually hiring more. 45% of that hiring is happening in areas like customer service where the narrative says headcount should be declining.

Entry-level positions, particularly for software developers ages 22-25, are seeing major declines. But when I look at the forward-looking employers, I see the opposite. Shopify is hiring 1,000 engineering interns. LinkedIn launched an AI Product Builders program specifically to attract early-career talent.

The real shift I'm observing isn't the triad of product, design, and engineering blurring into nothing. It's the collapse of specialist roles around that triad. We're moving responsibilities from product analysts, researchers, and marketers back to the core team as AI enables augmentation of these tasks.

Process: Engineering efficiency gains don't equal product velocity

The common narrative says AI is speeding up software development, but it depends entirely on context. I've seen studies of open-source developers working on larger codebases with complex context where they were actually 20% less productive.

Companies have focused AI deployment on coding assistance far more than on any other part of product development. This creates a problem. Product development is a system, and a system is only as fast as its slowest stage.

Massively increasing the efficiency of engineering just shifts the bottleneck to product management. That's exactly what we're seeing happen. The old world of dozens of steps, multiple tools, specialists, and coordination handoffs needs to be completely reimagined for AI-native teams.
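A rough way to see the bottleneck shift is to model product development as a chain of stages where end-to-end throughput equals the slowest stage. The stage names and capacities below are hypothetical numbers chosen for illustration, not measurements.

```python
def bottleneck(stages: dict[str, float]) -> tuple[str, float]:
    """Return the slowest stage and its capacity, which caps the whole pipeline."""
    name = min(stages, key=stages.get)
    return name, stages[name]


# Hypothetical capacities, in features per month each stage can handle.
before = {"product_management": 4.0, "design": 5.0, "engineering": 3.0, "launch": 6.0}

# Assume AI coding assistance triples engineering capacity and nothing else changes.
after = {**before, "engineering": before["engineering"] * 3}

if __name__ == "__main__":
    for label, stages in (("before", before), ("after", after)):
        stage, capacity = bottleneck(stages)
        print(f"{label}: bottleneck = {stage}, throughput = {capacity:g} features/month")
```

In this sketch a 3x engineering gain lifts overall throughput from 3 to 4 features per month, about 1.3x, and the constraint moves to product management. The rest of the gain is stranded until the other stages are redesigned too.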

The path forward requires us to rethink not just how we use AI tools, but the fundamental systems of how product teams operate. This will be the largest transformation we've seen in the past 25 years.
