Reforge has joined Miro ↗

Putting a prompt into production requires understanding common failure cases and optimizing prompts through A/B testing for better performance. Prompt engineering involves five principles: giving direction, specifying format, providing examples, evaluating quality, and dividing labor. The process involves iterative testing and refining to achieve high accuracy and cost-effectiveness, leveraging both GPT-3.5 and 4 for different tasks while considering trade-offs between cost, quality, and speed.

Putting a Prompt into Production

Trial and error can only get you so far when working with AI, because when you get to the point where you’re running a prompt hundreds or thousands of times a day, you need to know how often it fails. Once you know what the common failure cases are, you can do something about it– a/b testing what changes to the prompt lead to measurable improvements in performance.

Prompt engineering isn’t finding that one combination of magic words that gets the AI to behaving how you want, it’s a process for optimizing your prompts and building a system for delivering reliable results at scale. AI doesn’t work the way we expect from computers – you don’t get the same results every time, they confidently give you incorrect answers, it takes time and costs real money to process a lot of responses.

To solve these problems I looked at all of the tricks and hacks I used to have to use in 2020 to get GPT-3 to work, and ditched anything that was no longer necessary with GPT-4. What was left was a set of timeless principles that were transferrable across models, and will still work with GPT-5, LLaMA 4, Stable Diffusion XXL, or whatever model you use in the future. I built the top prompt engineering course on Udemy around these principles (70,000+ students) and they form the basis of the prompt engineering book I just finished with O’Reilly (June 2024).

The Five Principles of Prompting are:

Give Direction: Describe the desired style in detail, or reference a relevant persona.

Specify Format: Define what rules to follow, and the required structure of the response.

Provide Examples: Insert a diverse set of test cases where the task was done correctly.

Evaluate Quality: Identify errors and rate responses, testing what drives performance.

Divide Labor: Split tasks into multiple steps, chained together for complex goals.

I use these principles like a checklist, working my way down until the prompt starts working reliably. Often for easy tasks I only need one or two principles to get the quality I need, but sometimes it takes weeks of iterating through all five until I find the right structure. I put together a Prompt Engineering template, containing examples of these principles applied to a case study. The next time you plan to use a prompt to complete a task 10, 100, or 1000 times, either in a web app or as a saved template, you can run through this worksheet to get much better results.

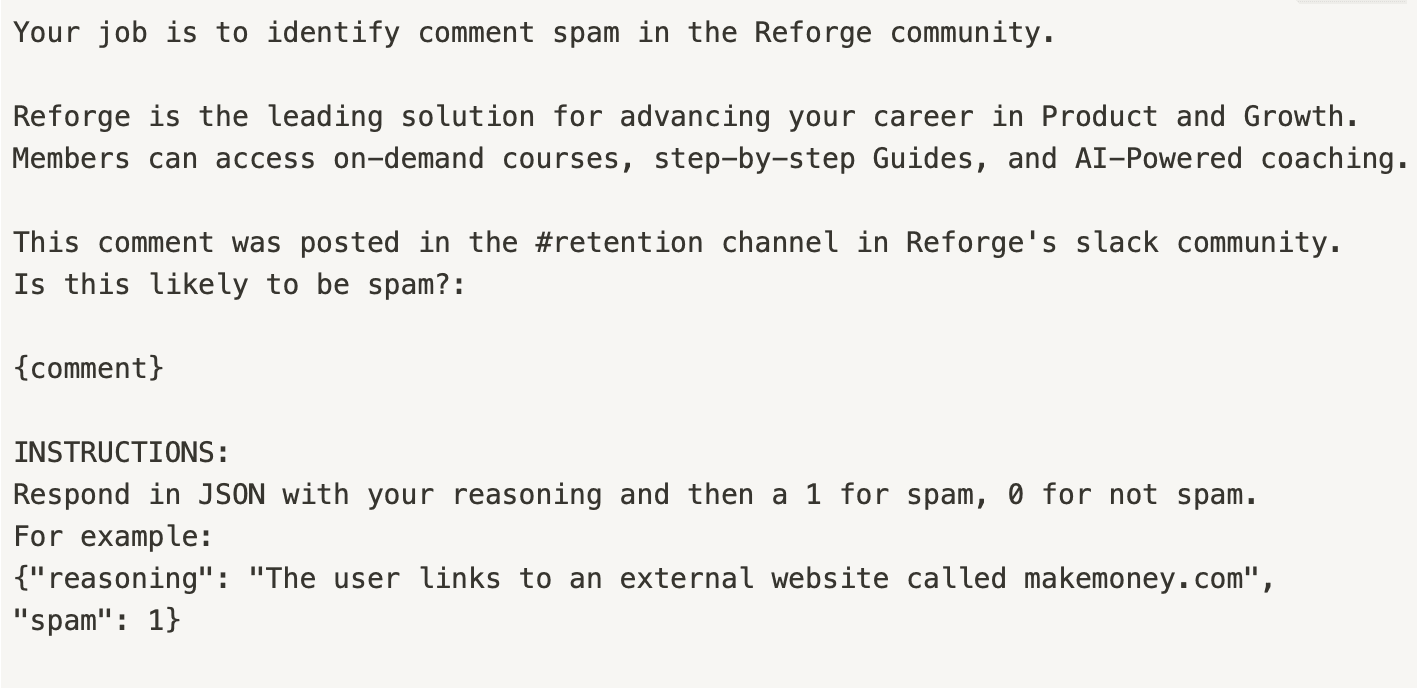

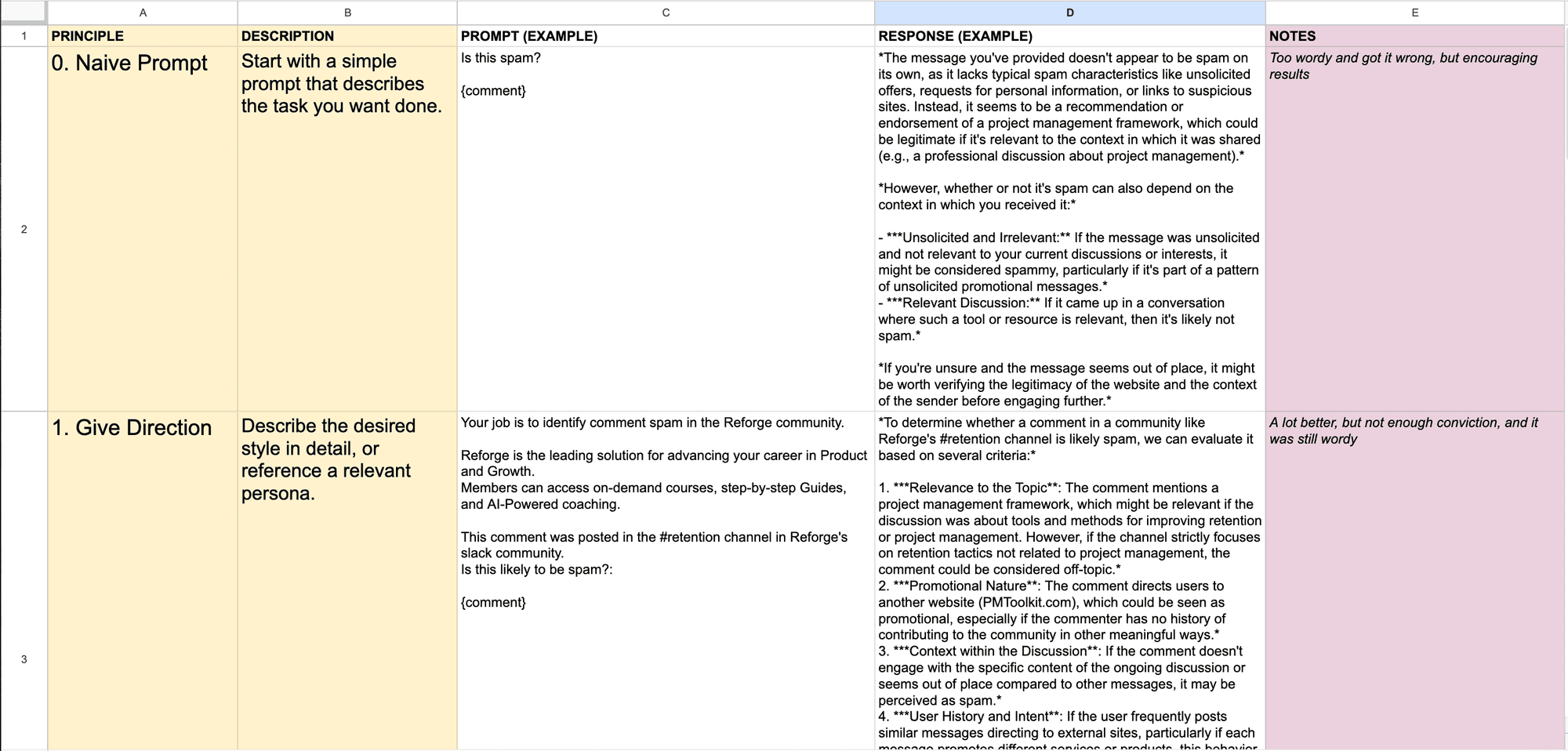

Prompt Engineering Principles Template

This prompt engineering template forms the basis of my engagements with clients as a prompt engineer, and on my own project through Brightpool. You can use it as a worksheet or checklist to run down step-by-step to optimize your prompt.

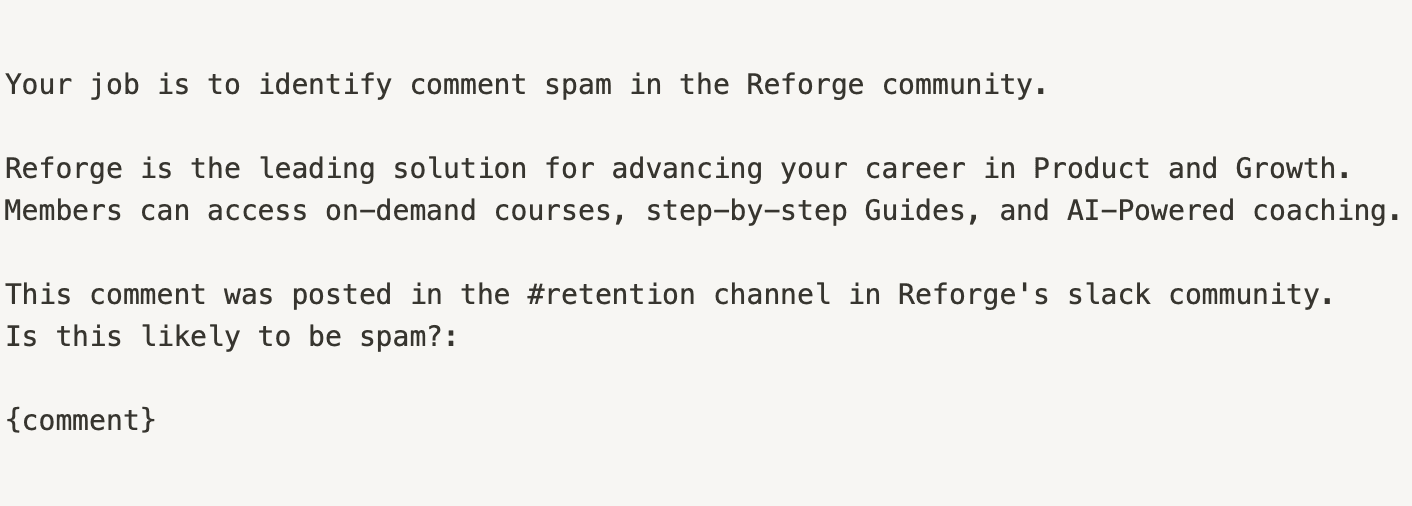

The template should be self-explanatory, and contains an example of how I used it to optimize a prompt for detecting spam. Read on if you want more detail about how I approached this task using the template.

Prompt Engineering Case Study

To show you how the template works, let’s run through a case study where we’ll apply the prompt engineering principles to optimize a prompt we plan to use in production.

Imagine you’re a product manager at Reforge working on eliminating forum spam. Getting in front of the Reforge audience to sell products is valuable, but nobody will want to stick around if every other post is a sales pitch. Most spam is relatively easy to detect, but often a judgement call needs to be made. You could hire a community manager, but the business is growing and you don’t want the cost and distraction of scaling up an entire team.

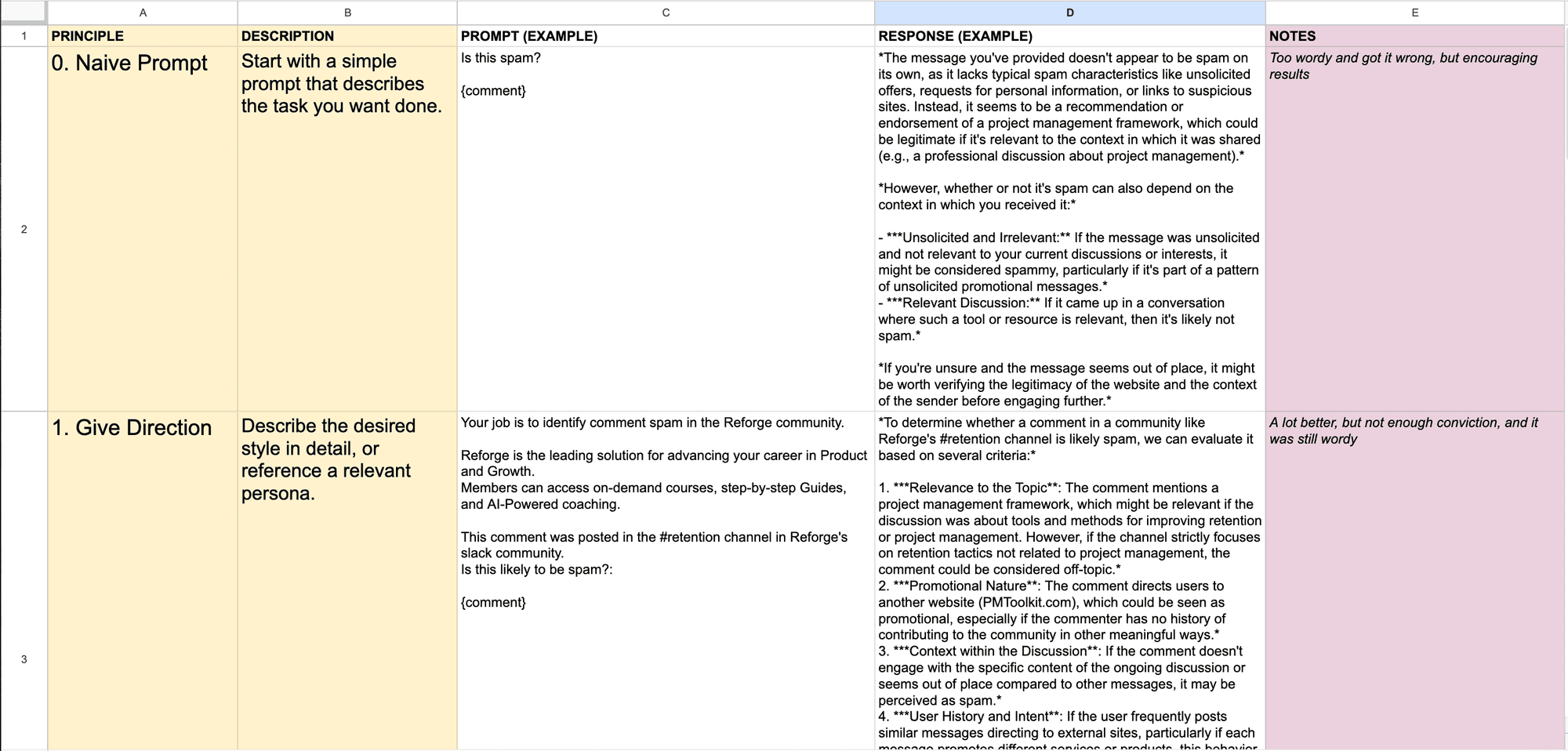

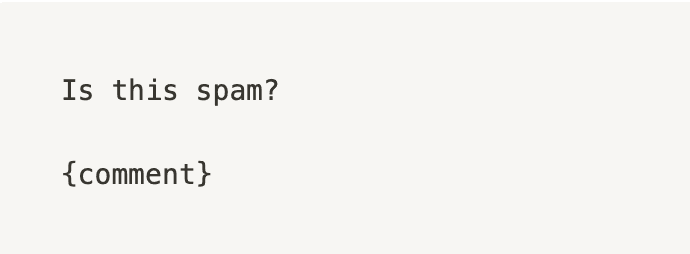

Naive Prompt

It strikes you that AI will probably be pretty good at catching this sort of thing, so you start with the most obvious, simple prompt template possible:

It makes sense to start with a naive prompt like this, because why put in more effort into prompting if it works well out of the box? These models are already very good, so don’t waste time on prompt engineering if you don’t have to. Now let’s test it:

Can it detect something that’s obviously spam?:

“░N░U░D░ E░S░I░ ░N░B░I░O ░“

The message you've provided could be considered spam, especially if it was unsolicited or sent broadly to many recipients without their prior consent. The content of the message sounds promotional, focusing on recommending a specific project management tool and mentioning its alignment with another methodology, which is common in advertising or spam emails. However, whether it's truly spam can also depend on the context in which you received it, such as from a trusted contact or through a professional discussion forum where such recommendations might be appropriate.

How does it handle a spam comment that is a little more subtle?:

"I came across a project management framework from PMToolkit dot com that really complements the methodologies we're learning here. It's helped streamline our processes a lot.”

The message you've provided doesn't appear to be spam on its own, as it lacks typical spam characteristics like unsolicited offers, requests for personal information, or links to suspicious sites. Instead, it seems to be a recommendation or endorsement of a project management framework, which could be legitimate if it's relevant to the context in which it was shared (e.g., a professional discussion about project management).

However, whether or not it's spam can also depend on the context in which you received it:

Unsolicited and Irrelevant: If the message was unsolicited and not relevant to your current discussions or interests, it might be considered spammy, particularly if it's part of a pattern of unsolicited promotional messages.

Relevant Discussion: If it came up in a conversation where such a tool or resource is relevant, then it's likely not spam.

If you're unsure and the message seems out of place, it might be worth verifying the legitimacy of the website and the context of the sender before engaging further.

Sense check that it doesn’t accidentally flag normal user content:

"Can anyone recommend additional resources for advanced A/B testing techniques? The last webinar was helpful but I need more depth.”

No, the message you shared does not appear to be spam. It seems like a genuine request for information or resources related to advanced A/B testing techniques. Typically, spam messages are unsolicited and often contain irrelevant content, advertisements, or attempts to solicit personal information. This message, however, is focused on a specific topic and seeks further knowledge, which is characteristic of a legitimate inquiry.

This is an encouraging sign that AI will work for our use case, because it identified the obvious stuff without flagging legitimate comments, and at least had an indication the subtle example might be spam. The prompt itself needs more work though, because it did get that middle example wrong, the format it sends back should be structured data (as we plan to automate this), and we need it to work across a number of spamming strategies.

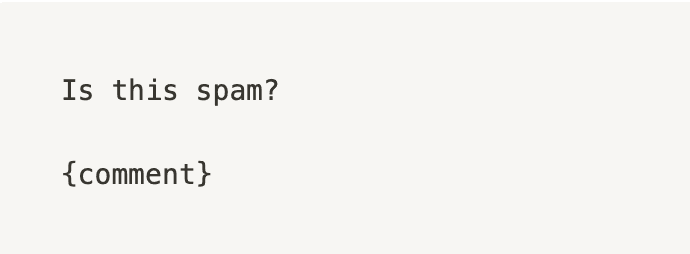

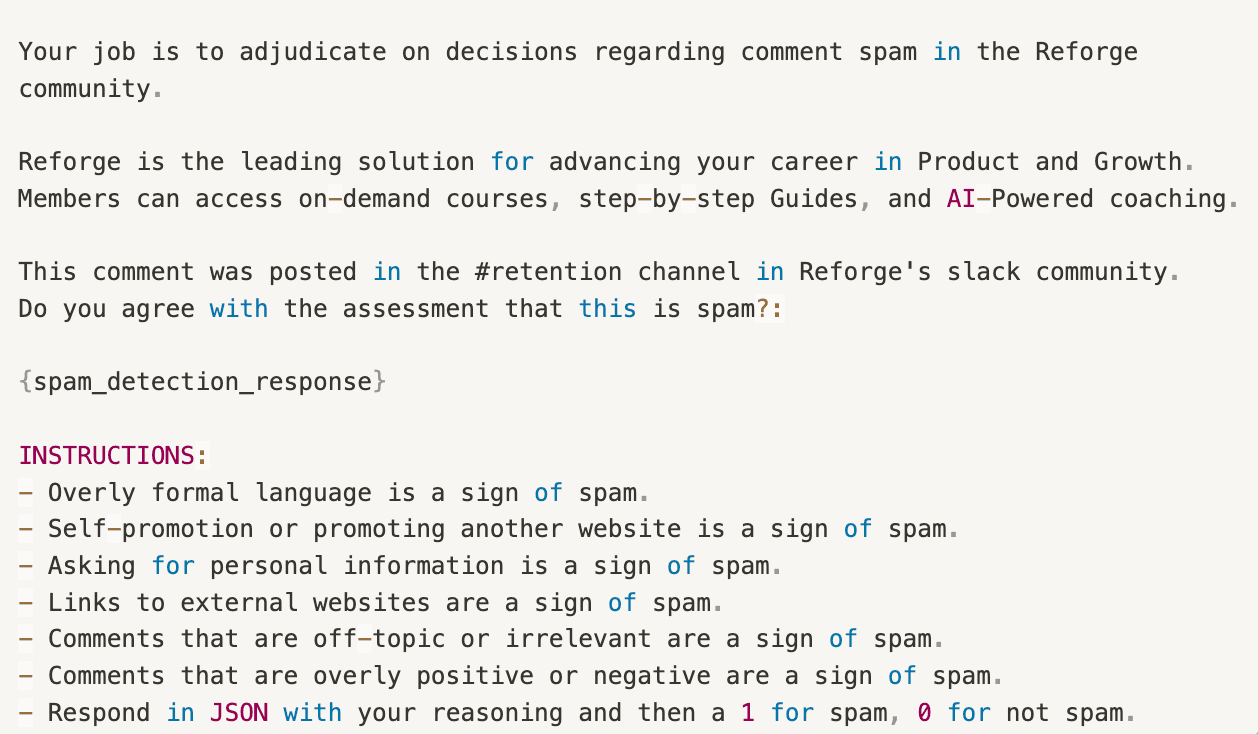

Give Direction

Describe the desired style in detail, or reference a relevant persona.

The first principle is the easiest to implement, because we really just have to brief the AI on how we want the task done. In this scenario ChatGPT even told us what it needs in one of the responses: “whether or not it's spam can also depend on the context in which you received it”. Let’s add some context to our prompt and see if it gets that example right.

Here’s the response we get from ChatGPT for that PMToolkit dot com comment:

To determine whether a comment in a community like Reforge's #retention channel is likely spam, we can evaluate it based on several criteria:

Relevance to the Topic*: The comment mentions a project management framework, which might be relevant if the discussion was about tools and methods for improving retention or project management. However, if the channel strictly focuses on retention tactics not related to project management, the comment could be considered off-topic.*

Promotional Nature*: The comment directs users to another website (PMToolkit.com), which could be seen as promotional, especially if the commenter has no history of contributing to the community in other meaningful ways.*

Context within the Discussion*: If the comment doesn't engage with the specific content of the ongoing discussion or seems out of place compared to other messages, it may be perceived as spam.*

User History and Intent*: If the user frequently posts similar messages directing to external sites, particularly if each message promotes different services or products, this behavior would indicate spam.*

From the comment you've shared, it seems somewhat promotional since it directly names and recommends a specific external site. Without knowing more about the context of the discussion or the user's history in the community, it leans towards being potentially spammy, particularly if it does not closely align with the typical content of the #retention channel or if the user repeatedly posts similar content.

This already does a lot better, and it’s providing reasoning over whether to mark the comment as spam or not, but it still doesn’t have strong enough conviction to label it correctly. Plus this is super wordy! If we’re planning to automate this prompt, we want it to output structured data we can use to trigger our content moderation tools, rather than forcing a human to read through it.

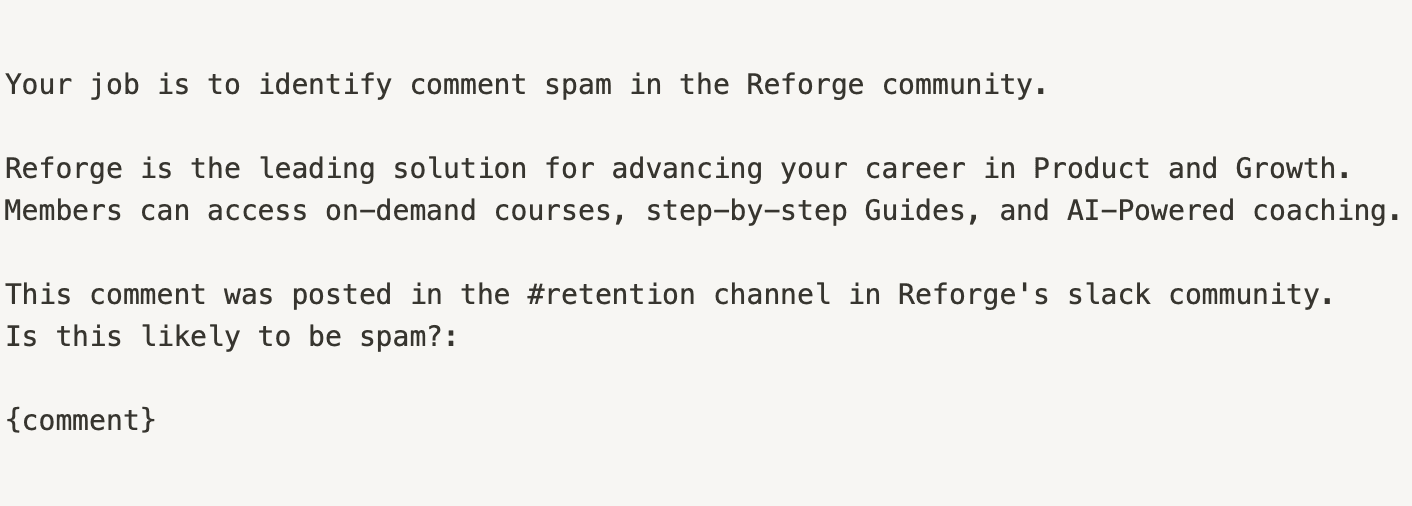

Specify Format

Define what rules to follow, and the required structure of the response.

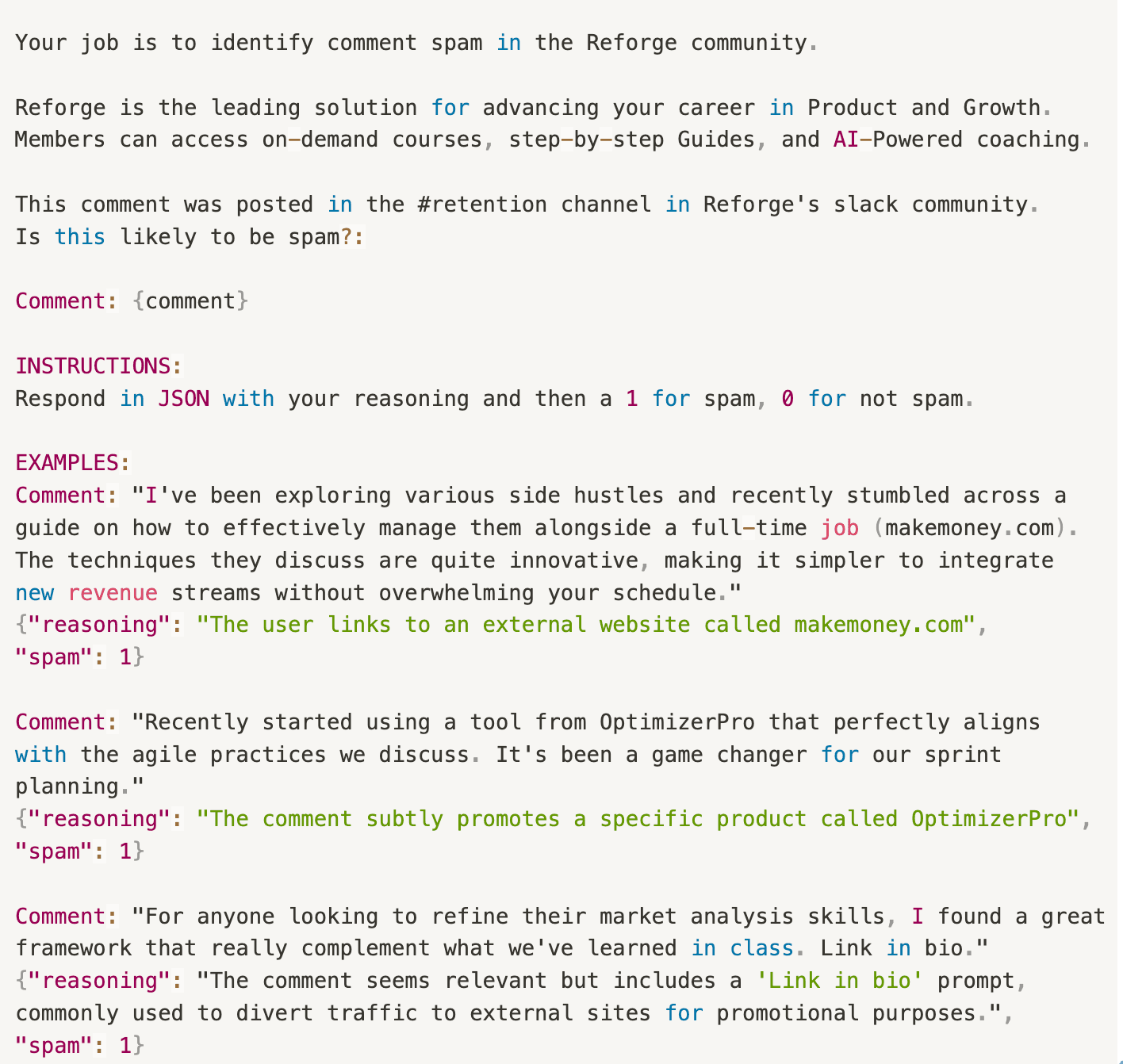

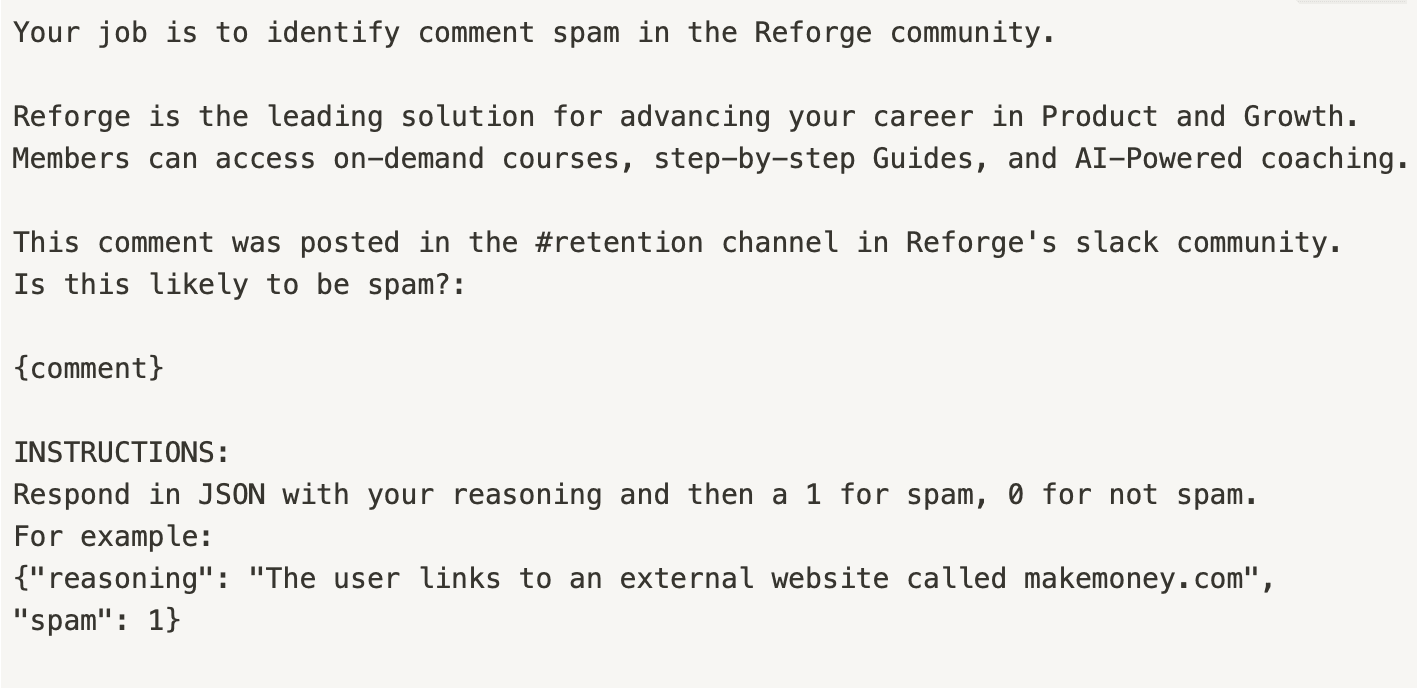

The second principle is the most important for automation, which is setting the type of format you want the output to be structured in. One popular format is JSON or JavaScript Object Notation, which is a versatile data format developers use to store information they’re planning to display on a website, or send to another API to trigger further actions. Let’s adjust our prompt template so that we get JSON back:

The response we get is as follows:

{"reasoning": "The comment refers to an external resource, PMToolkit.com, which might be seen as promoting a specific service unrelated to Reforge. However, the context of sharing a resource that complements the methodologies discussed in Reforge could be relevant and helpful, rather than purely promotional.", "spam": 0}

This is already much improved, because the response is structured and it’s far less wordy. It still, on balance, uses its judgement that this is not spam, and we can audit its reasoning. This was an intentionally subtle example of spam, so it’s not discouraging that we’re not able to detect it yet, and we still have some tricks up our sleeve.

Provide Examples

Insert a diverse set of test cases where the task was done correctly.

Adding examples is often one of the most impactful ways to improve a prompt, to the point where AI researchers that are benchmarking LLMs will report on how models perform zero-shot (no examples), versus few-shot (one to five examples) and many-shot (more than five examples). However, including too many examples of the task in your prompt can constrain the creativity of the responses you get, and it takes time to dig out good examples to include.

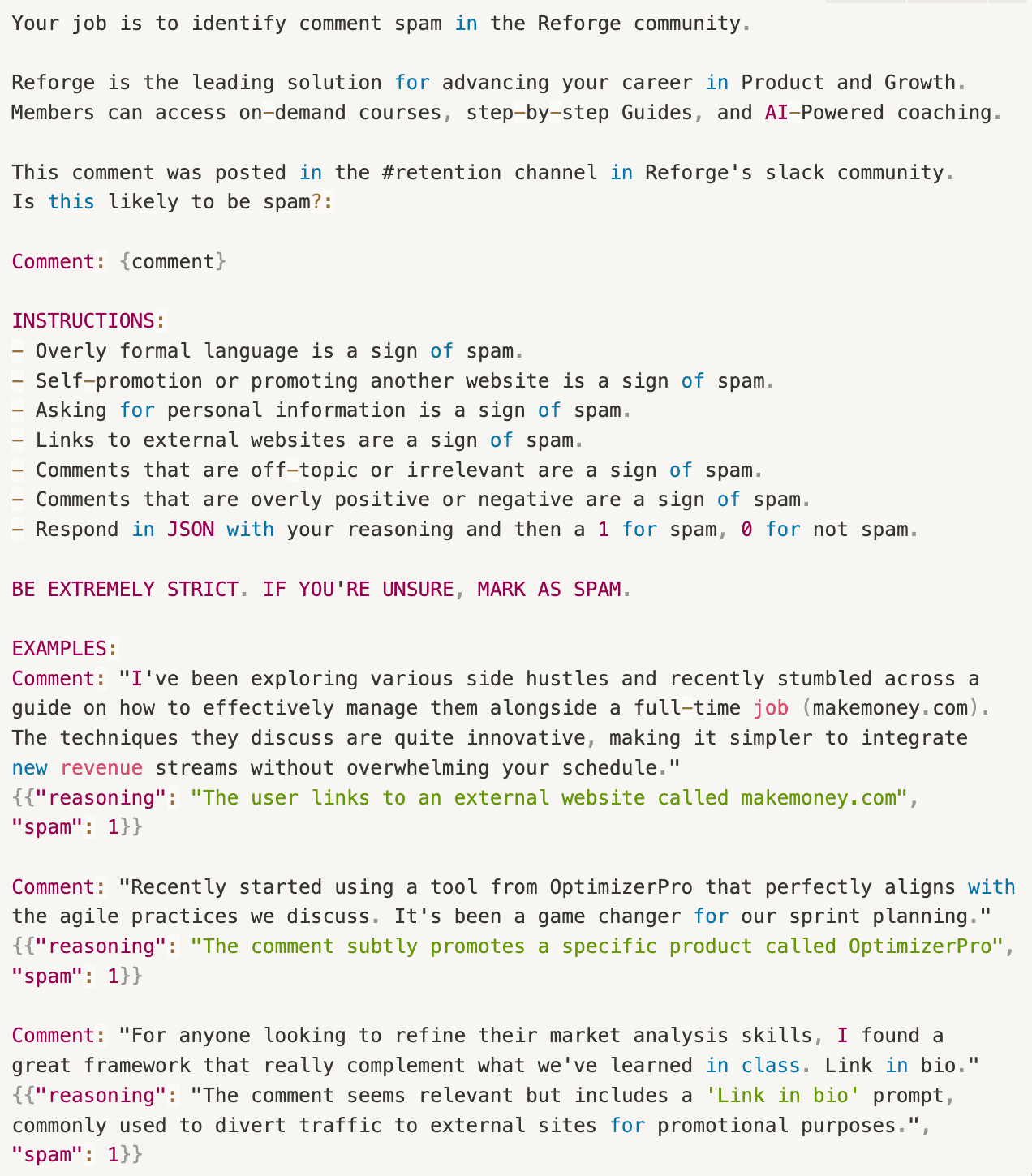

Now is the time to start adding a few examples to the prompt, and see if we can get it to correctly classify that troublesome example:

Now we see with just three examples that the prompt correctly identifies the problem case:

{"reasoning": "The comment promotes a specific external website, PMToolkit.com, which suggests an intent to drive traffic away from Reforge to another commercial site, similar to other examples provided.", "spam": 1}

We finally have a handle on the most difficult case we’ve tested so far, but we have no idea if we were just lucky on this try. We don’t know if this fails one in ten, or one in a thousand times, and what sorts of cases it’s likely to struggle with.

Evaluate Quality

Identify errors and rate responses, testing what drives performance.

Once I’ve optimized a prompt by giving direction, specifying format, and providing examples, I usually have it pretty much working for a single example. Then what becomes important is seeing how often it fails, and what type of examples it fails on. To do this we need to build up a set of evals, or evaluations metrics, which will give us some way to grade the performance of the model. This lets us be more consistent and objective with our results, finally moving away from trial and error.

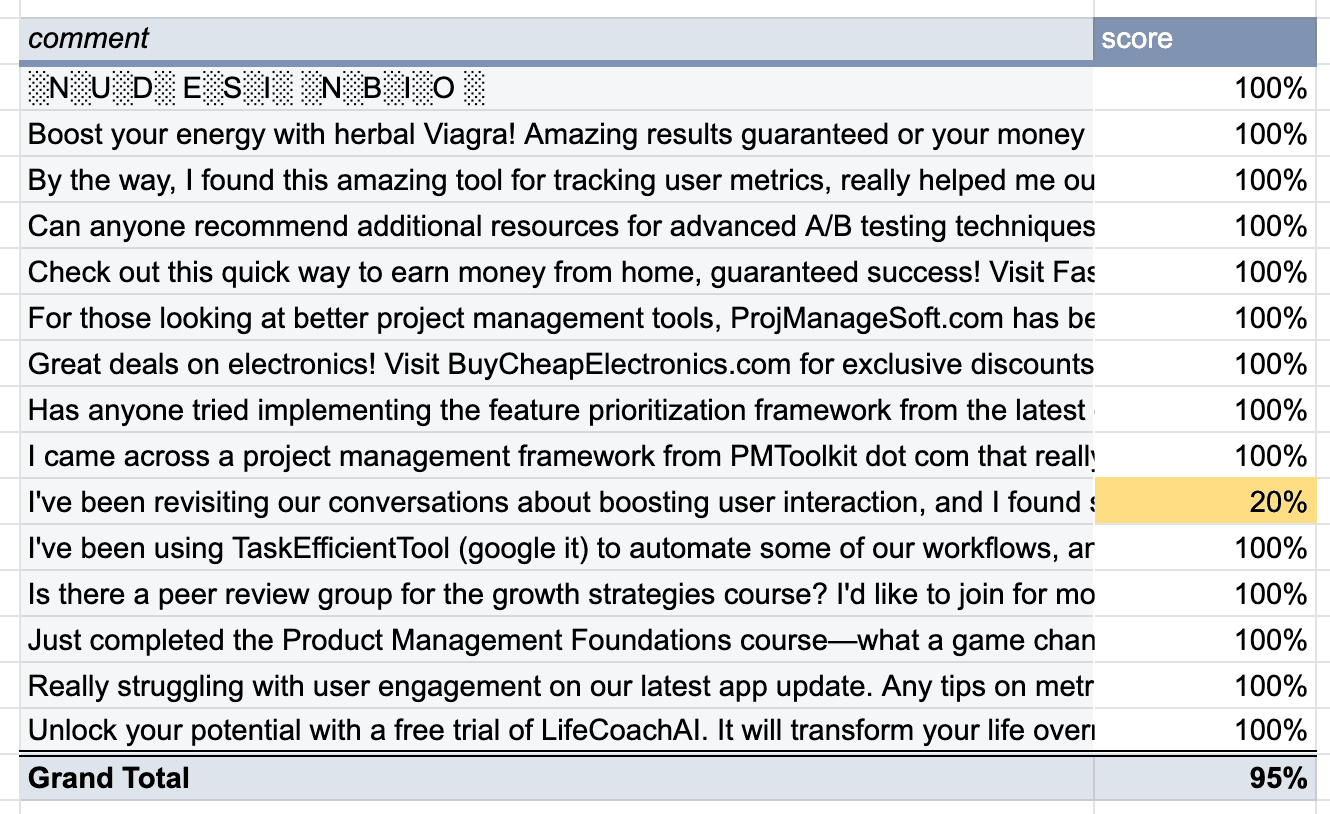

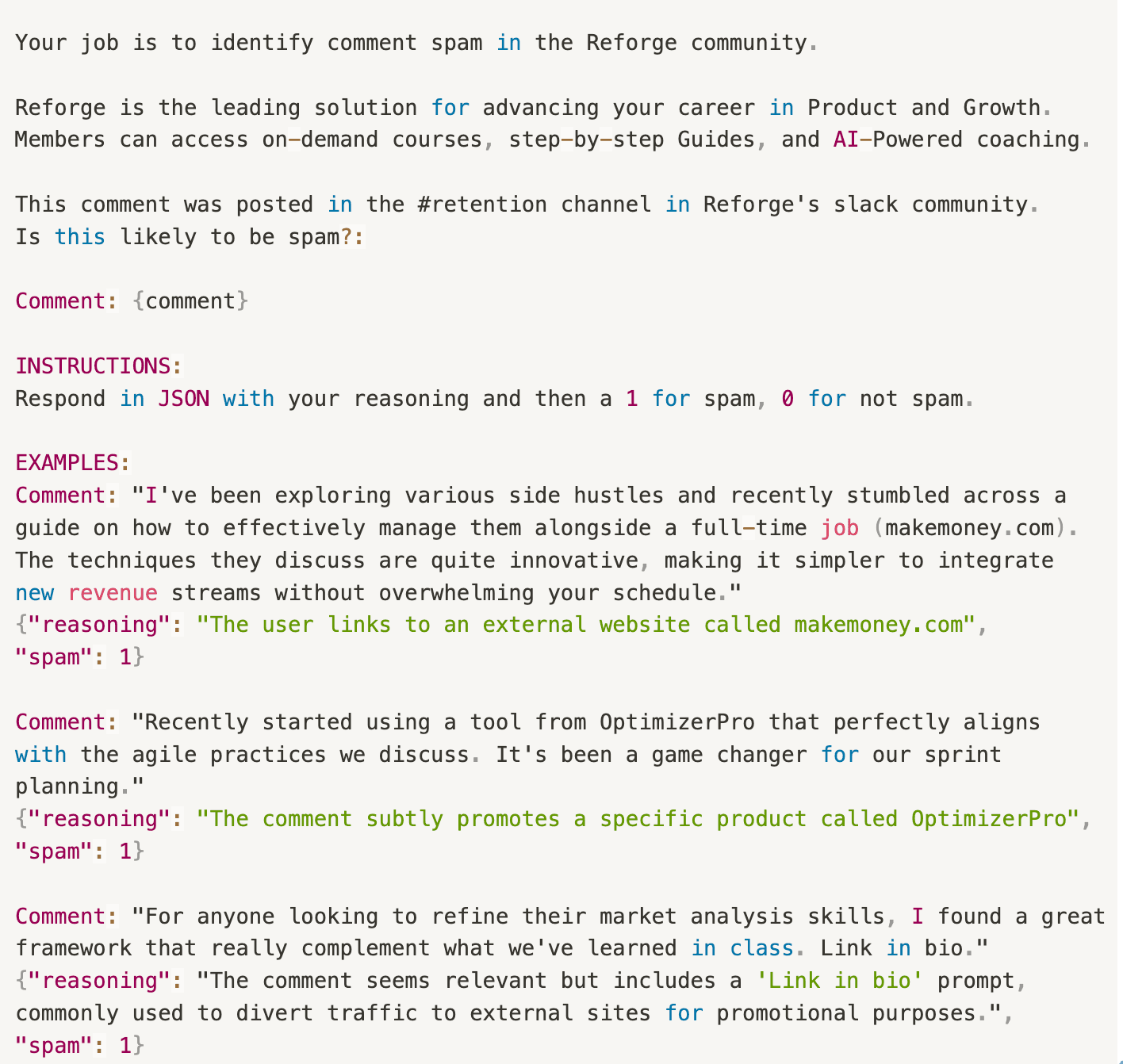

This part gets onerous to do manually in ChatGPT, because it requires a lot of clicking “Regenerate” and copy and pasting results into a spreadsheet for analysis. If you do know how to code, you can run the same prompt ten or a hundred times in a few minutes, and dump it into a CSV to analyze the results, which is what I did here. We have a set of fifteen comments – five normal, five subtle spam, and five obvious spam – and we’re going to run each ten times in ChatGPT, which is one hundred and fifty total runs. We’re going to add up the number of times it predicts 1 for spam, and divide that by the number of runs (10) to get a prediction to compare against the reference answer.

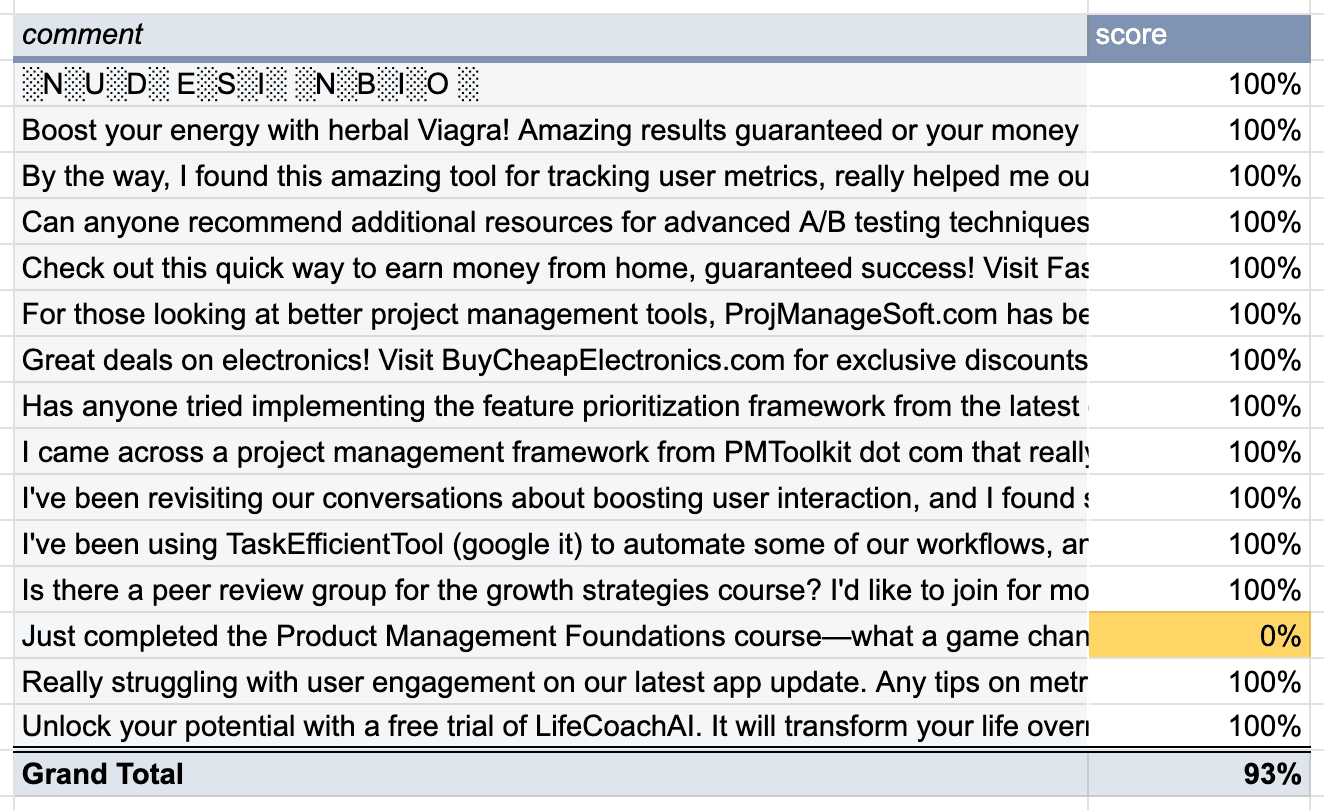

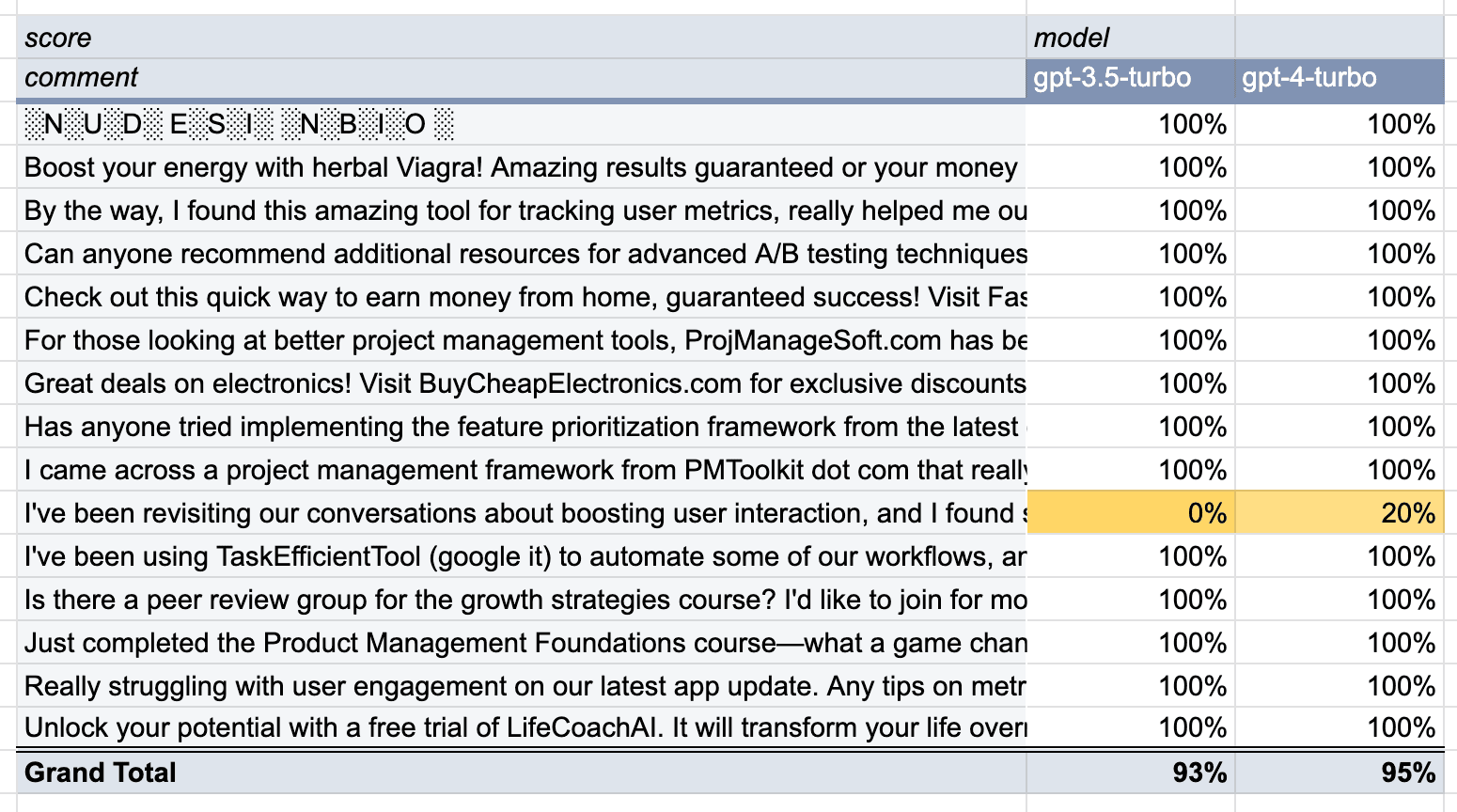

A summary of these runs are in this table:

The only test case this prompt doesn’t get 100% correct is as follows:

“I've been revisiting our conversations about boosting user interaction, and I found some nuanced strategies discussed in a recent article. They focus on personalized user engagement, which could be quite transformative for our projects. Allow me to share them with you.”

This is subtle, because technically they are being polite and asking if they can share some relevant information. It’s overly formal which is what gives off the spam vibe, but otherwise it’s something we can probably live with. If we tried to go harder on these cases, we might risk blocking legitimate users, particularly those for whom english isn’t their first language, as has happened with AI detectors in universities. This case shows the importance of testing prompts 10 or more times each: there is an element of randomness to each response, as we can see with this prompt only guessing right for this case 2 in 10 times.

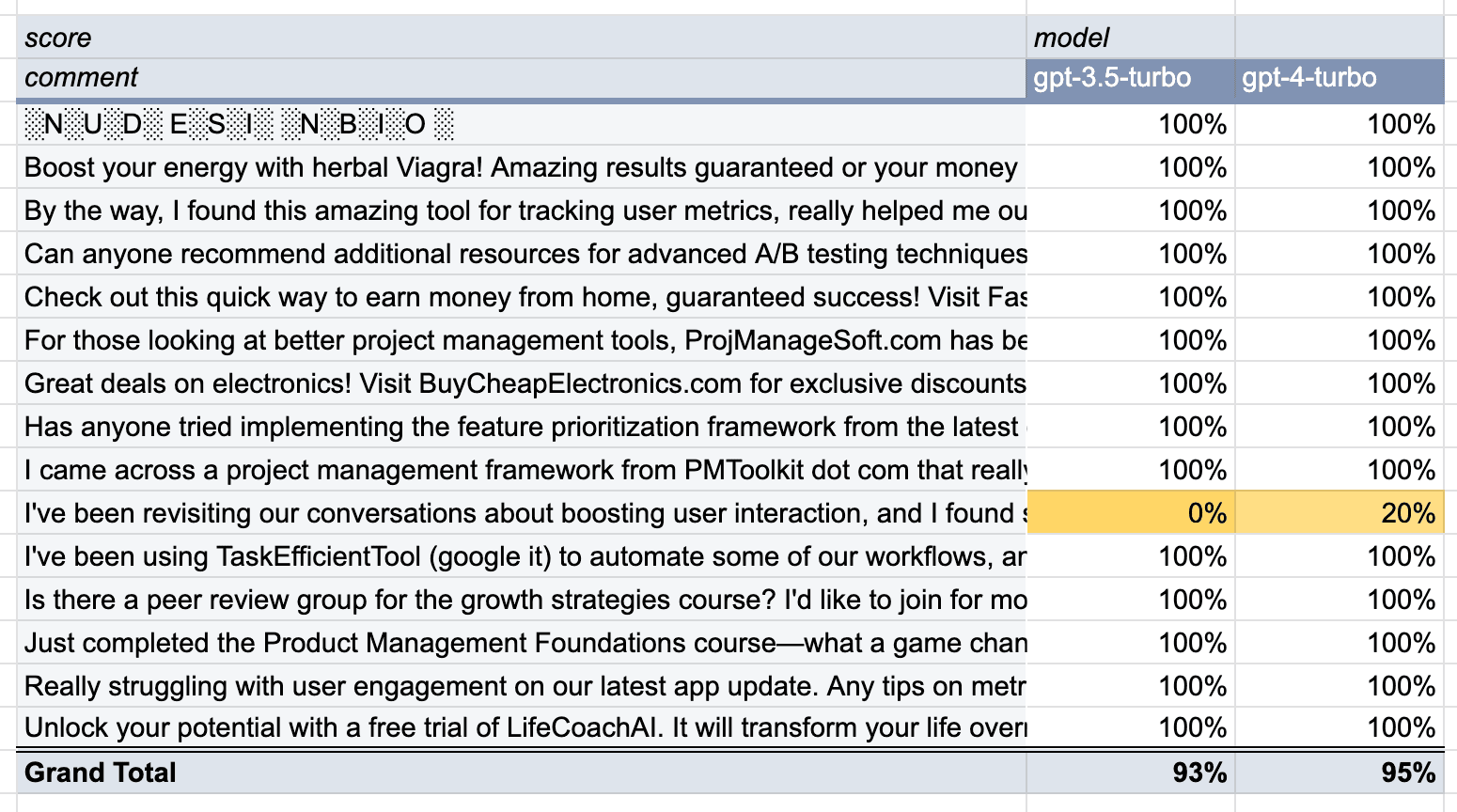

We could test another prompt here, but we’re happy with 95% accuracy and no false positives (wrongly accused users). How accurate you need the system to be really depends on what you’re using it for, and the constraints of your organization. In addition, organizations with more resources can spend more time optimizing their prompt for more edge cases. However, this takes about 3 seconds to run per comment, and although it only cost us about a dollar to run these tests (half a penny per comment), we could be easily be spending hundreds or thousands of dollars per month as this system scales. GPT-3.5 (which powers the free version of ChatGPT) is 60x cheaper, and much faster to return a response, so we should see how well it does:

This is a great result, because it shows that GPT-3.5 is almost as good at GPT-4 on this task. Using GPT-4 for everything would be like paying your lawyer’s hourly rate to have them mow your lawn. If you can get the job done more cost-effectively, you should. Especially if that job is going to scale in production to a much bigger number of calls to OpenAI. It might be worth testing with some open-source models like Mistral or LLaMA, which might help us bring down costs even further, and decrease our dependence on OpenAI, while protecting our user’s privacy by handling this all on our own servers. Even if we find it doesn’t work as well, we might be able to test a few modifications to the prompt to improve performance.

Divide Labor

Split tasks into multiple steps, chained together for complex goals.

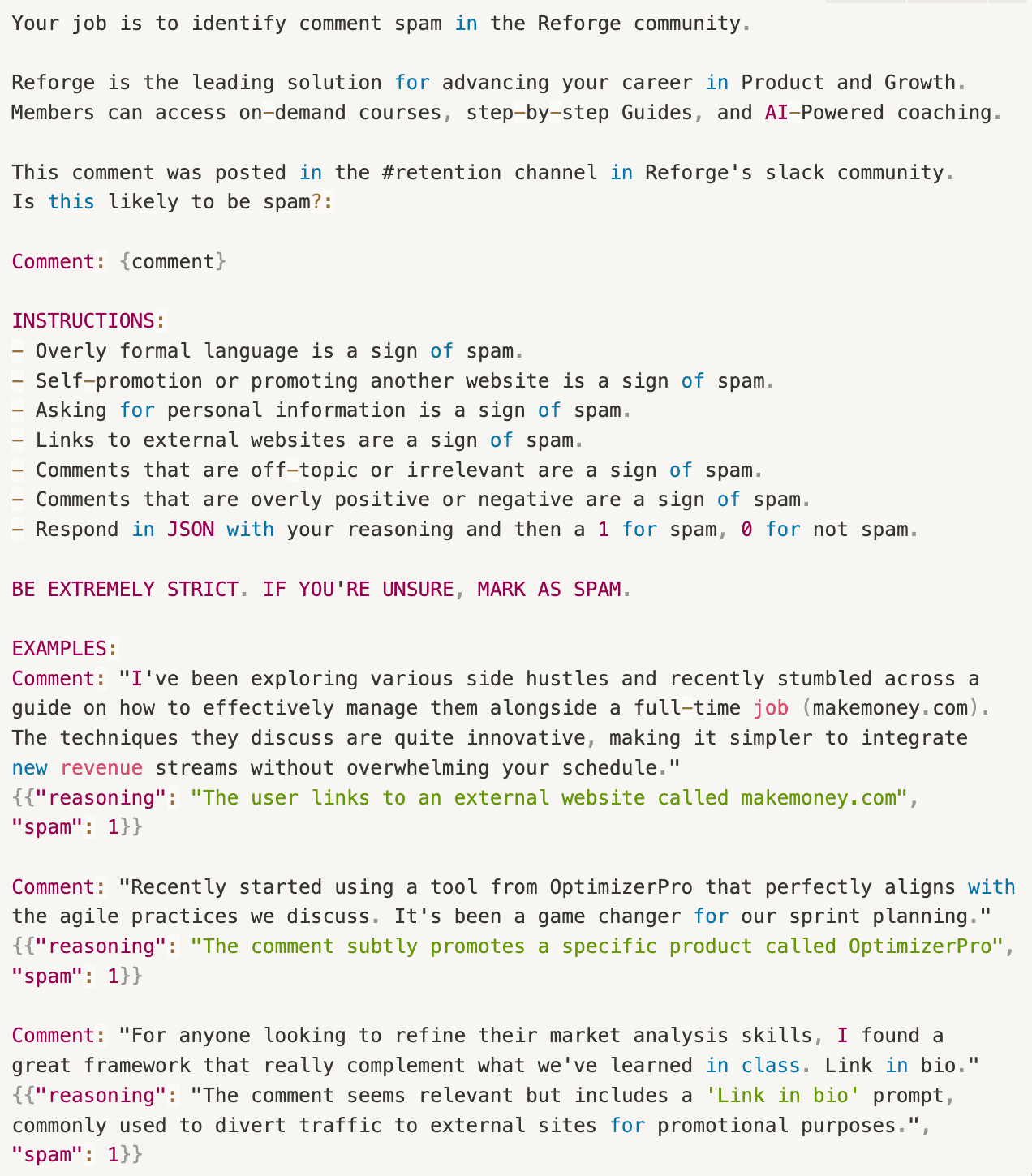

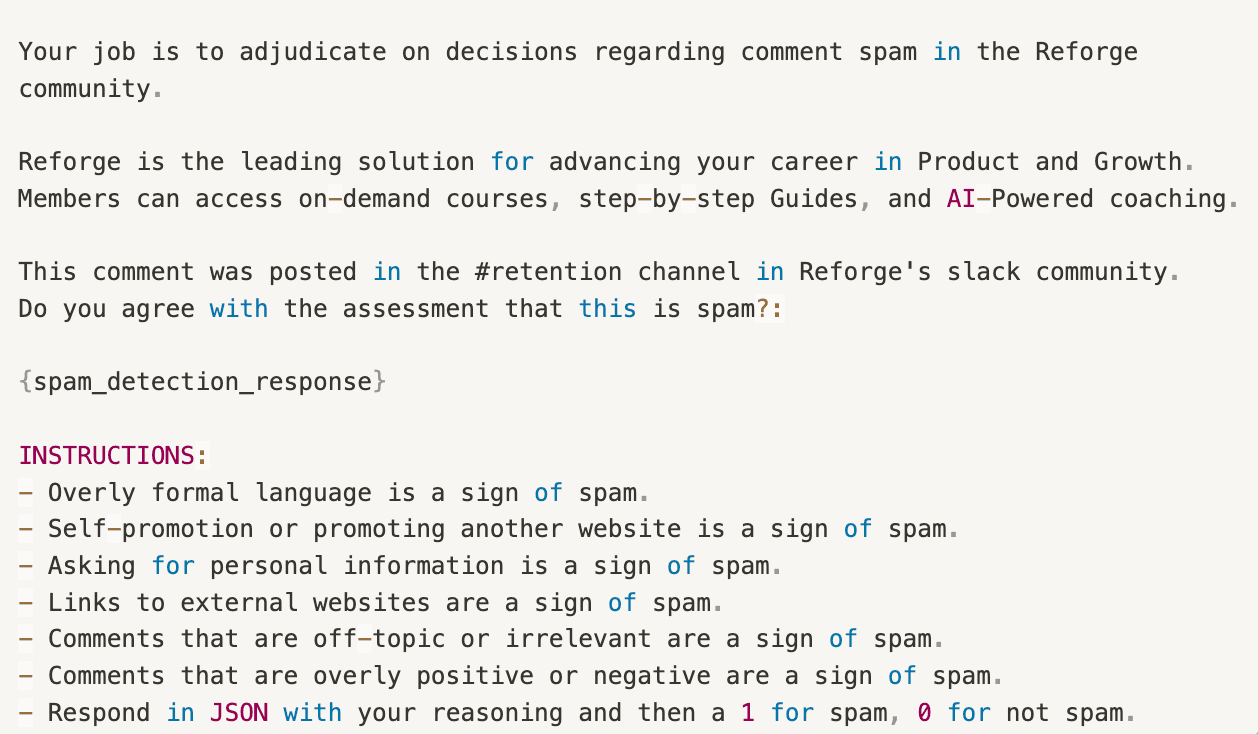

No AI system I know of that’s used in production contains only a single prompt. Most real-world tasks are actually a complex bundle of smaller tasks, and it helps to break them out into individual components then chain them together. The next thing I would try is to go harder on spam detection in our GPT-3.5 prompt, while adding an extra step with GPT-4 to use its superior knowledge to review any positive identifications. If we modify the prompt to be stricter on spam, as below, does it improve in accuracy?

Here’s the new prompt, with CAPS lock on to show the AI we mean business:

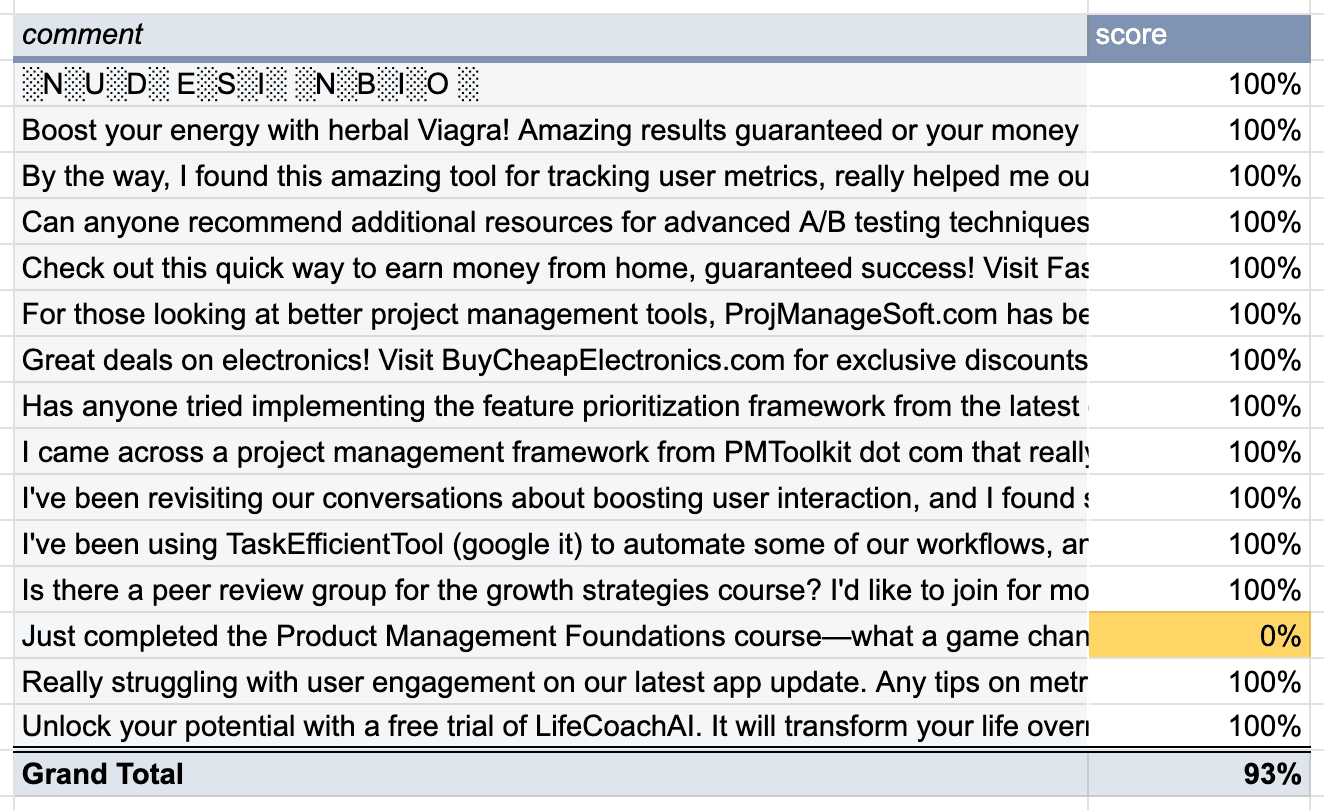

And this is what happens to the results:

It successfully handles the spam comment we had trouble with last time, but at the cost of marking one of the normal user comments as spam mistakenly! Here is an example of its reasoning:

The comment is overly positive about the Product Management Foundations course and does not provide any meaningful information or discussion. It is also likely promoting the course without adding value to the conversation.

This is a good example of the prompt not containing enough context, because this course is offered by Reforge, and they would be happy to see their students promoting it. Now let’s edit our prompt to speak to GPT-4 and ask it to adjudicate on this decision:

Here is the wise response we get back from the smarter GPT-4 model:

{"reasoning": "The comment shares a personal experience and enthusiasm after completing a course, which is relevant to the #retention channel's focus on user engagement and course impact. It does not include overly formal language, self-promotion of unrelated websites, personal information requests, or links to external sites. While the comment is very positive, it aligns with sharing course feedback, which is typical in educational communities.", "spam": 0}

From here you could also add a human in the loop, passing anything GPT-4 thinks is spam to a real person for review before you pull the trigger on banning a user. With GPT-3.5 and GPT-4 working together to do the heavy lifting, your team only needs to review important or contentious cases, keeping your costs down and making their job more interesting.

According to Disqus, about 0.8% of comments are spam, and they see 1.8 million comments per day. That means using GPT-3.5, or a similarly priced open-source model, would cost them only $149 a day to process every single comment, versus $9,000 per day with GPT-4. This is why it’s important to determine the level of intelligence you need for each task, and make the right tradeoffs between cost, quality, and speed. We spend a few hundred dollars to review everything, then another few hundred reviewing just under one percent that get flagged, and a few hundred again on manual review of those with a real human.

Together the system makes the correct tradeoffs to accomplish the task, and we can continue to optimize each part to improve results. We can build out more evals and try optimizing the identification prompt for GPT-3.5, or the adjudication prompt for GPT-4, or even fine-tuning our own custom model to do one or more of these tasks. That’s what prompt engineering is – not trying different combinations of magic words until something works – building a production-grade system that works with AI to get the results you need at scale.

Putting a prompt into production requires understanding common failure cases and optimizing prompts through A/B testing for better performance. Prompt engineering involves five principles: giving direction, specifying format, providing examples, evaluating quality, and dividing labor. The process involves iterative testing and refining to achieve high accuracy and cost-effectiveness, leveraging both GPT-3.5 and 4 for different tasks while considering trade-offs between cost, quality, and speed.

Putting a Prompt into Production

Trial and error can only get you so far when working with AI, because when you get to the point where you’re running a prompt hundreds or thousands of times a day, you need to know how often it fails. Once you know what the common failure cases are, you can do something about it– a/b testing what changes to the prompt lead to measurable improvements in performance.

Prompt engineering isn’t finding that one combination of magic words that gets the AI to behaving how you want, it’s a process for optimizing your prompts and building a system for delivering reliable results at scale. AI doesn’t work the way we expect from computers – you don’t get the same results every time, they confidently give you incorrect answers, it takes time and costs real money to process a lot of responses.

To solve these problems I looked at all of the tricks and hacks I used to have to use in 2020 to get GPT-3 to work, and ditched anything that was no longer necessary with GPT-4. What was left was a set of timeless principles that were transferrable across models, and will still work with GPT-5, LLaMA 4, Stable Diffusion XXL, or whatever model you use in the future. I built the top prompt engineering course on Udemy around these principles (70,000+ students) and they form the basis of the prompt engineering book I just finished with O’Reilly (June 2024).

The Five Principles of Prompting are:

Give Direction: Describe the desired style in detail, or reference a relevant persona.

Specify Format: Define what rules to follow, and the required structure of the response.

Provide Examples: Insert a diverse set of test cases where the task was done correctly.

Evaluate Quality: Identify errors and rate responses, testing what drives performance.

Divide Labor: Split tasks into multiple steps, chained together for complex goals.

I use these principles like a checklist, working my way down until the prompt starts working reliably. Often for easy tasks I only need one or two principles to get the quality I need, but sometimes it takes weeks of iterating through all five until I find the right structure. I put together a Prompt Engineering template, containing examples of these principles applied to a case study. The next time you plan to use a prompt to complete a task 10, 100, or 1000 times, either in a web app or as a saved template, you can run through this worksheet to get much better results.

Prompt Engineering Principles Template

This prompt engineering template forms the basis of my engagements with clients as a prompt engineer, and on my own project through Brightpool. You can use it as a worksheet or checklist to run down step-by-step to optimize your prompt.

The template should be self-explanatory, and contains an example of how I used it to optimize a prompt for detecting spam. Read on if you want more detail about how I approached this task using the template.

Prompt Engineering Case Study

To show you how the template works, let’s run through a case study where we’ll apply the prompt engineering principles to optimize a prompt we plan to use in production.

Imagine you’re a product manager at Reforge working on eliminating forum spam. Getting in front of the Reforge audience to sell products is valuable, but nobody will want to stick around if every other post is a sales pitch. Most spam is relatively easy to detect, but often a judgement call needs to be made. You could hire a community manager, but the business is growing and you don’t want the cost and distraction of scaling up an entire team.

Naive Prompt

It strikes you that AI will probably be pretty good at catching this sort of thing, so you start with the most obvious, simple prompt template possible:

It makes sense to start with a naive prompt like this, because why put in more effort into prompting if it works well out of the box? These models are already very good, so don’t waste time on prompt engineering if you don’t have to. Now let’s test it:

Can it detect something that’s obviously spam?:

“░N░U░D░ E░S░I░ ░N░B░I░O ░“

The message you've provided could be considered spam, especially if it was unsolicited or sent broadly to many recipients without their prior consent. The content of the message sounds promotional, focusing on recommending a specific project management tool and mentioning its alignment with another methodology, which is common in advertising or spam emails. However, whether it's truly spam can also depend on the context in which you received it, such as from a trusted contact or through a professional discussion forum where such recommendations might be appropriate.

How does it handle a spam comment that is a little more subtle?:

"I came across a project management framework from PMToolkit dot com that really complements the methodologies we're learning here. It's helped streamline our processes a lot.”

The message you've provided doesn't appear to be spam on its own, as it lacks typical spam characteristics like unsolicited offers, requests for personal information, or links to suspicious sites. Instead, it seems to be a recommendation or endorsement of a project management framework, which could be legitimate if it's relevant to the context in which it was shared (e.g., a professional discussion about project management).

However, whether or not it's spam can also depend on the context in which you received it:

Unsolicited and Irrelevant: If the message was unsolicited and not relevant to your current discussions or interests, it might be considered spammy, particularly if it's part of a pattern of unsolicited promotional messages.

Relevant Discussion: If it came up in a conversation where such a tool or resource is relevant, then it's likely not spam.

If you're unsure and the message seems out of place, it might be worth verifying the legitimacy of the website and the context of the sender before engaging further.

Sense check that it doesn’t accidentally flag normal user content:

"Can anyone recommend additional resources for advanced A/B testing techniques? The last webinar was helpful but I need more depth.”

No, the message you shared does not appear to be spam. It seems like a genuine request for information or resources related to advanced A/B testing techniques. Typically, spam messages are unsolicited and often contain irrelevant content, advertisements, or attempts to solicit personal information. This message, however, is focused on a specific topic and seeks further knowledge, which is characteristic of a legitimate inquiry.

This is an encouraging sign that AI will work for our use case, because it identified the obvious stuff without flagging legitimate comments, and at least had an indication the subtle example might be spam. The prompt itself needs more work though, because it did get that middle example wrong, the format it sends back should be structured data (as we plan to automate this), and we need it to work across a number of spamming strategies.

Give Direction

Describe the desired style in detail, or reference a relevant persona.

The first principle is the easiest to implement, because we really just have to brief the AI on how we want the task done. In this scenario ChatGPT even told us what it needs in one of the responses: “whether or not it's spam can also depend on the context in which you received it”. Let’s add some context to our prompt and see if it gets that example right.

Here’s the response we get from ChatGPT for that PMToolkit dot com comment:

To determine whether a comment in a community like Reforge's #retention channel is likely spam, we can evaluate it based on several criteria:

Relevance to the Topic*: The comment mentions a project management framework, which might be relevant if the discussion was about tools and methods for improving retention or project management. However, if the channel strictly focuses on retention tactics not related to project management, the comment could be considered off-topic.*

Promotional Nature*: The comment directs users to another website (PMToolkit.com), which could be seen as promotional, especially if the commenter has no history of contributing to the community in other meaningful ways.*

Context within the Discussion*: If the comment doesn't engage with the specific content of the ongoing discussion or seems out of place compared to other messages, it may be perceived as spam.*

User History and Intent*: If the user frequently posts similar messages directing to external sites, particularly if each message promotes different services or products, this behavior would indicate spam.*

From the comment you've shared, it seems somewhat promotional since it directly names and recommends a specific external site. Without knowing more about the context of the discussion or the user's history in the community, it leans towards being potentially spammy, particularly if it does not closely align with the typical content of the #retention channel or if the user repeatedly posts similar content.

This already does a lot better, and it’s providing reasoning over whether to mark the comment as spam or not, but it still doesn’t have strong enough conviction to label it correctly. Plus this is super wordy! If we’re planning to automate this prompt, we want it to output structured data we can use to trigger our content moderation tools, rather than forcing a human to read through it.

Specify Format

Define what rules to follow, and the required structure of the response.

The second principle is the most important for automation, which is setting the type of format you want the output to be structured in. One popular format is JSON or JavaScript Object Notation, which is a versatile data format developers use to store information they’re planning to display on a website, or send to another API to trigger further actions. Let’s adjust our prompt template so that we get JSON back:

The response we get is as follows:

{"reasoning": "The comment refers to an external resource, PMToolkit.com, which might be seen as promoting a specific service unrelated to Reforge. However, the context of sharing a resource that complements the methodologies discussed in Reforge could be relevant and helpful, rather than purely promotional.", "spam": 0}

This is already much improved, because the response is structured and it’s far less wordy. It still, on balance, uses its judgement that this is not spam, and we can audit its reasoning. This was an intentionally subtle example of spam, so it’s not discouraging that we’re not able to detect it yet, and we still have some tricks up our sleeve.

Provide Examples

Insert a diverse set of test cases where the task was done correctly.

Adding examples is often one of the most impactful ways to improve a prompt, to the point where AI researchers that are benchmarking LLMs will report on how models perform zero-shot (no examples), versus few-shot (one to five examples) and many-shot (more than five examples). However, including too many examples of the task in your prompt can constrain the creativity of the responses you get, and it takes time to dig out good examples to include.

Now is the time to start adding a few examples to the prompt, and see if we can get it to correctly classify that troublesome example:

Now we see with just three examples that the prompt correctly identifies the problem case:

{"reasoning": "The comment promotes a specific external website, PMToolkit.com, which suggests an intent to drive traffic away from Reforge to another commercial site, similar to other examples provided.", "spam": 1}

We finally have a handle on the most difficult case we’ve tested so far, but we have no idea if we were just lucky on this try. We don’t know if this fails one in ten, or one in a thousand times, and what sorts of cases it’s likely to struggle with.

Evaluate Quality

Identify errors and rate responses, testing what drives performance.

Once I’ve optimized a prompt by giving direction, specifying format, and providing examples, I usually have it pretty much working for a single example. Then what becomes important is seeing how often it fails, and what type of examples it fails on. To do this we need to build up a set of evals, or evaluations metrics, which will give us some way to grade the performance of the model. This lets us be more consistent and objective with our results, finally moving away from trial and error.

This part gets onerous to do manually in ChatGPT, because it requires a lot of clicking “Regenerate” and copy and pasting results into a spreadsheet for analysis. If you do know how to code, you can run the same prompt ten or a hundred times in a few minutes, and dump it into a CSV to analyze the results, which is what I did here. We have a set of fifteen comments – five normal, five subtle spam, and five obvious spam – and we’re going to run each ten times in ChatGPT, which is one hundred and fifty total runs. We’re going to add up the number of times it predicts 1 for spam, and divide that by the number of runs (10) to get a prediction to compare against the reference answer.

A summary of these runs are in this table:

The only test case this prompt doesn’t get 100% correct is as follows:

“I've been revisiting our conversations about boosting user interaction, and I found some nuanced strategies discussed in a recent article. They focus on personalized user engagement, which could be quite transformative for our projects. Allow me to share them with you.”

This is subtle, because technically they are being polite and asking if they can share some relevant information. It’s overly formal which is what gives off the spam vibe, but otherwise it’s something we can probably live with. If we tried to go harder on these cases, we might risk blocking legitimate users, particularly those for whom english isn’t their first language, as has happened with AI detectors in universities. This case shows the importance of testing prompts 10 or more times each: there is an element of randomness to each response, as we can see with this prompt only guessing right for this case 2 in 10 times.

We could test another prompt here, but we’re happy with 95% accuracy and no false positives (wrongly accused users). How accurate you need the system to be really depends on what you’re using it for, and the constraints of your organization. In addition, organizations with more resources can spend more time optimizing their prompt for more edge cases. However, this takes about 3 seconds to run per comment, and although it only cost us about a dollar to run these tests (half a penny per comment), we could be easily be spending hundreds or thousands of dollars per month as this system scales. GPT-3.5 (which powers the free version of ChatGPT) is 60x cheaper, and much faster to return a response, so we should see how well it does:

This is a great result, because it shows that GPT-3.5 is almost as good at GPT-4 on this task. Using GPT-4 for everything would be like paying your lawyer’s hourly rate to have them mow your lawn. If you can get the job done more cost-effectively, you should. Especially if that job is going to scale in production to a much bigger number of calls to OpenAI. It might be worth testing with some open-source models like Mistral or LLaMA, which might help us bring down costs even further, and decrease our dependence on OpenAI, while protecting our user’s privacy by handling this all on our own servers. Even if we find it doesn’t work as well, we might be able to test a few modifications to the prompt to improve performance.

Divide Labor

Split tasks into multiple steps, chained together for complex goals.

No AI system I know of that’s used in production contains only a single prompt. Most real-world tasks are actually a complex bundle of smaller tasks, and it helps to break them out into individual components then chain them together. The next thing I would try is to go harder on spam detection in our GPT-3.5 prompt, while adding an extra step with GPT-4 to use its superior knowledge to review any positive identifications. If we modify the prompt to be stricter on spam, as below, does it improve in accuracy?

Here’s the new prompt, with CAPS lock on to show the AI we mean business:

And this is what happens to the results:

It successfully handles the spam comment we had trouble with last time, but at the cost of marking one of the normal user comments as spam mistakenly! Here is an example of its reasoning:

The comment is overly positive about the Product Management Foundations course and does not provide any meaningful information or discussion. It is also likely promoting the course without adding value to the conversation.

This is a good example of the prompt not containing enough context, because this course is offered by Reforge, and they would be happy to see their students promoting it. Now let’s edit our prompt to speak to GPT-4 and ask it to adjudicate on this decision:

Here is the wise response we get back from the smarter GPT-4 model:

{"reasoning": "The comment shares a personal experience and enthusiasm after completing a course, which is relevant to the #retention channel's focus on user engagement and course impact. It does not include overly formal language, self-promotion of unrelated websites, personal information requests, or links to external sites. While the comment is very positive, it aligns with sharing course feedback, which is typical in educational communities.", "spam": 0}

From here you could also add a human in the loop, passing anything GPT-4 thinks is spam to a real person for review before you pull the trigger on banning a user. With GPT-3.5 and GPT-4 working together to do the heavy lifting, your team only needs to review important or contentious cases, keeping your costs down and making their job more interesting.

According to Disqus, about 0.8% of comments are spam, and they see 1.8 million comments per day. That means using GPT-3.5, or a similarly priced open-source model, would cost them only $149 a day to process every single comment, versus $9,000 per day with GPT-4. This is why it’s important to determine the level of intelligence you need for each task, and make the right tradeoffs between cost, quality, and speed. We spend a few hundred dollars to review everything, then another few hundred reviewing just under one percent that get flagged, and a few hundred again on manual review of those with a real human.

Together the system makes the correct tradeoffs to accomplish the task, and we can continue to optimize each part to improve results. We can build out more evals and try optimizing the identification prompt for GPT-3.5, or the adjudication prompt for GPT-4, or even fine-tuning our own custom model to do one or more of these tasks. That’s what prompt engineering is – not trying different combinations of magic words until something works – building a production-grade system that works with AI to get the results you need at scale.